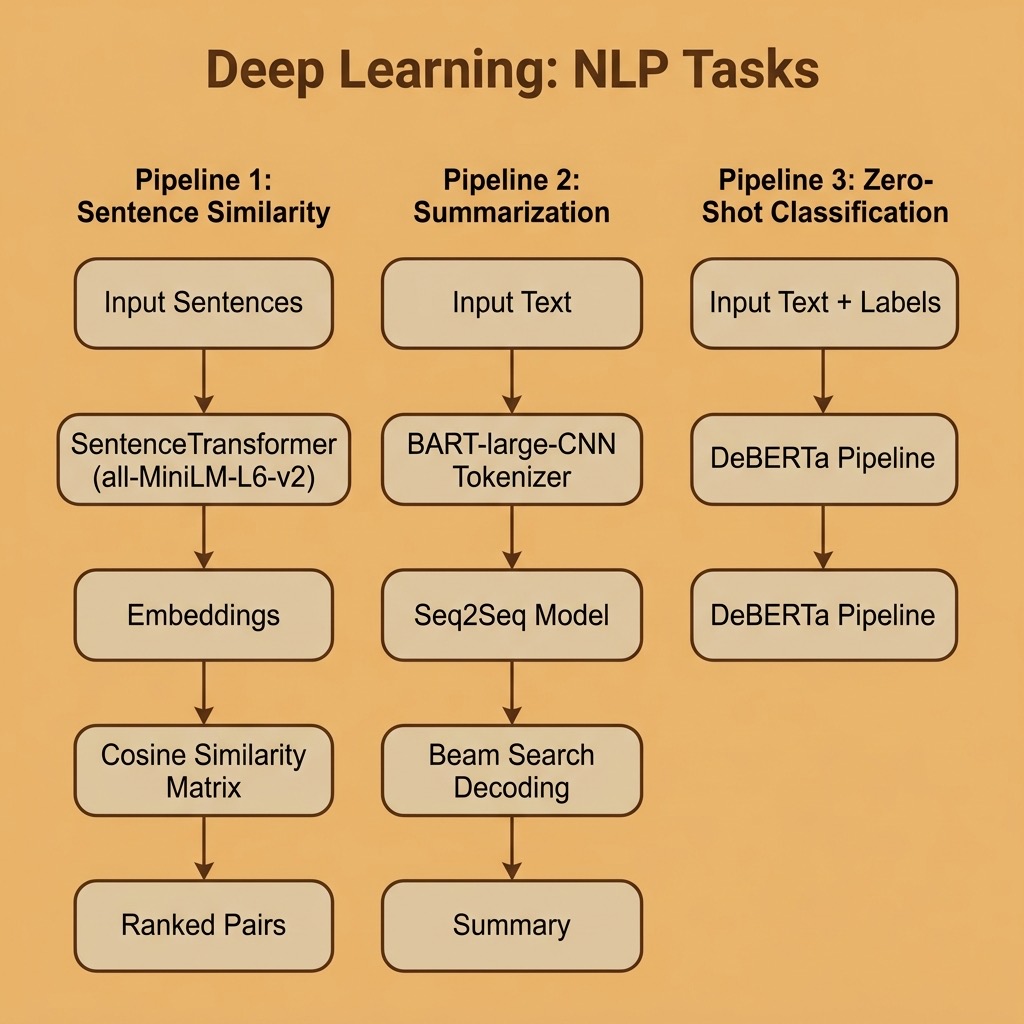

Natural Language Processing Using Deep Learning

I spent several years in the 1980s using symbolic AI approaches to Natural Language Processing (NLP) like augmented transition networks and conceptual dependency theory with mixed results. For small vocabularies and small domains of discourse these techniques yielded modestly successful results. I now only use Deep Learning approaches to NLP in my work.

Deep Learning in NLP is a branch of machine learning that utilizes deep neural networks to understand, interpret and generate human language. It has revolutionized the field of NLP by improving the accuracy of various NLP tasks such as text classification, language translation, sentiment analysis, and natural language generation (e.g., ChatGPT).

Deep learning models such as Recurrent Neural Networks (RNNs), Convolutional Neural Networks (CNNs), and Transformer models have been used to achieve state-of-the-art performance on various NLP tasks. These models have been trained on large amounts of text data, which has allowed them to learn complex patterns in human language and improve their understanding of the context and meaning of words.

The use of pre-trained models, such as BERT and GPT-4, has also become popular in NLP and I use both frequently for my work. These models have been pre-trained on a large corpus of text data, and can be fine-tuned for a specific task, which significantly reduces the amount of data and computing resources required to train a derived model.

Deep learning in NLP has been applied in various industries such as chatbots, automated customer service, and language translation services. It has also been used in research areas such as natural language understanding, question answering, and text summarization.

In the last decade deep learning techniques have solved most NLP problems, at least in a “good enough” engineering sense. In this chapter we will experiment with a few useful pre-trained models that you can run locally on your laptop using PyTorch.

Hugging Face and the Transformers Library

Hugging Face provides an extensive library of pre-trained models and the transformers Python library that allows developers to quickly integrate NLP capabilities into their applications. The pre-trained models are based on state-of-the-art transformer architectures, trained on large corpora of data. Hugging Face maintains a task page listing all kinds of machine learning that they support at https://huggingface.co/tasks for task domains:

- Computer Vision

- Natural Language Processing

- Audio

- Tabular Data

- Multimodal

- Reinforcement Learning

As an open source and open model company, Hugging Face is a leading provider of NLP technology. They have developed a library of pre-trained models, including models based on transformer architectures such as BERT, DeBERTa, and GPT variants, which can be fine-tuned for various tasks such as language understanding, language translation, and text generation.

The requirements for the examples in this chapter are:

1 uv pip install torch transformers sentence-transformers

The examples for this chapter are in the directory source-code/deep_learning_nlp.

All models will be downloaded automatically to ~/.cache/huggingface the first time you run each script. Subsequent runs will use the cached models without re-downloading.

Summarizing Text Using a Pre-trained Model on Your Laptop

We use the facebook/bart-large-cnn model for text summarization. This model runs locally on your laptop using PyTorch — no API keys or cloud services needed. The downloaded model and associated files are a little less than two gigabytes of data.

In the current version of the transformers library (v5+), we load the model and tokenizer explicitly using AutoModelForSeq2SeqLM and AutoTokenizer:

1 from transformers import AutoTokenizer, AutoModelForSeq2SeqLM

2

3 model_name = "facebook/bart-large-cnn"

4 tokenizer = AutoTokenizer.from_pretrained(model_name)

5 model = AutoModelForSeq2SeqLM.from_pretrained(model_name)

6

7 text = (

8 "The President sent a request for changing the debt ceiling to "

9 "Congress. The president might call a press conference. The Congress "

10 "was not oblivious of what the Supreme Court's majority had ruled on "

11 "budget matters. Even four Justices had found nothing to criticize in "

12 "the President's requirement that the Federal Government's four-year "

13 "spending plan. It is unclear whether or not the President and "

14 "Congress can come to an agreement before Congress recesses for a "

15 "holiday. There is major disagreement between the Democratic and "

16 "Republican parties on spending."

17 )

18

19 inputs = tokenizer(text, return_tensors="pt", max_length=1024, truncation=True)

20 summary_ids = model.generate(

21 **inputs, max_length=60, num_beams=4, early_stopping=True

22 )

23 summary = tokenizer.decode(summary_ids[0], skip_special_tokens=True)

24 print(summary)

Here is the output from running summarization.py:

1 $ python summarization.py

2 Loading summarization model (facebook/bart-large-cnn)...

3

4 Original text (87 words):

5 The President sent a request for changing the debt ceiling to Congress...

6

7 Summary:

8 The President sent a request for changing the debt ceiling to

9 Congress. The Congress was not oblivious of what the Supreme

10 Court's majority had ruled on budget matters. Even four Justices

11 had found nothing to criticize in the President's requirement

12 that the Federal Government's four-year spending plan be changed

Note: for production summarization work with longer documents, consider using larger instruction-tuned models (e.g., Llama 3 or Phi-4) that support context windows of 128K+ tokens. The BART model used here is limited to 1,024 tokens of input but is excellent for learning and prototyping.

Zero Shot Classification Using a Local Model

Zero shot classification models work by specifying which classification labels you want to assign to input texts — no pre-training on labeled examples is required. We use the MoritzLaurer/deberta-v3-base-zeroshot-v2.0 model, which is a modern and efficient replacement for the older bart-large-mnli model:

1 from pprint import pprint

2 from transformers import pipeline

3

4 classifier = pipeline(

5 "zero-shot-classification",

6 model="MoritzLaurer/deberta-v3-base-zeroshot-v2.0",

7 )

8

9 text = (

10 "Hi, I recently bought a device from your company but it is not "

11 "working as advertised and I would like to get reimbursed!"

12 )

13 candidate_labels = ["refund", "faq", "legal"]

14

15 result = classifier(text, candidate_labels)

16 pprint(result)

Here is the output from running zero_shot_classification.py:

1 $ python zero_shot_classification.py

2 Loading zero-shot classification model...

3

4 Input text: Hi, I recently bought a device from your company but

5 it is not working as advertised and I would like to get reimbursed!

6 Candidate labels: ['refund', 'faq', 'legal']

7

8 {'labels': ['refund', 'legal', 'faq'],

9 'scores': [0.9839024543762207, 0.01550459023565054, 0.000593014934565872],

10 'sequence': 'Hi, I recently bought a device from your company but it is not '

11 'working as advertised and I would like to get reimbursed!'}

The model correctly classifies the customer message as a “refund” request with 98.4% confidence. This approach is powerful because you can define any set of labels at runtime without training a custom model.

Comparing Sentences for Similarity Using Transformer Models

The sentence-transformers library provides an easy-to-use interface for computing sentence embeddings using PyTorch. We use the all-MiniLM-L6-v2 model which is small (80 MB) and fast while producing high-quality embeddings:

1 from sentence_transformers import SentenceTransformer, util

2

3 model = SentenceTransformer("all-MiniLM-L6-v2")

4

5 sentences = [

6 "The IRS has new tax laws.",

7 "Congress debating the economy.",

8 "The politician fled to South America.",

9 "Canada and the US will be in the playoffs.",

10 "The cat ran up the tree.",

11 "The meal tasted good but was expensive and perhaps not worth the price.",

12 ]

13

14 # Encode all sentences

15 embeddings = model.encode(sentences)

16

17 # Compute cosine similarities between all pairs

18 cos_sim = util.cos_sim(embeddings, embeddings)

19

20 # Collect and sort all unique pairs

21 pairs = []

22 for i in range(len(cos_sim) - 1):

23 for j in range(i + 1, len(cos_sim)):

24 pairs.append((cos_sim[i][j].item(), i, j))

25

26 pairs.sort(key=lambda x: x[0], reverse=True)

27

28 print("Top-8 most similar pairs:")

29 for score, i, j in pairs[:8]:

30 print(f" {score:.4f} {sentences[i]}")

31 print(f" {sentences[j]}")

Output from running sentence_similarity.py:

1 $ python sentence_similarity.py

2 Loading sentence-transformers model (all-MiniLM-L6-v2)...

3

4 Top-8 most similar pairs:

5 0.1793 The IRS has new tax laws.

6 Congress debating the economy.

7

8 0.1210 Congress debating the economy.

9 Canada and the US will be in the playoffs.

10

11 0.1131 Congress debating the economy.

12 The meal tasted good but was expensive and perhaps not worth the price.

13

14 0.1061 Congress debating the economy.

15 The politician fled to South America.

16

17 0.0901 The politician fled to South America.

18 The meal tasted good but was expensive and perhaps not worth the price.

19

20 0.0677 The politician fled to South America.

21 The cat ran up the tree.

22

23 0.0432 The cat ran up the tree.

24 The meal tasted good but was expensive and perhaps not worth the price.

25

26 0.0388 Congress debating the economy.

27 The cat ran up the tree.

The results make intuitive sense: sentences about government and economics cluster together, while unrelated sentences about cats and meals have very low similarity scores.

A common use case is a customer service chatbot where we match the user’s question with all recorded questions that have accepted “canned answers.” The runtime to get the best match is O(N) where N is the number of previously recorded user questions. The cosine similarity calculation, given two embedding vectors, is very fast.

For a practical application you can use the cos_sim function from the sentence-transformers utility library:

1 >>> from sentence_transformers import util

2 >>> util.cos_sim(embeddings[0], embeddings[1])

3 tensor([[0.1793]])

Deep Learning Natural Language Processing Wrap-up

In this chapter we have seen examples of how effective deep learning is for NLP using PyTorch and the Hugging Face transformers ecosystem. All three examples — summarization, zero-shot classification, and sentence similarity — run locally on your laptop without requiring API keys or cloud services.

I worked on other methods of NLP over a 25-year period and I ask you, dear reader, to take my word on this: deep learning has revolutionized NLP and for almost all practical NLP applications, deep learning libraries and pre-trained models from organizations like Hugging Face should be the first thing that you consider using.