Part 2: Object-Oriented World

Most of the examples in the previous part were about a single object that did not have dependencies on other objects (with an exception of some values – strings, integers, enums, etc.). This is not how most OO systems are built. In this part, we are finally going to look at scenarios where multiple objects work together as a system.

This brings about some issues that need to be discussed. One of them is the approach to object-oriented design and how it influences the tools we use to test-drive our code. You probably heard something about a tool called mock objects (at least from one of the introductory chapters of this book) or, in a broader sense, test doubles. If you open your web browser and type “mock objects break encapsulation”, you will find a lot of different opinions – some saying that mocks are great, others blaming them for all the evil in the world, and a lot of opinions that fall in between. The discussions are still heated, even though mocks were introduced more than ten years ago. My goal in this chapter is to outline the context and forces that lead to the adoption of mocks and how to use them for your benefit, not failure.

Steve Freeman, one of the godfathers of using mock objects with TDD, wrote: “mocks arise naturally from doing responsibility-driven OO. All these mock/not-mock arguments are completely missing the point. If you’re not writing that kind of code, people, please don’t give me a hard time”. I am going to introduce mocks in a way that will not give Steve a hard time, I hope.

To do this, I need to cover some topics of object-oriented design. In fact, I decided to dedicate the entire part 2 solely for that purpose. Thus, this chapter will be about object-oriented techniques, practices, and qualities you need to know to use TDD effectively in the object-oriented world. The key quality that we’ll focus on is objects’ composability.

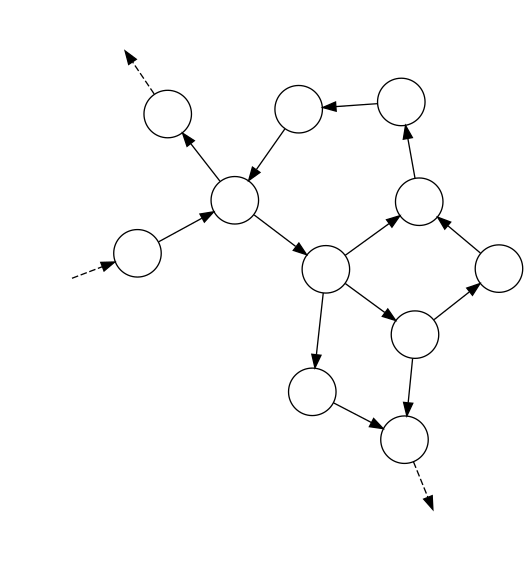

After reading part 2, you will understand an opinionated approach to object-oriented design that is based on the idea of the object-oriented system being a web of nodes (objects) that pass messages to each other. This will give us a good starting point for introducing mock objects and mock-based TDD in part 3.

On Object Composability

In this chapter, I will try to outline briefly why object composability is a goal worth achieving and how it can be achieved. I am going to start with an example of an unmaintainable code and will gradually fix its flaws in the next chapters. For now, we are going to fix just one of the flaws, so the code we will end up will not be perfect by any means, still, it will be better by one quality.

In the coming chapters, we will learn more valuable lessons resulting from changing this little piece of code.

Another task for Johnny and Benjamin

Remember Johnny and Benjamin? Looks like they managed their previous task and are up to something else. Let’s listen to their conversation as they are working on another project…

Benjamin: So, what’s this assignment about?

Johnny: Actually, it’s nothing exciting – we’ll have to add two features to a legacy application that’s not prepared for the changes.

Benjamin: What is the code for?

Johnny: It is a C# class that implements company policies. As the company has just started using this automated system and it was started recently, there is only one policy implemented: yearly incentive plan. Many corporations have what they call incentive plans. These plans are used to promote good behaviors and exceeding expectations by employees of a company.

Benjamin: You mean, the project has just started and is already in a bad shape?

Johnny: Yep. The guys writing it wanted to “keep it simple”, whatever that means, and now it looks pretty bad.

Benjamin: I see…

Johnny: By the way, do you like riddles?

Benjamin: Always!

Johnny: So here’s one: how do you call a development phase when you ensure high code quality?

Benjamin: … … No clue… So what is it called?

Johnny: It’s called “now”.

Benjamin: Oh!

Johnny: Getting back to the topic, here’s the company incentive plan.

Every employee has a pay grade. An employee can be promoted to a higher pay grade, but the mechanics of how that works is something we will not need to deal with.

Normally, every year, everyone gets a raise of 10%. But to encourage behaviors that give an employee a higher pay grade, such an employee cannot get raises indefinitely on a given pay grade. Each grade has its associated maximum pay. If this amount of money is reached, an employee does not get a raise anymore until they reach a higher pay grade.

Additionally, every employee on their 5th anniversary of working for the company gets a special, one-time bonus which is twice their current payment.

Benjamin: Looks like the source code repository just finished synchronizing. Let’s take a bite at the code!

Johnny: Sure, here you go:

1 public class CompanyPolicies : IDisposable

2 {

3 readonly SqlRepository _repository

4 = new SqlRepository();

5

6 public void ApplyYearlyIncentivePlan()

7 {

8 var employees = _repository.CurrentEmployees();

9

10 foreach(var employee in employees)

11 {

12 var payGrade = employee.GetPayGrade();

13 //evaluate raise

14 if(employee.GetSalary() < payGrade.Maximum)

15 {

16 var newSalary

17 = employee.GetSalary()

18 + employee.GetSalary()

19 * 0.1;

20 employee.SetSalary(newSalary);

21 }

22

23 //evaluate one-time bonus

24 if(employee.GetYearsOfService() == 5)

25 {

26 var oneTimeBonus = employee.GetSalary() * 2;

27 employee.SetBonusForYear(2014, oneTimeBonus);

28 }

29

30 employee.Save();

31 }

32 }

33

34 public void Dispose()

35 {

36 _repository.Dispose();

37 }

38 }

Benjamin: Wow, there is a lot of literal constants all around and functional decomposition is barely done!

Johnny: Yeah. We won’t be fixing all of that today. Still, we will follow the boy scout rule and “leave the campground cleaner than we found it”.

Benjamin: What’s our assignment?

Johnny: First of all, we need to provide our users with a choice between an SQL database and a NoSQL one. To achieve our goal, we need to be somehow able to make the CompanyPolicies class database type-agnostic. For now, as you can see, the implementation is coupled to the specific SqlRepository, because it creates a specific instance itself:

1 public class CompanyPolicies : IDisposable

2 {

3 readonly SqlRepository _repository

4 = new SqlRepository();

Now, we need to evaluate the options we have to pick the best one. What options do you see, Benjamin?

Benjamin: Well, we could certainly extract an interface from SqlRepository and introduce an if statement to the constructor like this:

1 public class CompanyPolicies : IDisposable

2 {

3 readonly Repository _repository;

4

5 public CompanyPolicies()

6 {

7 if(...)

8 {

9 _repository = new SqlRepository();

10 }

11 else

12 {

13 _repository = new NoSqlRepository();

14 }

15 }

Johnny: True, but this option has few deficiencies. First of all, remember we’re trying to follow the boy scout rule and by using this option we introduce more complexity to the CommonPolicies class. Also, let’s say tomorrow someone writes another class for, say, reporting and this class will also need to access the repository – they will need to make the same decision on repositories in their code as we do in ours. This effectively means duplicating code. Thus, I’d rather evaluate further options and check if we can come up with something better. What’s our next option?

Benjamin: Another option would be to change the SqlRepository itself to be just a wrapper around the actual database access, like this:

1 public class SqlRepository : IDisposable

2 {

3 readonly Repository _repository;

4

5 public SqlRepository()

6 {

7 if(...)

8 {

9 _repository = new RealSqlRepository();

10 }

11 else

12 {

13 _repository = new RealNoSqlRepository();

14 }

15 }

16

17 IList<Employee> CurrentEmployees()

18 {

19 return _repository.CurrentEmployees();

20 }

Johnny: Sure, this is an approach that could work and would be worth considering for very serious legacy code, as it does not force us to change the CompanyPolicies class at all. However, there are some issues with it. First of all, the SqlRepository name would be misleading. Second, look at the CurrentEmployees() method – all it does is delegating a call to the implementation chosen in the constructor. With every new method required of the repository, we’ll need to add new delegating methods. In reality, it isn’t such a big deal, but maybe we can do better than that?

Benjamin: Let me think, let me think… I evaluated the option where CompanyPolicies class chooses between repositories. I also evaluated the option where we hack the SqlRepository to makes this choice. The last option I can think of is leaving this choice to another, “3rd party” code, that would choose the repository to use and pass it to the CompanyPolicies via its constructor, like this:

1 public class CompanyPolicies : IDisposable

2 {

3 private readonly Repository _repository;

4

5 public CompanyPolicies(Repository repository)

6 {

7 _repository = repository;

8 }

This way, the CompanyPolicies won’t know what exactly is passed to it via its constructor and we can pass whatever we like – either an SQL repository or a NoSQL one!

Johnny: Great! This is the option we’re looking for! For now, just believe me that this approach will lead us to many good things – you’ll see why later.

Benjamin: OK, so let me just pull the SqlRepository instance outside the CompanyPolicies class and make it an implementation of Repository interface, then create a constructor and pass the real instance through it…

Johnny: Sure, I’ll go get some coffee.

… 10 minutes later

Benjamin: Haha! Look at this! I am SUPREME!

1 public class CompanyPolicies : IDisposable

2 {

3 //_repository is now an interface

4 readonly Repository _repository;

5

6 // repository is passed from outside.

7 // We don't know what exact implementation it is.

8 public CompanyPolicies(Repository repository)

9 {

10 _repository = repository;

11 }

12

13 public void ApplyYearlyIncentivePlan()

14 {

15 //... body of the method. Unchanged.

16 }

17

18 public void Dispose()

19 {

20 _repository.Dispose();

21 }

22 }

Johnny: Hey, hey, hold your horses! There is one thing wrong with this code.

Benjamin: Huh? I thought this is what we were aiming at.

Johnny: Yes, except the Dispose() method. Look closely at the CompanyPolicies class. it is changed so that it is not responsible for creating a repository for itself, but it still disposes of it. This is could cause problems because CompanyPolicies instance does not have any right to assume it is the only object that is using the repository. If so, then it cannot determine the moment when the repository becomes unnecessary and can be safely disposed of.

Benjamin: Ok, I get the theory, but why is this bad in practice? Can you give me an example?

Johnny: Sure, let me sketch a quick example. As soon as you have two instances of CompanyPolicies class, both sharing the same instance of Repository, you’re cooked. This is because one instance of CompanyPolicies may dispose of the repository while the other one may still want to use it.

Benjamin: So who is going to dispose of the repository?

Johnny: The same part of the code that creates it, for example, the Main method. Let me show you an example of how this may look like:

1 public static void Main(string[] args)

2 {

3 using(var repo = new SqlRepository())

4 {

5 var policies = new CompanyPolicies(repo);

6

7 //use above created policies

8 //for anything you like

9 }

10 }

This way the repository is created at the start of the program and disposed of at the end. Thanks to this, the CompanyPolicies has no disposable fields and it does not have to be disposable itself – we can just delete the Dispose() method:

1 //not implementing IDisposable anymore:

2 public class CompanyPolicies

3 {

4 //_repository is now an interface

5 readonly Repository _repository;

6

7 //New constructor

8 public CompanyPolicies(Repository repository)

9 {

10 _repository = repository;

11 }

12

13 public void ApplyYearlyIncentivePlan()

14 {

15 //... body of the method. No changes

16 }

17

18 //no Dispose() method anymore

19 }

Benjamin: Cool. So, what now? Seems we have the CompanyPolicies class depending on a repository abstraction instead of an actual implementation, like SQL repository. I guess we will be able to make another class implementing the interface for NoSQL data access and just pass it through the constructor instead of the original one.

Johnny: Yes. For example, look at CompanyPolicies component. We can compose it with a repository like this:

1 var policies

2 = new CompanyPolicies(new SqlRepository());

or like this:

1 var policies

2 = new CompanyPolicies(new NoSqlRepository());

without changing the code of CompanyPolicies. This means that CompanyPolicies does not need to know what Repository exactly it is composed with, as long as this Repository follows the required interface and meets expectations of CompanyPolicies (e.g. does not throw exceptions when it is not supposed to do so). An implementation of Repository may be itself a very complex and composed of another set of classes, for example, something like this:

1 new SqlRepository(

2 new ConnectionString("..."),

3 new AccessPrivileges(

4 new Role("Admin"),

5 new Role("Auditor")

6 ),

7 new InMemoryCache()

8 );

but the CompanyPolicies neither knows or cares about this, as long as it can use our new Repository implementation just like other repositories.

Benjamin: I see… So, getting back to our task, shall we proceed with making a NoSQL implementation of the Repository interface?

Johnny: First, show me the interface that you extracted while I was looking for the coffee.

Benjamin: Here:

1 public interface Repository

2 {

3 IList<Employee> CurrentEmployees();

4 }

Johnny: Ok, so what we need is to create just another implementation and pass it through the constructor depending on what data source is chosen and we’re finished with this part of the task.

Benjamin: You mean there’s more?

Johnny: Yeah, but that’s something for tomorrow. I’m exhausted today.

A Quick Retrospective

In this chapter, Benjamin learned to appreciate composability of an object, i.e. the ability to replace its dependencies, providing different behaviors, without the need to change the code of the object class itself. Thus, an object, given replaced dependencies, starts using the new behaviors without noticing that any change occurred at all.

The code mentioned has some serious flaws. For now, Johnny and Benjamin did not encounter a desperate need to address them.

Also, after we part again with Johnny and Benjamin, we are going to reiterate the ideas they stumble upon in a more disciplined manner.

Telling, not asking

In this chapter, we’ll get back to Johnny and Benjamin as they introduce another change in the code they are working on. In the process, they discover the impact that return values and getters have on the composability of objects.

Contractors

Johnny: G’morning. Ready for another task?

Benjamin: Of course! What’s next?

Johnny: Remember the code we worked on yesterday? It contains a policy for regular employees of the company. But the company wants to start hiring contractors as well and needs to include a policy for them in the application.

Benjamin: So this is what we will be doing today?

Johnny: That’s right. The policy is going to be different for contractors. While, just as regular employees, they will be receiving raises and bonuses, the rules will be different. I made a small table to allow comparing what we have for regular employees and what we want to add for contractors:

| Employee Type | Raise | Bonus |

|---|---|---|

| Regular Employee | +10% of current salary if not reached a maximum on a given pay grade | +200% of current salary one time after five years |

| Contractor | +5% of average salary calculated for last 3 years of service (or all previous years of service if they have worked for less than 3 years | +10% of current salary when a contractor receives score more than 100 for the previous year |

So while the workflow is going to be the same for both a regular employee and a contractor:

- Load from repository

- Evaluate raise

- Evaluate bonus

- Save

the implementation of some of the steps will be different for each type of employee.

Benjamin: Correct me if I am wrong, but these “load” and “save” steps do not look like they belong with the remaining two – they describe something technical, while the other steps describe something strictly related to how the company operates…

Johnny: Good catch, however, this is something we’ll deal with later. Remember the boy scout rule – just don’t make it worse. Still, we’re going to fix some of the design flaws today.

Benjamin: Aww… I’d just fix all of it right away.

Johnny: Ha ha, patience, Luke. For now, let’s look at the code we have now before we plan further steps.

Benjamin: Let me just open my IDE… OK, here it is:

1 public class CompanyPolicies

2 {

3 readonly Repository _repository;

4

5 public CompanyPolicies(Repository repository)

6 {

7 _repository = repository;

8 }

9

10 public void ApplyYearlyIncentivePlan()

11 {

12 var employees = _repository.CurrentEmployees();

13

14 foreach(var employee in employees)

15 {

16 var payGrade = employee.GetPayGrade();

17

18 //evaluate raise

19 if(employee.GetSalary() < payGrade.Maximum)

20 {

21 var newSalary

22 = employee.GetSalary()

23 + employee.GetSalary()

24 * 0.1;

25 employee.SetSalary(newSalary);

26 }

27

28 //evaluate one-time bonus

29 if(employee.GetYearsOfService() == 5)

30 {

31 var oneTimeBonus = employee.GetSalary() * 2;

32 employee.SetBonusForYear(2014, oneTimeBonus);

33 }

34

35 employee.Save();

36 }

37 }

38 }

Benjamin: Look, Johnny, the class, in fact, contains all the four steps you mentioned, but they are not named explicitly – instead, their internal implementation for regular employees is just inserted in here. How are we supposed to add the variation of the employee type?

Johnny: Time to consider our options. We have a few of them. Well?

Benjamin: For now, I can see two. The first one would be to create another class similar to CompanyPolicies, called something like CompanyPoliciesForContractors and implement the new logic there. This would let us leave the original class as is, but we would have to change the places that use CompanyPolicies to use both classes and choose which one to use somehow. Also, we would have to add a separate method to the repository for retrieving the contractors.

Johnny: Also, we would miss our chance to communicate through the code that the sequence of steps is intentionally similar in both cases. Others who read this code in the future will see that the implementation for regular employees follows the steps: load, evaluate raise, evaluate bonus, save. When they look at the implementation for contractors, they will see the same order of steps, but they will be unable to tell whether the similarity is intentional, or a pure accident.

Benjamin: So our second option is to put an if statement into the differing steps inside the CompanyPolicies class, to distinguish between regular employees and contractors. The Employee class would have an isContractor() method and depending on what it would return, we would either execute the logic for regular employees or contractors. Assuming that the current structure of the code looks like this:

1 foreach(var employee in employees)

2 {

3 //evaluate raise

4 ...

5

6 //evaluate one-time bonus

7 ...

8

9 //save employee

10 }

the new structure would look like this:

1 foreach(var employee in employees)

2 {

3 if(employee.IsContractor())

4 {

5 //evaluate raise for contractor

6 ...

7 }

8 else

9 {

10 //evaluate raise for regular

11 ...

12 }

13

14 if(employee.IsContractor())

15 {

16 //evaluate one-time bonus for contractor

17 ...

18 }

19 else

20 {

21 //evaluate one-time bonus for regular

22 ...

23 }

24

25 //save employee

26 ...

27 }

this way we would show that the steps are the same, but the implementation is different. Also, this would mostly require us to add code and not move the existing code around.

Johnny: The downside is that we would make the class even uglier than it was when we started. So despite initial easiness, we’ll be doing a huge disservice to future maintainers. We have at least one another option. What would that be?

Benjamin: Let’s see… we could move all the details concerning the implementation of the steps from CompanyPolicies class into the Employee class itself, leaving only the names and the order of steps in CompanyPolicies:

1 foreach(var employee in employees)

2 {

3 employee.EvaluateRaise();

4 employee.EvaluateOneTimeBonus();

5 employee.Save();

6 }

Then, we could change the Employee into an interface, so that it could be either a RegularEmployee or ContractorEmployee – both classes would have different implementations of the steps, but the CompanyPolicies would not notice, since it would not be coupled to the implementation of the steps anymore – just the names and the order.

Johnny: This solution would have one downside – we would need to significantly change the current code, but you know what? I’m willing to do it, especially that I was told today that the logic is covered by some tests which we can run to see if a regression was introduced.

Benjamin: Cool, what do we start with?

Johnny: The first thing that is between us and our goal are these getters on the Employee class:

1 GetSalary();

2 GetGrade();

3 GetYearsOfService();

They just expose too much information specific to the regular employees. It would be impossible to use different implementations when these are around. These setters don’t help much:

1 SetSalary(newSalary);

2 SetBonusForYear(year, amount);

While these are not as bad, we’d better give ourselves more flexibility. Thus, let’s hide all of it behind more abstract methods that only reveal our intention.

First, take a look at this code:

1 //evaluate raise

2 if(employee.GetSalary() < payGrade.Maximum)

3 {

4 var newSalary

5 = employee.GetSalary()

6 + employee.GetSalary()

7 * 0.1;

8 employee.SetSalary(newSalary);

9 }

Each time you see a block of code separated from the rest with blank lines and starting with a comment, you see something screaming “I want to be a separate method that contains this code and has a name after the comment!”. Let’s grant this wish and make it a separate method on the Employee class.

Benjamin: Ok, wait a minute… here:

1 employee.EvaluateRaise();

Johnny: Great! Now, we’ve got another example of this species here:

1 //evaluate one-time bonus

2 if(employee.GetYearsOfService() == 5)

3 {

4 var oneTimeBonus = employee.GetSalary() * 2;

5 employee.SetBonusForYear(2014, oneTimeBonus);

6 }

Benjamin: This one should be even easier… Ok, take a look:

1 employee.EvaluateOneTimeBonus();

Johnny: Almost good. I’d only leave out the information that the bonus is one-time from the name.

Benjamin: Why? Don’t we want to include what happens in the method name?

Johnny: Actually, no. What we want to include is our intention. The bonus being one-time is something specific to the regular employees and we want to abstract away the details about this or that kind of employee, so that we can plug in different implementations without making the method name lie. The names should reflect that we want to evaluate a bonus, whatever that means for a particular type of employee. Thus, let’s make it:

1 employee.EvaluateBonus();

Benjamin: Ok, I get it. No problem.

Johnny: Now let’s take a look at the full code of the EvaluateIncentivePlan method to see whether it is still coupled to details specific to regular employees. Here’s the code:

1 public void ApplyYearlyIncentivePlan()

2 {

3 var employees = _repository.CurrentEmployees();

4

5 foreach(var employee in employees)

6 {

7 employee.EvaluateRaise();

8 employee.EvaluateBonus();

9 employee.Save();

10 }

11 }

Benjamin: It seems that there is no coupling to the details about regular employees anymore. Thus, we can safely make the repository return a combination of regulars and contractors without this code noticing anything. Now I think I understand what you were trying to achieve. If we make interactions between objects happen on a more abstract level, then we can put in different implementations with less effort.

Johnny: True. Can you see another thing related to the lack of return values on all of the Employee’s methods in the current implementation?

Benjamin: Not really. Does it matter?

Johnny: Well, if Employee methods had return values and this code depended on them, all subclasses of Employee would be forced to supply return values as well and these return values would need to match the expectations of the code that calls these methods, whatever these expectations were. This would make introducing other kinds of employees harder. But now that there are no return values, we can, for example:

- introduce a

TemporaryEmployeethat has no raises, by leaving itsEvaluateRaise()method empty, and the code that uses employees will not notice. - introduce a

ProbationEmployeethat has no bonus policy, by leaving itsEvaluateBonus()method empty, and the code that uses employees will not notice. - introduce an

InMemoryEmployeethat has an emptySave()method, and the code that uses employees will not notice.

As you see, by asking the objects less, and telling it more, we get greater flexibility to create alternative implementations and the composability, which we talked about yesterday, increases!

Benjamin: I see… So telling objects what to do instead of asking them for their data makes the interactions between objects more abstract, and so, more stable, increasing composability of interacting objects. This is a valuable lesson – it is the first time I hear this and it seems a pretty powerful concept.

A Quick Retrospective

In this chapter, Benjamin learned that the composability of an object (not to mention clarity) is reinforced when interactions between it and its peers are: abstract, logical and stable. Also, he discovered, with Johnny’s help, that it is further strengthened by following a design style where objects are told what to do instead of asked to give away information to somebody who then decides on their behalf. This is because if an API of an abstraction is built around answering specific questions, the clients of the abstraction tend to ask it a lot of questions and are coupled to both those questions and some aspects of the answers (i.e. what is in the return values). This makes creating another implementation of abstraction harder, because each new implementation of the abstraction needs to not only provide answers to all those questions, but the answers are constrained to what the client expects. When abstraction is merely told what its client wants it to achieve, the clients are decoupled from most of the details of how this happens. This makes introducing new implementations of abstraction easier – it often even lets us define implementations with all methods empty without the client noticing at all.

These are all important conclusions that will lead us towards TDD with mock objects.

Time to leave Johnny and Benjamin for now. In the next chapter, I’m going to reiterate their discoveries and put them in a broader context.

The need for mock objects

We already experienced mock objects in the chapter about tools, although at that point, I gave you an oversimplified and deceiving explanation of what a mock object is, promising that I will make up for it later. Now is the time.

Mock objects were made with a specific goal in mind. I hope that when you understand the real goal, you will probably understand the means to the goal far better.

In this chapter, we will explore the qualities of object-oriented design which make mock objects a viable tool.

Composability… again!

In the two previous chapters, we followed Johnny and Benjamin in discovering the benefits of and prerequisites for composability of objects. Composability is the number one quality of the design we’re after. After reading Johhny and Benjamin’s story, you might have some questions regarding composability. Hopefully, they are among the ones answered in the next few chapters. Ready?

Why do we need composability?

It might seem stupid to ask this question here – if you have managed to stay with me this long, then you’re probably motivated enough not to need a justification? Well, anyway, it’s still worth discussing it a little. Hopefully, you’ll learn as much reading this back-to-basics chapter as I did writing it.

Pre-object-oriented approaches

Back in the days of procedural programming1, when we wanted to execute different code based on some factor, it was usually achieved using an ‘if’ statement. For example, if our application was in need to be able to use different kinds of alarms, like a loud alarm (that plays a loud sound) and a silent alarm (that does not play any sound, but instead silently contacts the police) interchangeably, then usually, we could achieve this using a conditional like in the following function:

1 void triggerAlarm(Alarm* alarm)

2 {

3 if(alarm->kind == LOUD_ALARM)

4 {

5 playLoudSound(alarm);

6 }

7 else if(alarm->kind == SILENT_ALARM)

8 {

9 notifyPolice(alarm);

10 }

11 }

The code above makes a decision based on the alarm kind which is embedded in the alarm structure:

1 struct Alarm

2 {

3 int kind;

4 //other data

5 };

If the alarm kind is the loud one, it executes behavior associated with a loud alarm. If this is a silent alarm, the behavior for silent alarms is executed. This seems to work. Unfortunately, if we wanted to make a second decision based on the alarm kind (e.g. we needed to disable the alarm), we would need to query the alarm kind again. This would mean duplicating the conditional code, just with a different set of actions to perform, depending on what kind of alarm we were dealing with:

1 void disableAlarm(Alarm* alarm)

2 {

3 if(alarm->kind == LOUD_ALARM)

4 {

5 stopLoudSound(alarm);

6 }

7 else if(alarm->kind == SILENT_ALARM)

8 {

9 stopNotifyingPolice(alarm);

10 }

11 }

Do I have to say why this duplication is bad? Do I hear a “no”? My apologies then, but I’ll tell you anyway. The duplication means that every time a new kind of alarm is introduced, a developer has to remember to update both places that contain ‘if-else’ – the compiler will not force this. As you are probably aware, in the context of teams, where one developer picks up work that another left and where, from time to time, people leave to find another job, expecting someone to “remember” to update all the places where the logic is duplicated is asking for trouble.

So, we see that the duplication is bad, but can we do something about it? To answer this question, let’s take a look at the reason the duplication was introduced. And the reason is: We have two things we want to be able to do with our alarms: triggering and disabling. In other words, we have a set of questions we want to be able to ask an alarm. Each kind of alarm has a different way of answering these questions – resulting in having a set of “answers” specific to each alarm kind:

| Alarm Kind | Triggering | Disabling |

|---|---|---|

| Loud Alarm | playLoudSound() |

stopLoudSound() |

| Silent Alarm | notifyPolice() |

stopNotifyingPolice() |

So, at least conceptually, as soon as we know the alarm kind, we already know which set of behaviors (represented as a row in the above table) it needs. We could just decide the alarm kind once and associate the right set of behaviors with the data structure. Then, we would not have to query the alarm kind in several places as we did, but instead, we could say: “execute triggering behavior from the set of behaviors associated with this alarm, whatever it is”.

Unfortunately, procedural programming does not allow binding behavior with data easily. The whole paradigm of procedural programming is about separating behavior and data! Well, honestly, they had some answers to those concerns, but these answers were mostly awkward (for those of you that still remember C language: I’m talking about macros and function pointers). So, as data and behavior are separated, we need to query the data each time we want to pick a behavior based on it. That’s why we have duplication.

Object-oriented programming to the rescue!

On the other hand, object-oriented programming has for a long time made available two mechanisms that enable what we didn’t have in procedural languages:

- Classes – that allow binding behavior together with data.

- Polymorphism – allows executing behavior without knowing the exact class that holds them, but knowing only a set of behaviors that it supports. This knowledge is obtained by having an abstract type (interface or an abstract class) define this set of behaviors, with no real implementation. Then we can make other classes that provide their own implementation of the behaviors that are declared to be supported by the abstract type. Finally, we can use the instances of those classes where an instance of the abstract type is expected. In the case of statically-typed languages, this requires implementing an interface or inheriting from an abstract class.

So, in case of our alarms, we could make an interface with the following signature:

1 public interface Alarm

2 {

3 void Trigger();

4 void Disable();

5 }

and then make two classes: LoudAlarm and SilentAlarm, both implementing the Alarm interface. Example for LoudAlarm:

1 public class LoudAlarm : Alarm

2 {

3 public void Trigger()

4 {

5 //play very loud sound

6 }

7

8 public void Disable()

9 {

10 //stop playing the sound

11 }

12 }

Now, we can make parts of code use the alarm, but by knowing the interface only instead of the concrete classes. This makes the parts of the code that use alarm this way not having to check which alarm they are dealing with. Thus, what previously looked like this:

1 if(alarm->kind == LOUD_ALARM)

2 {

3 playLoudSound(alarm);

4 }

5 else if(alarm->kind == SILENT_ALARM)

6 {

7 notifyPolice(alarm);

8 }

becomes just:

1 alarm.Trigger();

where alarm is either LoudAlarm or SilentAlarm but seen polymorphically as Alarm, so there’s no need for ‘if-else’ anymore.

But hey, isn’t this cheating? Even provided I can execute the trigger behavior on an alarm without knowing the actual class of the alarm, I still have to decide which class it is in the place where I create the actual instance:

1 // we must know the exact type here:

2 alarm = new LoudAlarm();

so it looks like I am not eliminating the ‘else-if’ after all, just moving it somewhere else! This may be true (we will talk more about it in future chapters), but the good news is that I eliminated at least the duplication by making our dream of “picking the right set of behaviors to use with certain data once” come true.

Thanks to this, I create the alarm once, and then I can take it and pass it to ten, a hundred or a thousand different places where I will not have to determine the alarm kind anymore to use it correctly.

This allows writing a lot of classes that do not know about the real class of the alarm they are dealing with, yet they can use the alarm just fine only by knowing a common abstract type – Alarm. If we can do that, we arrive at a situation where we can add more alarms implementing Alarm and watch existing objects that are already using Alarm work with these new alarms without any change in their source code! There is one condition, however – the creation of the alarm instances must be moved out of the classes that use them. That’s because, as we already observed, to create an alarm using a new operator, we have to know the exact type of the alarm we are creating. So whoever creates an instance of LoudAlarm or SilentAlarm, loses its uniformity, since it is not able to depend solely on the Alarm interface.

The power of composition

Moving creation of alarm instances away from the classes that use those alarms brings up an interesting problem – if an object does not create the objects it uses, then who does it? A solution is to make some special places in the code that are only responsible for composing a system from context-independent objects2. We saw this already as Johnny was explaining composability to Benjamin. He used the following example:

1 new SqlRepository(

2 new ConnectionString("..."),

3 new AccessPrivileges(

4 new Role("Admin"),

5 new Role("Auditor")

6 ),

7 new InMemoryCache()

8 );

We can do the same with our alarms. Let’s say that we have a secure area that has three buildings with different alarm policies:

- Office building – the alarm should silently notify guards during the day (to keep office staff from panicking) and loud during the night, when guards are on patrol.

- Storage building – as it is quite far and the workers are few, we want to trigger loud and silent alarms at the same time.

- Guards building – as the guards are there, no need to notify them. However, a silent alarm should call the police for help instead, and a loud alarm is desired as well.

Note that besides just triggering a loud or silent alarm, we are required to support a combination (“loud and silent alarms at the same time”) and a conditional (“silent during the day and loud during the night”). we could just hardcode some fors and if-elses in our code, but instead, let’s factor out these two operations (combination and choice) into separate classes implementing the alarm interface.

Let’s call the class implementing the choice between two alarms DayNightSwitchedAlarm. Here is the source code:

1 public class DayNightSwitchedAlarm : Alarm

2 {

3 private readonly Alarm _dayAlarm;

4 private readonly Alarm _nightAlarm;

5

6 public DayNightSwitchedAlarm(

7 Alarm dayAlarm,

8 Alarm nightAlarm)

9 {

10 _dayAlarm = dayAlarm;

11 _nightAlarm = nightAlarm;

12 }

13

14 public void Trigger()

15 {

16 if(/* is day */)

17 {

18 _dayAlarm.Trigger();

19 }

20 else

21 {

22 _nightAlarm.Trigger();

23 }

24 }

25

26 public void Disable()

27 {

28 _dayAlarm.Disable();

29 _nightAlarm.Disable();

30 }

31 }

Studying the above code, it is apparent that this is not an alarm per se, e.g. it does not raise any sound or notification, but rather, it contains some rules on how to use other alarms. This is the same concept as power splitters in real life, which act as electric devices but do not do anything other than redirecting the electricity to other devices.

Next, let’s use the same approach and model the combination of two alarms as a class called HybridAlarm. Here is the source code:

1 public class HybridAlarm : Alarm

2 {

3 private readonly Alarm _alarm1;

4 private readonly Alarm _alarm2;

5

6 public HybridAlarm(

7 Alarm alarm1,

8 Alarm alarm2)

9 {

10 _alarm1 = alarm1;

11 _alarm2 = alarm2;

12 }

13

14 public void Trigger()

15 {

16 _alarm1.Trigger();

17 _alarm2.Trigger();

18 }

19

20 public void Disable()

21 {

22 _alarm1.Disable();

23 _alarm2.Disable();

24 }

25 }

Using these two classes along with already existing alarms, we can implement the requirements by composing instances of those classes like this:

1 new SecureArea(

2 new OfficeBuilding(

3 new DayNightSwitchedAlarm(

4 new SilentAlarm("222-333-444"),

5 new LoudAlarm()

6 )

7 ),

8 new StorageBuilding(

9 new HybridAlarm(

10 new SilentAlarm("222-333-444"),

11 new LoudAlarm()

12 )

13 ),

14 new GuardsBuilding(

15 new HybridAlarm(

16 new SilentAlarm("919"), //call police

17 new LoudAlarm()

18 )

19 )

20 );

Note that the fact that we implemented combination and choice of alarms as separate objects implementing the Alarm interface allows us to define new, interesting alarm behaviors using the parts we already have, but composing them together differently. For example, we might have, as in the above example:

1 new DayNightSwitchAlarm(

2 new SilentAlarm("222-333-444"),

3 new LoudAlarm());

which would mean triggering silent alarm during a day and loud one during the night. However, instead of this combination, we might use:

1 new DayNightSwitchAlarm(

2 new SilentAlarm("222-333-444"),

3 new HybridAlarm(

4 new SilentAlarm("919"),

5 new LoudAlarm()

6 )

7 )

Which would mean that we use a silent alarm to notify the guards during the day, but a combination of silent (notifying police) and loud during the night. Of course, we are not limited to combining a silent alarm with a loud one only. We can as well combine two silent ones:

1 new HybridAlarm(

2 new SilentAlarm("919"),

3 new SilentAlarm("222-333-444")

4 )

Additionally, if we suddenly decided that we do not want alarm at all during the day, we could use a special class called NoAlarm that would implement Alarm interface, but have both Trigger and Disable methods do nothing. The composition code would look like this:

1 new DayNightSwitchAlarm(

2 new NoAlarm(), // no alarm during the day

3 new HybridAlarm(

4 new SilentAlarm("919"),

5 new LoudAlarm()

6 )

7 )

And, last but not least, we could completely remove all alarms from the guards building using the following NoAlarm class (which is also an Alarm):

1 public class NoAlarm : Alarm

2 {

3 public void Trigger()

4 {

5 }

6

7 public void Disable()

8 {

9 }

10 }

and passing it as the alarm to guards building:

1 new GuardsBuilding(

2 new NoAlarm()

3 )

Noticed something funny about the last few examples? If not, here goes an explanation: in the last few examples, we have twisted the behaviors of our application in wacky ways, but all of this took place in the composition code! We did not have to modify any other existing classes! True, we had to write a new class called NoAlarm, but did not need to modify any code except the composition code to make objects of this new class work with objects of existing classes!

This ability to change the behavior of our application just by changing the way objects are composed together is extremely powerful (although you will always be able to achieve it only to certain extent), especially in evolutionary, incremental design, where we want to evolve some pieces of code with as little as possible other pieces of code having to realize that the evolution takes place. This ability can be achieved only if our system consists of composable objects, thus the need for composability – an answer to a question raised at the beginning of this chapter.

Summary – are you still with me?

We started with what seemed to be a repetition from a basic object-oriented programming course, using a basic example. It was necessary though to make a fluent transition to the benefits of composability we eventually introduced at the end. I hope you did not get overwhelmed and can understand now why I am putting so much stress on composability.

In the next chapter, we will take a closer look at composing objects itself.

Web, messages and protocols

In the previous chapter, we talked a little bit about why composability is valuable, now let’s flesh out a little bit of terminology to get more precise understanding.

So, again, what does it mean to compose objects?

Roughly, it means that an object has obtained a reference to another object and can invoke methods on it. By being composed together, two objects form a small system that can be expanded with more objects as needed. Thus, a bigger object-oriented system forms something similar to a web:

If we take the web metaphor a little bit further, we can note some similarities to e.g. a TCP/IP network:

- An object can send messages to other objects (i.e. call methods on them – arrows on the above diagram) via interfaces. Each message has a sender and at least one recipient.

- To send a message to a recipient, a sender has to acquire an address of the recipient, which, in the object-oriented world, we call a reference (and in languages such as C++, references are just that – addresses in memory).

- Communication between the sender and recipients has to follow a certain protocol. For example, the sender usually cannot invoke a method passing nulls as all arguments, or should expect an exception if it does so. Don’t worry if you don’t see the analogy now – I’ll follow up with more explanation of this topic later).

Alarms, again!

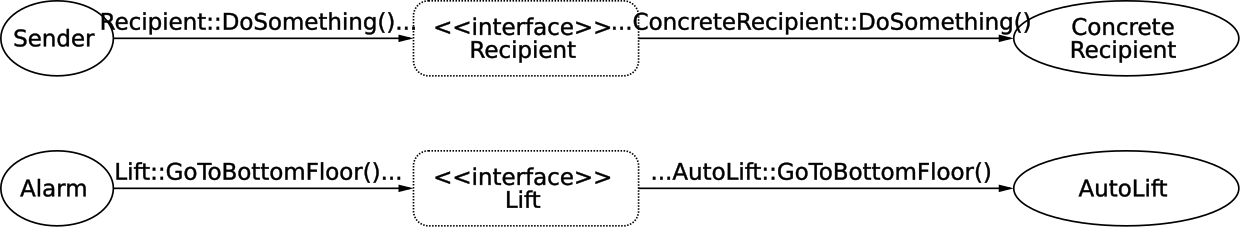

Let’s try to apply this terminology to an example. Imagine that we have an anti-fire alarm system in an office. When triggered, this alarm system makes all lifts go to the bottom floor, opens them and then disables each of them. Among others, the office contains automatic lifts, that contain their own remote control systems and mechanical lifts, that are controlled from the outside by a special custom-made mechanism.

Let’s try to model this behavior in code. As you might have guessed, we will have some objects like alarm, automatic lift, and mechanical lift. The alarm will control the lifts when triggered.

Firstly, we don’t want the alarm to have to distinguish between an automatic and a mechanical lift – this would only add complexity to the alarm system, especially that there are plans to add a third kind of lift – a more modern one – in the future. So, if we made the alarm aware of the different kinds of lifts, we would have to modify it each time a new kind of lift is introduced. Thus, we need a special interface (let’s call it Lift) to communicate with both AutoLift and MechanicalLift (and ModernLift in the future). Through this interface, an alarm will be able to send messages to both types of lifts without having to know the difference between them.

1 public interface Lift

2 {

3 ...

4 }

5

6 public class AutoLift : Lift

7 {

8 ...

9 }

10

11 public class MechanicalLift : Lift

12 {

13 ...

14 }

Next, to be able to communicate with specific lifts through the Lift interface, an alarm object has to acquire “addresses” of the lift objects (i.e. references to them). We can pass these references e.g. through a constructor:

1 public class Alarm

2 {

3 private readonly IEnumerable<Lift> _lifts;

4

5 //obtain "addresses" here

6 public Alarm(IEnumerable<Lift> lifts)

7 {

8 //store the "addresses" for later use

9 _lifts = lifts;

10 }

11 }

Then, the alarm can send three kinds of messages: GoToBottomFloor(), OpenDoor(), and DisablePower() to any of the lifts through the Lift interface:

1 public interface Lift

2 {

3 void GoToBottomFloor();

4 void OpenDoor();

5 void DisablePower();

6 }

and, as a matter of fact, it sends all these messages when triggered. The Trigger() method on the alarm looks like this:

1 public void Trigger()

2 {

3 foreach(var lift in _lifts)

4 {

5 lift.GoToBottomFloor();

6 lift.OpenDoor();

7 lift.DisablePower();

8 }

9 }

By the way, note that the order in which the messages are sent does matter. For example, if we disabled the power first, asking the powerless lift to go anywhere would be impossible. This is a first sign of a protocol existing between the Alarm and a Lift.

In this communication, Alarm is a sender – it knows what it sends (messages that control lifts), it knows why (because the alarm is triggered), but does not know what exactly are the recipients going to do when they receive the message – it only knows what it wants them to do, but does not know how they are going to achieve it. The rest is left to objects that implement Lift (namely, AutoLift and MechanicalLift). They are the recipients – they don’t know who they received the message from (unless they are told in the content of the message somehow – but even then they can be cheated), but they know how to react, based on who they are (AutoLift has its specific way of reacting and MechanicalLift has its own as well). They also know what kind of message they received (a lift does a different thing when asked to go to the bottom floor than when it is asked to open its door) and what’s the message content (i.e. method arguments – in this simplistic example there are none).

To illustrate that this separation between a sender and a recipient exists, I’ll say that we could even write an implementation of a Lift interface that would just ignore the messages it got from the Alarm (or fake that it did what it was asked for) and the Alarm will not even notice. We sometimes say that this is because deciding on a specific reaction is not the Alarm’s responsibility.

New requirements

It was decided that whenever any malfunction happens in the lift when it is executing the alarm emergency procedure, the lift object should report this by throwing an exception called LiftUnoperationalException. This affects both Alarm and implementations of the Lift interface:

- The

Liftimplementations need to know that when a malfunction happens, they should report it by throwing the exception. - The

Alarmmust be ready to handle the exception thrown from lifts and act accordingly (e.g. still try to secure other lifts).

This is another example of a protocol existing between Alarm and Lift that must be adhered to by both sides. Here is an exemplary code of Alarm keeping to its part of the protocol, i.e. handling the malfunction reports in its Trigger() method:

1 public void Trigger()

2 {

3 foreach(var lift in _lifts)

4 {

5 try

6 {

7 lift.GoToBottomFloor();

8 lift.OpenDoor();

9 lift.DisablePower();

10 }

11 catch(LiftUnoperationalException e)

12 {

13 report.ThatCannotSecure(lift);

14 }

15 }

16 }

Summary

Each of the objects in the web can receive messages and most of them send messages to other objects. Throughout the next chapters, I will refer to an object sending a message as sender and an object receiving a message as recipient.

For now, it may look unjustified to introduce this metaphor of webs, protocols, interfaces, etc. Still, I have two reasons for doing so:

- This is the way I interpret Alan Kay’s mental model of what object-oriented programming is about.

- I find it useful for some things want to explain in the next chapters: how to make connections between objects, how to design an object boundary and how to achieve strong composability.

By the way, the example from this chapter is a bit naive. For one, in real production code, the lifts would have to be notified in parallel, not sequentially. Also, I would probably use some kind of observer pattern to separate the instructions give to each lift from raising an event (I will demonstrate an example of using observers in this fashion in the next chapter). These two choices, in turn, would probably make me rethink error handling - there is a chance I wouldn’t be able to get away with just catching exceptions. Anyway, I hope the naive form helped explain the idea of protocols and messages without raising the bar in other topics.

Composing a web of objects

Three important questions

Now that we know that such thing as a web of objects exists, that there are connections, protocols and such, time to mention the one thing I left out: how does a web of objects come into existence?

This is, of course, a fundamental question, because if we are unable to build a web, we don’t have a web. Also, this is a question that is a little more tricky than it looks like at first glance. To answer it, we have to find the answer to three other questions:

- When are objects composed (i.e. when are the connections made)?

- How does an object obtain a reference to another one on the web (i.e. how are the connections made)?

- Where are objects composed (i.e. where are connections made)?

At first sight, finding the difference between these questions may be tedious, but the good news is that they are the topic of this chapter, so I hope we’ll have that cleared shortly.

A preview of all three answers

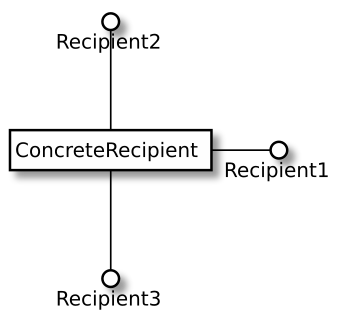

Before we take a deep dive, let’s try to answer these three questions for a naive example code of a console application:

1 public static void Main(string[] args)

2 {

3 var sender = new Sender(new Recipient());

4

5 sender.Work();

6 }

This is a piece of code that creates two objects and connects them, then it tells the sender object to work on something. For this code, the answers to the three questions I raised are:

- When are objects composed? Answer: during application startup (because

Main()method is called at console application startup). - How does an object (

Sender) obtain a reference to another one (Recipient)? Answer: the reference is obtained by receiving aRecipientas a constructor parameter. - Where are objects composed? Answer: at the application entry point (

Main()method)

Depending on circumstances, we may have different sets of answers. Also, to avoid rethinking this topic each time we create an application, I like to have a set of default answers to these questions. I’d like to demonstrate these answers by tackling each of the three questions in-depth, one by one, in the coming chapters.

When are objects composed?

The quick answer to this question is: as early as possible. Now, that wasn’t too helpful, was it? So here goes a clarification.

Many of the objects we use in our applications can be created and connected up-front when the application starts and can stay alive until the application finishes executing. Let’s call this part the static part of the web.

Apart from that, there’s something I’ll call dynamic part – the objects that are created, connected and destroyed many times during the application lifecycle. There are at least two reasons this dynamic part exists:

- Some objects represent requests or user actions that arrive during the application runtime, are processed and then discarded. These objects cannot be created up-front, but only as early as the events they represent occur. Also, these objects do not live until the application is terminated but are discarded as soon as the processing of a request is finished. Other objects represent e.g. items in cache that live for some time and then expire, so, again, we don’t have enough information to compose these objects up-front and they often don’t live as long as the application itself. Such objects come and go, making temporary connections.

- There are objects that have life spans as long as the application has, but the nature of their connections are temporary. Consider an example where we want to encrypt our data storage for export, but depending on circumstances, we sometimes want to export it using one algorithm and sometimes using another. If so, we may sometimes invoke the encryption method like this:

1 database.encryptUsing(encryption1);

and sometimes like this:

1 database.encryptUsing(encryption2);

In the first case, database and encryption1 are only connected temporarily, for the time it takes to perform the encryption. Still, nothing prevents these objects from being created during the application startup. The same applies to the connection of database and encryption2 - this connection is temporary as well.

Given these definitions, it is perfectly possible for an object to be part of both static and dynamic part – some of its connections may be made up-front, while others may be created later, e.g. when its reference is passed inside a message sent to another object (i.e. when it is passed as method parameter).

How does a sender obtain a reference to a recipient (i.e. how connections are made)?

There are several ways a sender can obtain a reference to a recipient, each of them being useful in certain circumstances. These ways are:

- Receive as a constructor parameter

- Receive inside a message (i.e. as a method parameter)

- Receive in response to a message (i.e. as a method return value)

- Receive as a registered observer

Let’s take a closer look at what each of them is about and which one to choose in what circumstances.

Receive as a constructor parameter

Two objects can be composed by passing one into a constructor of another:

1 sender = new Sender(recipient);

A sender that receives the recipient then saves a reference to it in a private field for later, like this:

1 private Recipient _recipient;

2

3 public Sender(Recipient recipient)

4 {

5 _recipient = recipient;

6 }

Starting from this point, the Sender may send messages to Recipient at will:

1 public void DoSomething()

2 {

3 //... other code

4

5 _recipient.DoSomethingElse();

6

7 //... other code

8 }

Advantage: “what you hide, you can change”

Composing using constructors has one significant advantage. Let’s look again at how Sender is created:

1 sender = new Sender(recipient);

and at how it’s used:

1 sender.DoSomething();

Note that only the code that creates a Sender needs to be aware of it having access to a Recipient. When it comes to invoking a method, this private reference is invisible from outside. Now, remember when I described the principle of separating object use from its construction? If we follow this principle here, we end up with the code that creates a Sender being in a totally different place than the code that uses it. Thus, every code that uses a Sender will not be aware of it sending messages to a Recipient at all. There is a maxim that says: “what you hide, you can change”3 – in this particular case, if we decide that the Sender does not need a Recipient to do its job, all we have to change is the composition code to remove the Recipient:

1 //no need to pass a reference to Recipient anymore

2 new Sender();

and the code that uses Sender doesn’t need to change at all – it still looks the same as before, since it never knew Recipient:

1 sender.DoSomething();

Communication of intent: required recipient

Another advantage of the constructor approach is that it allows stating explicitly what the required recipients are for a particular sender. For example, a Sender accepts a Recipient in its constructor:

1 public Sender(Recipient recipient)

2 {

3 //...

4 }

The signature of the constructor makes it explicit that a reference to Recipient is required for a Sender to work correctly – the compiler will not allow creating a Sender without passing something as a Recipient4.

Where to apply

Passing into a constructor is a great solution in cases we want to compose a sender with a recipient permanently (i.e. for the lifetime of a Sender). To be able to do this, a Recipient must, of course, exist before a Sender does. Another less obvious requirement for this composition is that a Recipient must be usable at least as long as a Sender is usable. A simple example of violating this requirement is this code:

1 sender = new Sender(recipient);

2

3 recipient.Dispose(); //but sender is unaware of it

4 //and may still use recipient later:

5 sender.DoSomething();

In this case, when we tell sender to DoSomething(), it uses a recipient that is already disposed of, which may lead to some nasty bugs.

Receive inside a message (i.e. as a method parameter)

Another common way of composing objects together is passing one object as a parameter of another object’s method call:

1 sender.DoSomethingWithHelpOf(recipient);

In such a case, the objects are most often composed temporarily, just for the time of execution of this single method:

1 public void DoSomethingWithHelpOf(Recipient recipient)

2 {

3 //... perform some logic

4

5 recipient.HelpMe();

6

7 //... perform some logic

8 }

Where to apply

Contrary to the constructor approach, where a Sender could hide from its user the fact that it needs a Recipient, in this case, the user of Sender is explicitly responsible for supplying a Recipient. In other words, there needs to be some kind of coupling between the code using Sender and a Recipient. It may look like this coupling is a disadvantage, but I know of some scenarios where it’s required for code using Sender to be able to provide its own Recipient – it allows us to use the same sender with different recipients at different times (most often from different parts of the code):

1 //in one place

2 sender.DoSomethingWithHelpOf(recipient);

3

4 //in another place:

5 sender.DoSomethingWithHelpOf(anotherRecipient);

6

7 //in yet another place:

8 sender.DoSomethingWithHelpOf(yetAnotherRecipient);

If this ability is not required, I strongly prefer the constructor approach as it removes the (then) unnecessary coupling between code using Sender and a Recipient, giving me more flexibility.

Receive in response to a message (i.e. as a method return value)

This method of composing objects relies on an intermediary object – often an implementation of a factory pattern – to supply recipients on request. To simplify things, I will use factories in examples presented in this section, although what I tell you is true for some other creational patterns as well (also, later in this chapter, I’ll cover some aspects of the factory pattern in depth).

To be able to ask a factory for recipients, the sender needs to obtain a reference to it first. Typically, a factory is composed with a sender through its constructor (an approach I already described). For example:

1 var sender = new Sender(recipientFactory);

The factory can then be used by the Sender at will to get a hold of new recipients:

1 public class Sender

2 {

3 //...

4

5 public void DoSomething()

6 {

7 //ask the factory for a recipient:

8 var recipient = _recipientFactory.CreateRecipient();

9

10 //use the recipient:

11 recipient.DoSomethingElse();

12 }

13 }

Where to apply

I find this kind of composition useful when a new recipient is needed each time DoSomething() is called. In this sense, it may look much like in case of the previously discussed approach of receiving a recipient inside a message. There is one difference, however. Contrary to passing a recipient inside a message, where the code using the Sender passed a Recipient “from outside” of the Sender, in this approach, we rely on a separate object that is used by a Sender “from the inside”.

To be more clear, let’s compare the two approaches. Passing recipient inside a message looks like this:

1 //Sender gets a Recipient from the "outside":

2 public void DoSomething(Recipient recipient)

3 {

4 recipient.DoSomethingElse();

5 }

and obtaining it from a factory:

1 //a factory is used "inside" Sender

2 //to obtain a recipient

3 public void DoSomething()

4 {

5 var recipient = _factory.CreateRecipient();

6 recipient.DoSomethingElse();

7 }

So in the first example, the decision on which Recipient is used is made by whoever calls DoSomething(). In the factory example, whoever calls DoSomething() does not know at all about the Recipient and cannot directly influence which Recipient is used. The factory makes this decision.

Factories with parameters

So far, all of the factories we considered had creation methods with empty parameter lists, but this is not a requirement of any sort - I just wanted to make the examples simple, so I left out everything that didn’t help make my point. As the factory remains the decision-maker on which Recipient is used, it can rely on some external parameters passed to the creation method to help it make the decision.

Not only factories

Throughout this section, we have used a factory as our role model, but the approach of obtaining a recipient in response to a message is wider than that. Other types of objects that fall into this category include, among others: repositories, caches, builders, collections5. While they are all important concepts (which you can look up on the web if you like), they are not required to progress through this chapter so I won’t go through them now.

Receive as a registered observer

This means passing a recipient to an already created sender (contrary to passing as constructor parameter where the recipient was passed during creation) as a parameter of a method that stores the reference for later use. Usually, I meet two kinds of registrations:

- a “setter” method, where someone registers an observer by calling something like

sender.SetRecipient(recipient)method. Honestly, even though it’s a setter, I don’t like naming it according to the convention “setWhatever()” – after Kent Beck6 I find this convention too much implementation-focused instead of purpose-focused. Thus, I pick different names based on what domain concept is modeled by the registration method or what is its purpose. Anyway, this approach allows only one observer and setting another overwrites the previous one. - an “addition” method - where someone registers an observer by calling something like

sender.addRecipient(recipient)- in this approach, a collection of observers needs to be maintained somewhere and the recipient registered as an observer is merely added to the collection.

Note that there is one similarity to the “passing inside a message” approach – in both, a recipient is passed inside a message. The difference is that this time, contrary to “pass inside a message” approach, the passed recipient is not used immediately (and then forgotten), but rather it’s remembered (registered) for later use.

I hope I can clear up the confusion with a quick example.

Example

Suppose we have a temperature sensor that can report its current and historically mean value to whoever subscribes to it. If no one subscribes, the sensor still does its job, because it still has to collect the data for calculating a history-based mean value in case anyone subscribes later.

We may model this behavior by using an observer pattern and allow observers to register in the sensor implementation. If no observer is registered, the values are not reported (in other words, a registered observer is not required for the object to function, but if there is one, it can take advantage of the reports). For this purpose, let’s make our sensor depend on an interface called TemperatureObserver that could be implemented by various concrete observer classes. The interface declaration looks like this:

1 public interface TemperatureObserver

2 {

3 void NotifyOn(

4 Temperature currentValue,

5 Temperature meanValue);

6 }

Now we’re ready to look at the implementation of the temperature sensor itself and how it uses this TemperatureObserver interface. Let’s say that the class representing the sensor is called TemperatureSensor. Part of its definition could look like this:

1 public class TemperatureSensor

2 {

3 private TemperatureObserver _observer

4 = new NullObserver(); //ignores reported values

5

6 private Temperature _meanValue

7 = Temperature.Celsius(0);

8

9 // + maybe more fields related to storing historical data

10

11 public void Run()

12 {

13 while(/* needs to run */)

14 {

15 var currentValue = /* get current value somehow */;

16 _meanValue = /* update mean value somehow */;

17

18 _observer.NotifyOn(currentValue, _meanValue);

19

20 WaitUntilTheNextMeasurementTime();

21 }

22 }

23 }

As you can see, by default, the sensor reports its values to nowhere (NullObserver), which is a safe default value (using a null for a default value instead would cause exceptions or force us to put a null check inside the Run() method). We have already seen such “null objects”7 a few times before (e.g. in the previous chapter, when we introduced the NoAlarm class) – NullObserver is just another incarnation of this pattern.

Registering observers

Still, we want to be able to supply our own observer one day, when we start caring about the measured and calculated values (the fact that we “started caring” may be indicated to our application e.g. by a network packet or an event from the user interface). This means we need to have a method inside the TemperatureSensor class to overwrite this default “do-nothing” observer with a custom one after the TemperatureSensor instance is created. As I said, I don’t like the “SetXYZ()” convention, so I will name the registration method FromNowOnReportTo() and make the observer an argument. Here are the relevant parts of the TemperatureSensor class:

1 public class TemperatureSensor

2 {

3 private TemperatureObserver _observer

4 = new NullObserver(); //ignores reported values

5

6 //... ... ...

7

8 public void FromNowOnReportTo(TemperatureObserver observer)

9 {

10 _observer = observer;

11 }

12

13 //... ... ...

14 }

This allows us to overwrite the current observer with a new one should we ever need to do it. Note that, as I mentioned, this is the place where the registration approach differs from the “pass inside a message” approach, where we also received a recipient in a message, but for immediate use. Here, we don’t use the recipient (i.e. the observer) when we get it, but instead, we save it for later use.

Communication of intent: optional dependency

Allowing registering recipients after a sender is created is a way of saying: “the recipient is optional – if you provide one, fine, if not, I will do my work without it”. Please, don’t use this kind of mechanism for required recipients – these should all be passed through a constructor, making it harder to create invalid objects that are only partially ready to work.

Let’s examine an example of a class that:

- accepts a recipient in its constructor,

- allows registering a recipient as an observer,

- accepts a recipient for a single method invocation

This example is annotated with comments that sum up what these three approaches say:

1 public class Sender

2 {

3 //"I will not work without a Recipient1"

4 public Sender(Recipient1 recipient1) {...}

5

6 //"I will do fine without Recipient2 but you

7 //can overwrite the default here if you are

8 //interested in being notified about something

9 //or want to customize my default behavior"

10 public void Register(Recipient2 recipient2) {...}

11

12 //"I need a recipient3 only here and you get to choose

13 //what object to give me each time you invoke

14 //this method on me"

15 public void DoSomethingWith(Recipient3 recipient3) {...}

16 }

More than one observer

Now, the observer API we just skimmed over gives us the possibility to have a single observer at any given time. When we register a new observer, the reference to the old one is overwritten. This is not really useful in our context, is it? With real sensors, we often want them to report their measurements to multiple places (e.g. we want the measurements printed on the screen, saved to a database, and used as part of more complex calculations). This can be achieved in two ways.

The first way would be to just hold a collection of observers in our sensor, and add to this collection whenever a new observer is registered:

1 private IList<TemperatureObserver> _observers

2 = new List<TemperatureObserver>();

3

4 public void FromNowOnReportTo(TemperatureObserver observer)

5 {

6 _observers.Add(observer);

7 }

In such case, reporting would mean iterating over the list of observers:

1 ...

2 foreach(var observer in _observers)

3 {

4 observer.NotifyOn(currentValue, meanValue);

5 }

6 ...

The approach shown above places the policy for notifying observers inside the sensor. Many times this could be sufficient. Still, the sensor is coupled to the answers to at least the following questions:

- In what order do we notify the observers? In the example above, we notify them in order of registration.

- How do we handle errors (e.g. one of the observers throws an exception) - do we stop notifying further observers, or log an error and continue, or maybe do something else? In the example above, we stop on the first observer that throws an exception and rethrow the exception. Maybe it’s not the best approach for our case?

- Is our notification model synchronous or asynchronous? In the example above, we are using a synchronous

forloop.

We can gain a bit more flexibility by extracting the notification logic into a separate observer that would receive a notification and pass it to other observers. We can call it “a broadcasting observer”. The implementation of such an observer could look like this:

1 public class BroadcastingObserver

2 : TemperatureObserver,

3 TemperatureObservable //I'll explain it in a second

4 {

5 private IList<TemperatureObserver> _observers

6 = new List<TemperatureObserver>();

7

8 public void FromNowOnReportTo(TemperatureObserver observer)

9 {

10 _observers.Add(observer);

11 }

12

13 public void NotifyOn(

14 Temperature currentValue,

15 Temperature meanValue)

16 {

17 foreach(var observer in _observers)

18 {

19 observer.NotifyOn(currentValue, meanValue);

20 }

21 }

22 }

This BroadcastingObserver could be instantiated and registered like this:

1 //instantiation:

2 var broadcastingObserver

3 = new BroadcastingObserver();

4

5 ...

6 //somewhere else in the code...:

7 sensor.FromNowOnReportTo(broadcastingObserver);

8

9 ...

10 //somewhere else in the code...:

11 broadcastingObserver.FromNowOnReportTo(

12 new DisplayingObserver())

13 ...

14 //somewhere else in the code...:

15 broadcastingObserver.FromNowOnReportTo(

16 new StoringObserver());

17 ...

18 //somewhere else in the code...:

19 broadcastingObserver.FromNowOnReportTo(

20 new CalculatingObserver());

With this design, the other observers register with the broadcasting observer. However, they don’t really need to know who they are registering with - to hide it, I introduced a special interface called TemperatureObservable, which has the FromNowOnReportTo() method:

1 public interface TemperatureObservable

2 {

3 public void FromNowOnReportTo(TemperatureObserver observer);

4 }

This way, the code that registers an observer does not need to know what the concrete observable object is.

The additional benefit of modeling broadcasting as an observer is that it would allow us to change the broadcasting policy without touching either the sensor code or the other observers. For example, we might replace our for loop-based observer with something like ParallelBroadcastingObserver that would notify each of its observers asynchronously instead of sequentially. The only thing we would need to change is the observer object that’s registered with a sensor. So instead of:

1 //instantiation:

2 var broadcastingObserver

3 = new BroadcastingObserver();

4

5 ...

6 //somewhere else in the code...:

7 sensor.FromNowOnReportTo(broadcastingObserver);

We would have

1 //instantiation:

2 var broadcastingObserver

3 = new ParallelBroadcastingObserver();

4

5 ...

6 //somewhere else in the code...:

7 sensor.FromNowOnReportTo(broadcastingObserver);

and the rest of the code would remain unchanged. This is because the sensor implements:

-

TemperatureObserverinterface, which the sensor depends on, -

TemperatureObservableinterface which the code that registers the observers depends on.