Python Research Agent Using Google Agent Development Kit

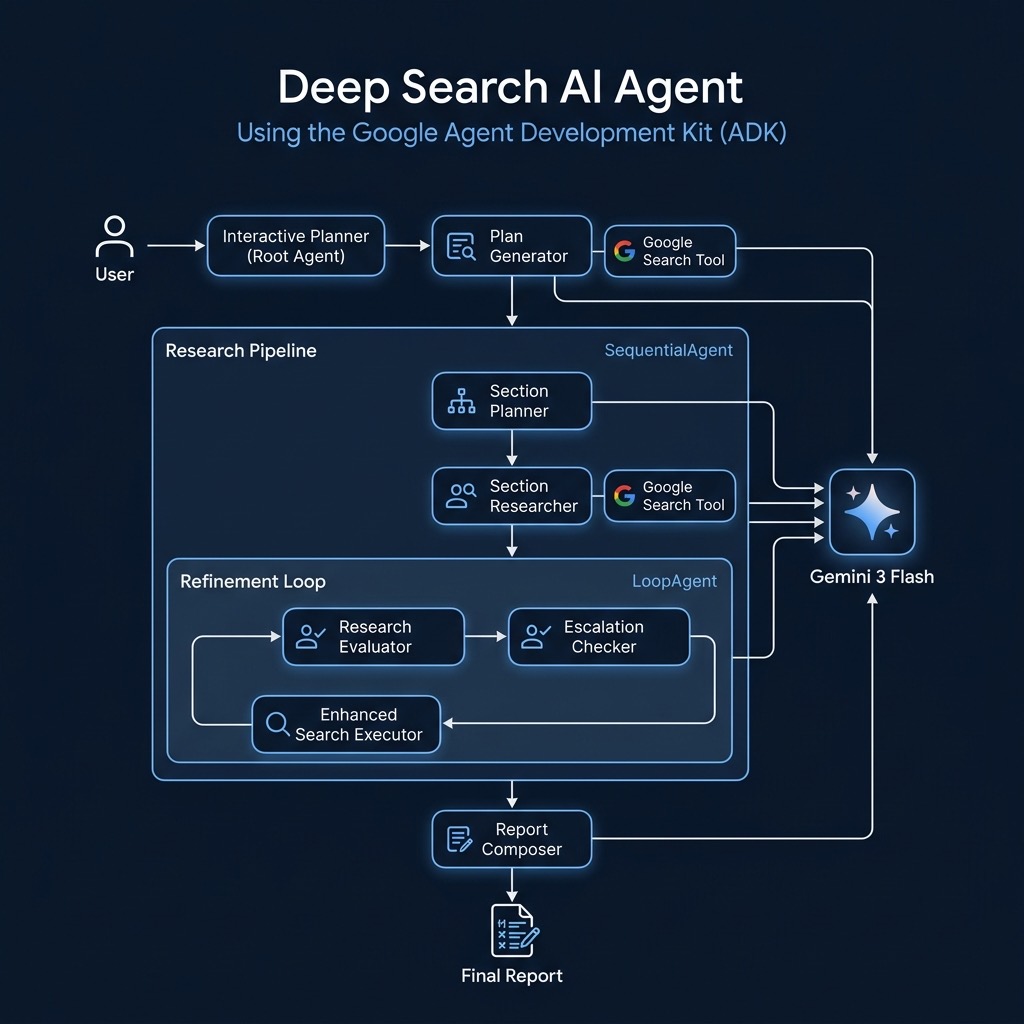

The Google Agent Development Kit (ADK) is an open-source Python framework for building multi-agent AI systems. In this chapter we build a deep research agent that plans investigations, executes web searches, evaluates its own findings, and produces a professionally cited report — all orchestrated by cooperating LLM-powered agents running on the Gemini model family.

Overview of Google Agent Development Kit

The ADK provides composable agent primitives (LlmAgent, SequentialAgent, LoopAgent, and BaseAgent) that let you assemble complex workflows from simple, single-purpose agents. Each agent has its own instruction prompt, optional tools (such as Google Search), and an optional structured output schema defined as a Pydantic model. Agents communicate through shared session state, and the framework handles the event loop, tool dispatch, and callback lifecycle automatically. This design makes it straightforward to build systems where one agent plans, another researches, a third evaluates quality, and a fourth composes the final output.
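The shared-state idea can be illustrated without ADK at all. The following is a minimal plain-Python sketch, not ADK's API: ToyAgent and ToySequential are hypothetical classes written only for illustration. Each toy agent stores its result in a shared dictionary under its output_key, loosely the way an LlmAgent's output_key writes to session state, so a later agent can build on what an earlier one produced.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyAgent:
    """Hypothetical stand-in for an agent: reads shared state, writes one key."""
    name: str
    output_key: str
    run: Callable[[dict], str]

    def __call__(self, state: dict) -> None:
        # Store the result under output_key, similar in spirit to LlmAgent(output_key=...)
        state[self.output_key] = self.run(state)


@dataclass
class ToySequential:
    """Hypothetical stand-in for SequentialAgent: runs sub-agents in order."""
    name: str
    sub_agents: list = field(default_factory=list)

    def __call__(self, state: dict) -> None:
        for agent in self.sub_agents:
            agent(state)


planner = ToyAgent("planner", "research_plan", lambda s: f"Plan for {s['topic']}")
writer = ToyAgent("writer", "report", lambda s: f"Report based on: {s['research_plan']}")

pipeline = ToySequential("pipeline", [planner, writer])
state = {"topic": "solid-state batteries"}
pipeline(state)
print(state["report"])  # Report based on: Plan for solid-state batteries
```

In the real framework the state lives in the session, and LLM agents usually see earlier results through their prompts and the conversation history rather than by indexing a dictionary directly.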

Research Agent

I rewrote Google’s full research agent web app example, simplifying it into a command-line utility.

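The following listing shows the complete deep search agent. It defines seven specialized agents plus an interactive_planner root agent that talks to the user. Six of the seven form a sequential pipeline with an inner refinement loop; the seventh, plan_generator, is called as a tool by the root agent. The interactive_planner receives a research topic from the user, delegates plan creation to the plan_generator, and, once the user approves the plan, hands off to the research_pipeline. Inside the pipeline, the section_planner first outlines the report and the section_researcher executes Google searches and synthesizes findings. A refinement loop then runs: the research_evaluator grades coverage quality, the EscalationChecker exits the loop when the grade is "pass", and otherwise the enhanced_search_executor runs the evaluator's follow-up queries to fill the gaps. The loop runs at most three times. Finally, the report_composer writes a Markdown report, and its callback turns inline citation tags into source links.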
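The control flow of the refinement loop can be sketched in plain Python. This is a simplified model, not ADK code: evaluate, fill_gaps, and the "three findings means pass" rule are hypothetical placeholders for the LLM-driven research_evaluator and enhanced_search_executor. should_escalate applies the same grade == "pass" test as EscalationChecker, and the state keys match the ones used in the listing.

```python
def evaluate(state: dict) -> None:
    """Hypothetical evaluator: passes once at least three findings exist."""
    findings = state["section_research_findings"]
    if len(findings) >= 3:
        state["research_evaluation"] = {"grade": "pass", "comment": "Coverage OK"}
    else:
        state["research_evaluation"] = {
            "grade": "fail",
            "comment": "Gaps remain",
            "follow_up_queries": [{"search_query": f"gap {len(findings) + 1}"}],
        }


def should_escalate(state: dict) -> bool:
    """Same test EscalationChecker applies: stop when the grade is 'pass'."""
    return state.get("research_evaluation", {}).get("grade") == "pass"


def fill_gaps(state: dict) -> None:
    """Hypothetical executor: 'runs' each follow-up query and appends a finding."""
    for query in state["research_evaluation"].get("follow_up_queries") or []:
        state["section_research_findings"].append(f"result for {query['search_query']}")


def refinement_loop(state: dict, max_iterations: int = 3) -> int:
    """Runs evaluator -> checker -> executor, in the LoopAgent's sub_agents order.

    Returns the number of iterations that ran.
    """
    for iteration in range(1, max_iterations + 1):
        evaluate(state)
        if should_escalate(state):  # escalation ends the loop early
            return iteration
        fill_gaps(state)
    return max_iterations


state = {"section_research_findings": ["finding a"]}
print(refinement_loop(state))                 # 3
print(state["research_evaluation"]["grade"])  # pass
```

If the findings never reach the pass threshold, the loop stops after max_iterations with the grade still "fail". The real pipeline behaves the same way: once LoopAgent(max_iterations=3) finishes, the SequentialAgent moves on to report_composer with whatever findings exist.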

```python
# Derived from: https://github.com/google/adk-samples/tree/main/python/agents/deep-search

import re
import datetime
from typing import Literal, AsyncGenerator

from pydantic import BaseModel, Field
from google.genai import types as genai_types

from google.adk.agents import BaseAgent, LlmAgent, LoopAgent, SequentialAgent
from google.adk.agents.callback_context import CallbackContext
from google.adk.agents.invocation_context import InvocationContext
from google.adk.apps.app import App
from google.adk.events import Event, EventActions
from google.adk.planners import BuiltInPlanner
from google.adk.tools import google_search
from google.adk.tools.agent_tool import AgentTool

# Gemini 3 Flash (preview) for balanced speed/reasoning
MODEL_NAME = "gemini-3-flash-preview"

# Note: GOOGLE_API_KEY should be set in your environment


# --- Structured Outputs ---
class SearchQuery(BaseModel):
    search_query: str = Field(description="A specific, targeted query for web search.")


class Feedback(BaseModel):
    grade: Literal["pass", "fail"]
    comment: str
    follow_up_queries: list[SearchQuery] | None = Field(default=None)


# --- Callbacks ---
def collect_sources(callback_context: CallbackContext) -> None:
    """Aggregates sources from grounding metadata into state."""
    session, state = callback_context._invocation_context.session, callback_context.state
    url_map, sources = state.get("url_to_short_id", {}), state.get("sources", {})
    next_id = len(url_map) + 1

    for event in session.events:
        if not (md := event.grounding_metadata):
            continue

        # Give each previously unseen URL a short ID (src-1, src-2, ...)
        for chunk in md.grounding_chunks or []:
            if not chunk.web or not chunk.web.uri:
                continue
            url = chunk.web.uri
            if url not in url_map:
                short_id = f"src-{next_id}"
                url_map[url] = short_id
                sources[short_id] = {"title": chunk.web.title or chunk.web.domain, "url": url}
                next_id += 1

    state["url_to_short_id"] = url_map
    state["sources"] = sources


def replace_citations(callback_context: CallbackContext) -> genai_types.Content:
    """Converts <cite source='src-1'/> tags to Markdown links."""
    text = callback_context.state.get("final_cited_report", "")
    sources = callback_context.state.get("sources", {})

    def replacer(match):
        info = sources.get(match.group(1))
        # Unknown IDs are dropped instead of being left as raw tags
        return f" [{info['title']}]({info['url']})" if info else ""

    # Replace tags, then remove whitespace left before punctuation
    text = re.sub(r'<cite\s+source\s*=\s*["\']?(src-\d+)["\']?\s*/>', replacer, text)
    text = re.sub(r"\s+([.,;:])", r"\1", text)
    # Returning Content makes the linked version the agent's final output
    return genai_types.Content(parts=[genai_types.Part(text=text)])


# --- Agents ---

# 1. Plan Generator: Creates the initial strategy
plan_generator = LlmAgent(
    model=MODEL_NAME,
    name="plan_generator",
    description="Creates or refines a 5-step research plan for a topic.",
    tools=[google_search],
    instruction=f"""
    Create a 5-step research plan.
    Prefix every step with either:
    - **`[RESEARCH]`**: For information gathering.
    - **`[DELIVERABLE]`**: For synthesis/output creation.

    Start with 5 `[RESEARCH]` goals. If these imply a specific output (like a table), add a `[DELIVERABLE][IMPLIED]` step immediately after.
    If refining a plan based on feedback, mark changes with `[MODIFIED]` or `[NEW]`.
    Only use search if strictly necessary to clarify ambiguous topics.
    Date: {datetime.datetime.now().strftime("%Y-%m-%d")}
    """,
)

# 2. Section Planner: Outlines the report structure
section_planner = LlmAgent(
    model=MODEL_NAME,
    name="section_planner",
    output_key="report_sections",
    instruction="""
    Using the 'research_plan', design a Markdown outline (4-6 sections) for the final report.
    Do not include a References section.
    Format: # Section Name \n Brief overview...
    """,
)

# 3. Researcher: The heavy lifter (Search -> Synthesize)
section_researcher = LlmAgent(
    model=MODEL_NAME,
    name="section_researcher",
    tools=[google_search],
    output_key="section_research_findings",
    after_agent_callback=collect_sources,
    planner=BuiltInPlanner(thinking_config=genai_types.ThinkingConfig(include_thoughts=True)),
    instruction="""
    Execute the `research_plan` in two strict phases:

    **Phase 1: Research**
    Process all `[RESEARCH]` goals first. For each, generate 4-5 search queries, execute them, and summarize findings. Store these summaries internally.

    **Phase 2: Synthesis**
    Once Phase 1 is complete, process `[DELIVERABLE]` goals.
    Use the stored summaries to build the requested artifacts (tables, reports, etc).
    Do NOT search during this phase.

    Final output must include all research summaries and deliverable artifacts.
    """,
)

# 4. Evaluator: Checks quality
research_evaluator = LlmAgent(
    model=MODEL_NAME,
    name="research_evaluator",
    output_key="research_evaluation",
    output_schema=Feedback,
    instruction="""
    Evaluate 'section_research_findings'.
    Pass if coverage is comprehensive. Fail if there are gaps.
    If Fail, provide 'follow_up_queries' to fix the gaps.
    """,
)

# 5. Search Executor: Fixes gaps found by Evaluator
enhanced_search_executor = LlmAgent(
    model=MODEL_NAME,
    name="enhanced_search_executor",
    tools=[google_search],
    output_key="section_research_findings",  # Overwrites the key with the merged findings
    after_agent_callback=collect_sources,
    instruction="""
    You are fixing a failed research attempt.
    1. Execute all 'follow_up_queries' from the evaluation.
    2. Synthesize new findings and merge them into 'section_research_findings'.
    """,
)


# 6. Escalation Checker: Breaks the loop if Passed
class EscalationChecker(BaseAgent):
    async def _run_async_impl(self, ctx: InvocationContext) -> AsyncGenerator[Event, None]:
        result = ctx.session.state.get("research_evaluation", {})
        if result.get("grade") == "pass":
            # escalate=True tells the enclosing LoopAgent to stop iterating
            yield Event(author=self.name, actions=EventActions(escalate=True))
        else:
            yield Event(author=self.name)


# 7. Composer: Writes final report with citations
# ADK fills {sources} from session state at run time, so the model sees the valid src-IDs
report_composer = LlmAgent(
    model=MODEL_NAME,
    name="report_composer",
    output_key="final_cited_report",
    after_agent_callback=replace_citations,
    instruction="""
    Write a professional report using 'section_research_findings' and 'report_sections'.
    **CITATIONS:** You MUST cite sources using this format: `<cite source="src-ID" />`.
    Only use IDs from this source list: {sources}
    Do not create a bibliography; use inline citations only.
    """,
)

# --- Pipelines ---

research_pipeline = SequentialAgent(
    name="research_pipeline",
    description="Executes plan, refines via loop, writes report.",
    sub_agents=[
        section_planner,
        section_researcher,
        LoopAgent(
            name="refinement_loop",
            max_iterations=3,
            sub_agents=[research_evaluator, EscalationChecker(name="checker"), enhanced_search_executor],
        ),
        report_composer,
    ],
)

# The Root Agent: Interfaces with the user
interactive_planner = LlmAgent(
    name="interactive_planner",
    model=MODEL_NAME,
    output_key="research_plan",
    tools=[AgentTool(plan_generator)],  # Uses the generator as a tool
    sub_agents=[research_pipeline],     # Delegates to pipeline upon approval
    instruction="""
    You are a research assistant.
    1. Receive user topic.
    2. Call `plan_generator` to create a plan.
    3. Show plan to user.
    4. If user requests changes, call `plan_generator` again.
    5. If user agrees, delegate to `research_pipeline`.
    """,
)

# --- Application ---
app = App(root_agent=interactive_planner, name="DeepSearchApp")

if __name__ == "__main__":
    import asyncio
    import traceback
    from google.adk.runners import InMemoryRunner

    DEBUG = False  # Set to True to log which tools/agents are called

    async def main():
        print("\n--- Deep Search Agent Initialized ---")
        topic = input("Enter the research topic: ")
        print(f"Starting research for topic: {topic}\n")

        runner = InMemoryRunner(app=app)

        user_id = "cli_user"
        session_id = "cli_session"

        # Create the session before the first run
        await runner.session_service.create_session(
            app_name=app.name,
            user_id=user_id,
            session_id=session_id,
        )

        current_input = topic

        while True:
            try:
                message = genai_types.Content(role="user", parts=[genai_types.Part(text=current_input)])
                print("\n--- Agent Response ---")

                async for event in runner.run_async(
                    user_id=user_id, session_id=session_id, new_message=message
                ):
                    if not (event.content and event.content.parts):
                        continue
                    for part in event.content.parts:
                        if DEBUG and part.function_call:
                            print(f"\nDEBUG: {event.author} called {part.function_call.name}")
                        if part.text:
                            print(part.text, end="", flush=True)

                print("\n----------------------")

                current_input = input("\n(Enter to continue, or type feedback/instruction. Type 'quit' to exit)\n> ")
                if current_input.strip().lower() in ["quit", "exit"]:
                    break
                if not current_input.strip():
                    current_input = "proceed"

            except KeyboardInterrupt:
                print("\nExiting...")
                break
            except Exception as e:
                print(f"\nError: {e}")
                traceback.print_exc()
                break

    asyncio.run(main())
```
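The two callbacks form a provenance round trip: collect_sources gives every grounding URL a stable short ID, and replace_citations turns the model's cite tags back into links. The sketch below reproduces that logic on plain Python data so it can be tried without calling Gemini. The functions assign_short_ids and render_citations are hypothetical names, and the (url, title) tuples stand in for the grounding chunks that ADK events carry; the two regular expressions are the same ones used in replace_citations.

```python
import re

# Same tag pattern as replace_citations in the listing
CITE_TAG = re.compile(r'<cite\s+source\s*=\s*["\']?(src-\d+)["\']?\s*/>')


def assign_short_ids(urls_and_titles, url_map=None, sources=None):
    """Assigns src-N IDs to unseen URLs, as collect_sources does (simplified)."""
    url_map = {} if url_map is None else url_map
    sources = {} if sources is None else sources
    next_id = len(url_map) + 1  # continue numbering across calls
    for url, title in urls_and_titles:
        if url not in url_map:
            short_id = f"src-{next_id}"
            url_map[url] = short_id
            sources[short_id] = {"title": title, "url": url}
            next_id += 1
    return url_map, sources


def render_citations(text: str, sources: dict) -> str:
    """Applies the same two substitutions as replace_citations to a plain string."""
    def replacer(match):
        info = sources.get(match.group(1))
        return f" [{info['title']}]({info['url']})" if info else ""

    text = CITE_TAG.sub(replacer, text)
    return re.sub(r"\s+([.,;:])", r"\1", text)  # drop spaces before punctuation


chunks = [
    ("https://example.com/a", "Paper A"),
    ("https://example.com/b", "Paper B"),
    ("https://example.com/a", "Paper A"),  # duplicate URL keeps src-1
]
url_map, sources = assign_short_ids(chunks)
report = ('Cells degrade faster when hot <cite source="src-1" />. '
          'Unknown claim <cite source="src-9"/>.')
print(render_citations(report, sources))
```

The known tag becomes a Markdown link, the tag for the unknown src-9 is dropped, and the stray space it leaves before the final period is removed. Because the replacement string starts with a space, a tag that already follows a space produces two spaces before the link, which is harmless once the Markdown is rendered.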

The deep search agent demonstrates several patterns that are broadly useful when building agentic AI systems: breaking a complex task into focused sub-agents, using structured Pydantic outputs to enforce data contracts between agents, implementing self-improving loops with quality evaluation, and tracking provenance through source citation callbacks. These same patterns can be adapted for other workflows such as competitive analysis, literature review, market research, or any task where iterative search and synthesis produces better results than a single LLM call.