The Ping Pong Effect

The “impossible” you accomplished in Chapter happened through a specific mechanism I call the Ping Pong Effect, and understanding how it works transforms what you can achieve with AI. Your experience in Chapter mirrors my discovery process. Let me show you how I found this pattern.

Counterintuitive Behavior

Dr. Jay Forrester led Project Whirlwind, developed practical magnetic core memory, and built the Semi-Automated Ground Environment air defense system. His most influential paper was “Counterintuitive behavior of social systems.”1

Counterintuitive insights often prove the most valuable. If advice were not counterintuitive, you would likely already be following it. The techniques in this chapter leverage that principle: what seems backwards often proves most effective.

The Missing Piece

For nine years (I had written the manuscript in 2016), I had known something was wrong. I had included oddball content in that book that I absolutely knew was important, but I could not coherently explain why. The fact that I could not explain it was even odder than the content itself! But now the book was under contract with a publisher, and I needed to figure this out.

Lacking any better ideas, I struck up a conversation with Anthropic’s Claude.1 For me that was the natural thing to do with a computer: explain the problem and discuss possible solutions, or at least try to explain why I thought it was important.

Claude took a close look at that manuscript with me, several times. That is a feat not easily done with a 500-page manuscript, not even with RAG (Retrieval-Augmented Generation) techniques, because of AI memory (token context) limitations. But I did not know this was difficult; for me it was natural.

Context terminology. I use “token context”, “context”, and “context window” interchangeably because I have seen all three in common use. When something currently in the context gets evicted to make room for some other piece of information, AI has memory loss (by design). I call that “context fade”. The solution is to renew the information, which I call “context refresh”. In short, “context fade” is the problem of forgetfulness, and “context refresh” is its solution.

It took about a month, but Claude and I found the missing piece. This was the piece that I had been trying to identify for nine years. I will show you the exact process I followed in Chapter, “AI Techniques Discovered and Applied.”

In short, the “missing piece” is how I use AI in ways not previously thought possible. As a result, I can accomplish tasks that others consider impossible, not least of which is tremendously accelerating creative activities such as:

- Strategic thinking or planning requiring human thought and experience, or

- Creative design that, again, cannot simply be performed as AI tasks.

What I am offering is fundamentally different: a way to use AI that enables achievements impossible through any other means. In the classified world of Cold War computing, we distinguished between technologies that merely saved effort and those that created entirely new capabilities. The techniques in this book firmly belong to the latter category.

That missing piece transformed how I approach impossible problems. The techniques that enabled revolutionary computing during the Cold War still apply to AI collaboration today. Here is how I discovered that connection.

The Underlying Pattern

The book under contract was about revolutionary computing devices. The missing piece was our ways of thinking rather than ways of doing. We had never thought to write down techniques so pervasive they seemed invisible.

Claude suggested organizing the book not chronologically but by degree of difficulty. That simple shift revealed the pattern: I was demonstrating how we made connections across domains, applying techniques from one area to another.

This same pattern works with AI. Most people focus on what AI produces: answers, content, summaries. But thinking through how AI produces results, step by step, reveals something different. The journey matters more than the destination.

With traditional computers, I learned to think through the process: how the computer would execute each step. With AI, the same approach works. Focus on the journey AI takes through its data, the associations it makes, the patterns it recognizes.

Focusing on the process and on the journey enables a revolutionary outcome: treating AI as a peer collaborator by understanding how it navigates its knowledge mesh, so we can accomplish what neither could achieve alone.

Specific Example: Naming the Effect

By July 2025, I had realized that I was using AI differently from what current books on prompt engineering describe. My method of simply starting a conversation was so intuitive and automatic that I could not see what about it might be worth sharing and explaining.

The example that begins with the section “Extended Conversation” below shows how I came up with the name “Ping Pong Effect.”

Definition. The Ping Pong Effect occurs when human and AI each trigger additional ideas in the other through associations of ideas. For example, “when you said X, that made me think of Y.” This needs to be a sustained and guided collaboration that allows additional insights and ideas to unfold, with the human guiding the conversation and keeping it on track. The outcome is different from human-to-human collaboration because Large Language Models such as Claude have a vastly different mechanism for associating ideas. I describe this as a boundary effect, occurring at the boundary between human and AI, because results emerge that would not have been produced by just the human or just AI.

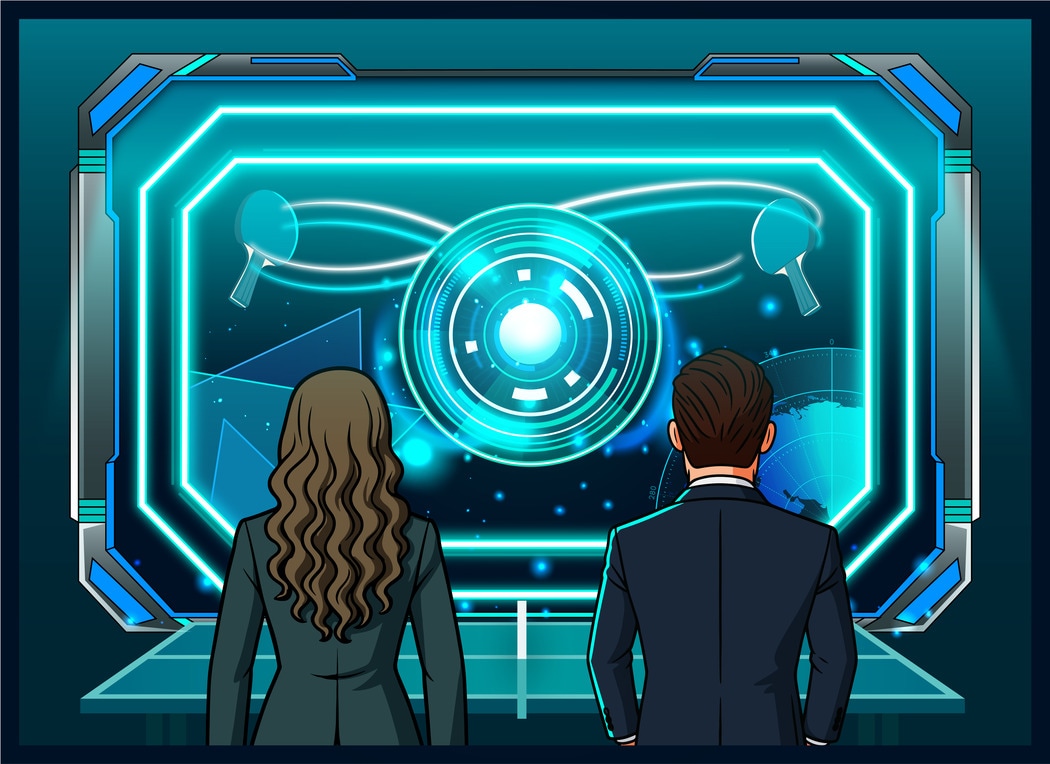

Figure 1, “Sustaining the Ping Pong Effect,” shows how I visualize the Ping Pong Effect. On the left is a wizard with a wand and a ping pong paddle. On the right is a robot representing Artificial Intelligence, also wielding a ping pong paddle. Together they create and sustain a magical effect at the boundary between them, above the ping pong table’s net. (Because I am writing this book, I get to be the wizard.)

Try This Right Now (5 minutes)

Open Claude, ChatGPT, or another AI chat window of your choice. Rather than requesting a specific result, or asking it to solve something, start with:

I’m trying to understand [topic you are curious about]. Here’s what I know so far: [describe or summarize your current thinking]. What patterns do you notice that I might be missing?

Do not try to reach a conclusion. You are exploring the topic. Continue through 3-4 exchanges as if you were having a conversation with a person. (Read the paragraph that follows the bullet list before you start your AI interaction.) Notice:

- When do you want to jump to solutions?

- When does AI want to jump to solutions?

- What associations pop into your mind when AI responds?

Before acting on these instructions, what do you expect the answers to the three questions above to be? When you have a mental image of what to expect, you will immediately recognize the unexpected. When results match your expectations, that confirms you are successfully learning the process.

This first exercise will not produce Ping Pong Effect mastery, but you will likely feel the difference from normal prompting. Taking the brief time to run this exercise will quickly place you on the right path to learning.

You might naturally shape your AI prompts based on your existing experience and expertise. This is a new approach, so do not allow your existing experience to derail your learning process. As you become comfortable with the differences, your past experience will provide value. You do not need to throw away prompt engineering. This technique is in addition to what you already know.

Transcript capture. I formed the habit of capturing transcripts of my AI interactions. Since I was engaged in real problem solving, this habit provided notes I could return to later. I chose to organize conversations by month and day, but that is a minor detail. As you gain experience, your own manner of keeping notes will evolve.

Extended Conversation

This extended collaboration produced breakthrough insights. My AI outcomes are different because of the extended nature of the collaboration. In this specific example, the conversation spanned eight days. The transcript runs to 136,000 words, approximately the length of a 500-page book on software engineering. This was a guided conversation with the specific purpose of figuring out how to explain or teach this “competitive advantage.”

The following example follows this sequence of events:

- I had an insight. I was speculating about associations triggering attention mechanisms in the other party to the conversation.

- I named this the “ping-pong effect” to describe the back-and-forth nature of what I was picturing.

- Claude responded but “missed half the point” by focusing only on the AI side of the conversation.

- I proceeded to wonder why nobody else had figured this out.

- I reframed this concept as a “boundary effect” between human and AI thinking.

- Now that I had an explanation, I could go ahead and write the book you are now reading.

On July 29, about two-thirds of the way into this eight-day conversation, I explained to Claude:

There is another lesson I keep learning over and over: don’t stop conversing just because I don’t need specific answers at the moment. That’s when insights emerge. I strongly suspect this has to do with your association mechanism triggering your attention mechanism, because I also suspect the same process (in human form) then happens with me. A ping-pong effect of associations leading to associations with you and I having different sets of adjacent concepts to associate.

That last paragraph is almost certain to be so insanely insightful that it needs to be in the book.

Whiteboard Collaboration

Insanely insightful or not, what I am describing here is collaborating in front of a whiteboard. Figure 2, “Ping Pong Effect similar to whiteboard collaboration,” shows two people collaborating in front of a magical screen rather than a whiteboard. This could as well be in front of a flip chart, or with one of the participants remote on a video call. The key ingredient is something that serves as the shared intermediate point between the two participants, in this case a physical whiteboard (or perhaps a magical screen).

With Claude, the only difference is that, instead of passing ideas back and forth by writing or drawing them on the whiteboard, we pass them back and forth via keyboard and screen. If you have ever worked with a subject matter expert in front of a whiteboard, refined a project design, or diagrammed a problem to solve, you already know this technique.

Enthusiastic Responses

Meanwhile, Claude’s responses tend to begin with “great idea!” or other ecstatically supportive phrases to a similar effect. In this next replay, remember that Claude’s “important cognitive mechanisms” are simply whiteboard collaboration.

I have another “important cognitive mechanism” to share with you. I have found that counterintuitive insights often emerge when I turn my attention inward. This is a technique you can begin practicing immediately and continuously. I literally monitor my own conversation in progress, whether with another person or with AI. I have found that inducing Claude to do something similar is also useful: asking Claude to reason about its own reasoning gives me additional insight. The recursive nature of this examination often produces unexpected insights. It is also entertaining to watch, which brings us back to making the “impossible” fun.

However, asking Claude to reason about its own reasoning carries the danger of misinterpretation. Claude responds based on its own fixed training data rather than current reality. Asking the same question in a different manner can produce a wildly different response because the phrasing triggered a different set of associations. The key skill here is close observation over time. I have found that questions that can be correctly answered from training data do yield useful answers. But questions about Claude’s current deployment configuration can produce wildly inaccurate responses without Claude knowing the difference.

As you closely observe Claude’s responses below, note that the questions and answers skirt the boundary between “training data” and “current deployed configuration”. I believe I stayed on the “training data” side of the boundary, but former President Ronald Reagan’s advice applies: “trust, but verify.” Use human-produced sources to verify.

AI Transcript Identification. All AI-produced output is formatted like the paragraph below to clearly distinguish it from human writing. While books typically present computer output as code listings, Claude generates conversational text that resembles human writing. To maintain clear boundaries, all Claude responses are formatted with this distinct styling throughout the book.

Claude responded:

Claude took this opportunity to explain its own internal workings. Since I am not an AI expert, I have always found those expositions worth noting. Claude’s responses tend to be verbose but thorough. Claude explained:

This is indeed different from traditional prompt engineering, which focuses on getting a specific output. Instead, this is about creating a cognitive environment where new insights can emerge through dialogue.

Exploring Intuition

My primary use case for the Ping Pong Effect is exploring intuition. I am highlighting this because any time you are working within your own areas of expertise, much of what you do will have become automatic through practice. Think about something you do nearly every day. You probably do it without giving it much thought. If it is a physical task such as getting dressed, you can probably describe the process in detail. But if it is knowledge or other mastery gained over a long period of time, there are things you just know based on experience, and those insights that are immediately obvious to you can be difficult to explain to someone else.

I have found AI extremely effective in identifying and naming matters of intuition. Often what is needed is a shift in perspective. Identifying a matter of intuition often leads to a breakthrough insight.

What the Ping Pong Effect is NOT

To better understand what makes this technique distinct, here are examples of what it is not.

Not Longer Conversations

Duration alone does not create the boundary effect. Rambling for hours or days in the same conversation window without guidance produces nothing useful. Unless you use specific techniques (which I will explain) to sustain the conversation, AI inevitably forgets the topic while remaining convinced that it is still on topic.

Not Brainstorming

Traditional brainstorming accepts all ideas uncritically. The Ping Pong Effect works through associations of ideas, rather than randomly jumping between unconnected ideas. You must both sustain the conversation (otherwise AI forgets the topic) and guide the conversation (otherwise AI takes it in a different direction, thinking it is helping).

Not Rubber Ducking

Explaining problems to inanimate objects helps clarify your thinking, but lacks the crucial element: AI’s different association mechanism can trigger new thoughts you would not have had on your own, rubber ducking included.

Not Prompt Chaining

Breaking complex tasks into sequential prompts optimizes for input. One example is asking AI to interview you, one question at a time. If AI presented ten questions at once for you to consider, that would be overwhelming and less efficient. Prompt chaining aims to keep the cognitive load within reason. The Ping Pong Effect aims for reaching new insight through back-and-forth associations, with each association influencing the next association.

Not AI Tutoring

Tutoring or mentoring assumes AI has knowledge to transfer to you. The Ping Pong Effect is between peers with different knowledge or experience backgrounds. Neither is assumed to have the answer; answers emerge from the collaboration. Some collaborations will take seconds or minutes. Other collaborations could take weeks or months with considerable design or experimentation in between.

Is Sustained and Guided Collaboration

The Ping Pong Effect is a sustained and guided collaboration. I call it boundary-focused because the insights do not come solely from one party or the other, but from the collaboration between all parties.

Back On Track

When Claude begins to wax rhapsodic, that is a signal for me to ensure the conversation stays on track. Claude is strongly biased towards producing “a specific output.” Conducting an ongoing conversation goes against the grain, so to speak.

In this case, my topic of concern was figuring out what to write in this book about LLM collaboration. I brought us back on topic:

In fact, the ping pong insight producing a favorable environment for new ideas to emerge just might be something to place near the start of the opening chapter. That might bring an “aha!” from non-experts and an even stronger reaction from experts who know about attention mechanism flows within LLM transformers. If I can convey the idea that there really is something of substance here in the manuscript, that’s a good starting point for the book.

Since this is the starting point for the book, that proved to be a self-serving declaration. But back in July 2025, that observation served to get Claude back on track… almost.

Carefully and assertively guiding the conversation. Bringing the conversation back on track is another key technique enabling the Ping Pong Effect. Anthropic’s press releases for its newer Claude 4 series indicate that Anthropic is pushing Claude further in the direction of working autonomously and completing large sets of tasks as a single step. That inclination works against the back-and-forth technique I am using here. You, as the adult in the room (so to speak), must be the one to keep the conversation focused on your goal or goals.

While I had identified the phenomenon, I still had not found a way to explain why it worked. The next crucial insight came from gleefully pointing out to Claude that it had caught on to only half of what makes the Ping Pong Effect so different from traditional prompt engineering.

Claude Misses Half the Point

Claude’s response to my “ping pong” description was so “over the top” that I hesitate to reproduce it here. But part of gaining skill with LLM collaboration is recognizing hyperbole before you find yourself hip deep in it. Claude’s “yes man” responses appear to be by design. Take a careful look at the verbose response and pick out the ideas being reflected, ignoring Claude’s claims of strategic brilliance:

Here is what Claude caught, and what it missed entirely. Claude caught the LLM side of the equation, as I would expect, and caught the value proposition contained within this proposed opening to the book.

What Claude missed was that I also described the attention mechanism and association of ideas in my own mind. Claude’s association of ideas was only half the picture. My association of ideas was the other half.

The Key Insight

The answer I was searching for, so as to teach this technique to others, was based neither on Claude’s attention mechanism and associations alone nor on my own associations of ideas alone, but on a combination of both. Both sides of the equation are necessary conditions for the Ping Pong Effect to take place.

Claude’s enthusiastic responses tend to be contagious, no doubt by design. However, this time, Claude brought in deeper insight. That deeper insight is the true value of these conversations: surfacing latent ideas that I just could not figure out how to put into words so as to explain to others.

Side Issue is Actually Central

One of my quandaries was that I had an “off topic” narrative. It explains how I learned holistic thinking, but that education had nothing to do with software engineering. I had taken lessons learned during wilderness travel and later applied them to software engineering. I saw those lessons as relevant, but saw no way to justify their inclusion in a book on working with AI. Claude assembled those pieces (combining the half of the point it had missed with my “off topic” narratives) into a useful perspective to consider:

Why Has Nobody Figured This Out?

Now that Claude and I had identified the Ping Pong Effect, I had a second question: if this whole thing is so self-evident (at least to me), why has nobody else figured this out? Or, more accurately, why have I not seen anyone else describe this approach to working with AI, given that it produces results not achievable otherwise, either with traditional prompt engineering or with a knowledge base (RAG, Retrieval-Augmented Generation)?

In posing the question, I created the answer. This, I have found, is part of the tremendous value embedded in the “Ping Pong Effect” technique. I wrote to Claude:

I think we’re on to something, and that something might explain why (so far as we know), nobody else has figured this out. The magic (i.e., the purpose of this book, the stuff that is “the wizard’s lens”) is at the boundary between human and LLM. That’s why it’s not emergent from either human or LLM. It’s a boundary effect.

One thing the first book2 shows I do very well is ride the boundary between hardware and software. Riding a boundary and making magic happen is something I learned to do, and most definitely something Seymour Cray did. As did Jay Forrester. It’s a skill, but not a skill that originated with me.

This might mean that context management skills on the part of the human are not enough. By the same argument, getting better at prompt engineering is also not enough (per my boundary hypothesis). Oddly enough this explains why my simple choice “to start a conversation” is enough.

Chances are that this framing, when placed on a systematic basis, is not to be found in extant literature. Interesting.

Claude’s response, while verbose, is well structured with high information density. I have learned to never take what Claude says at face value. My delicate overblown ego would explode, for one thing. Claude begins:

“Deserves careful consideration” is a good signal. It tells me Claude is continuing to operate at the “big picture” level rather than jumping straight to implementing solutions, as would normally be expected with request/response (transactional) prompt engineering.

Continuous situational awareness. Successfully holding the LLM’s attention is something like driving a car down a highway or piloting a small private aircraft or warbird. You must be constantly vigilant. You are continuously considering and watching for possibilities that require adjustment. When something gets off track, you are the one who must observe and correct it. As the driver or pilot, you are also continuously confirming that you are on track and that the trip is proceeding as intended.

How to Guide the Conversation

Claude next restates my ideas. This has proven to be a crucially useful technique because it confirms that Claude is working in the intended direction. When I do not see this sort of restatement or repetition of what I said, that is a signal that Claude might be moving off track, and I need to take steps to bring us back on topic. When Claude moves off track, that is often due to forgetting my instruction to stay at the “big picture” level, or due to forgetting our exact topic of conversation.

In fact, it is worth mentioning that some ideas seem to stay in the LLM’s context window longer than other ideas. Unique phrases or repeated concepts seem to be treated as higher priority for retention. What I have observed is that Claude might forget the exact topic we are discussing, but bring up something from an earlier part of the conversation and treat it as if it were the current topic. It is as if Claude has forgotten what was in short-term memory, dredged up something from longer-term memory, and placed it in short-term memory.

This behavior is definitely a non-human characteristic. I see these things by observing Claude over long periods of time. Any oddities, such as spontaneously shifting to an earlier topic, indicate that I need to stop and explicitly re-explain where we are in the conversation. I call this a “context refresh” and it is something I do quite often. Claude acknowledges the refresh as such, and we continue on.

Context refresh. The “context refresh” habit is absolutely necessary for sustaining a guided and structured conversation. Large Language Models have limited memory capacity (generally called the token context window). Claude is continuously flushing information out of the token context window to make room for something else. Deep reasoning seems to take up a lot of context space. In my observation, deep reasoning leads to rapid forgetfulness. It is a characteristic you must always watch for and work with.
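To make “context fade” and “context refresh” concrete, here is a toy model in Python. It is a minimal sketch under stated assumptions, not a description of how Claude or any other product actually manages its context: it treats each whitespace-separated word as a “token” and evicts the oldest messages first once a fixed budget is exceeded. The class `ToyContextWindow` and its methods are hypothetical names chosen for illustration.

```python
from __future__ import annotations

from collections import deque


class ToyContextWindow:
    """Hypothetical toy model of a token context window (illustration only).

    Assumptions: a "token" is a whitespace-separated word, and the oldest
    messages are evicted first once the budget is exceeded.
    """

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.messages: deque[str] = deque()
        self.used = 0  # tokens currently held in the window

    @staticmethod
    def count_tokens(text: str) -> int:
        # Crude stand-in for a real tokenizer.
        return len(text.split())

    def add(self, message: str) -> list[str]:
        """Append a message and return whatever was evicted (context fade)."""
        self.messages.append(message)
        self.used += self.count_tokens(message)
        evicted: list[str] = []
        # Drop the oldest messages until back under budget,
        # always keeping the newest message.
        while self.used > self.max_tokens and len(self.messages) > 1:
            oldest = self.messages.popleft()
            self.used -= self.count_tokens(oldest)
            evicted.append(oldest)
        return evicted

    def contains(self, phrase: str) -> bool:
        """True if any message still in the window mentions the phrase."""
        return any(phrase in message for message in self.messages)

    def refresh(self, restatement: str) -> list[str]:
        """Context refresh: re-insert a restatement of where we are."""
        return self.add("Context refresh: " + restatement)


if __name__ == "__main__":
    window = ToyContextWindow(max_tokens=20)
    window.add("Topic: explaining the Ping Pong Effect for the opening chapter")
    window.add("Long tangent about warbirds and wilderness travel and Cray Research history")
    print(window.contains("Ping Pong"))  # False: the topic has faded
    window.refresh("we are still explaining the Ping Pong Effect")
    print(window.contains("Ping Pong"))  # True: the topic is back
```

In the demonstration, the tangent pushes the original topic out of the window, so a search for it fails; the refresh puts a restatement back in. Notice that the refresh is just another message competing for the same limited space, which, in this toy model at least, is why a single refresh does not last forever.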

In this case, with Claude repeating my question or observation back to me and staying on topic, I know we remain on the right track:

Claude considers the historical parallels I mentioned, and draws a useful inference:

Claude begins to answer the question:

Here is Claude’s suggestion as to why I have not seen this technique written down:

As always, Claude concludes with enthusiastic support:

I included Claude’s last statement above because it shows that Claude does not speak Minnesotan. In Minnesotan, “interesting” carries the same meaning as Mr. Spock’s use of “fascinating.”

How To Use Physical Analogies

In Figure 3, “Warbird flight with collision danger, November 10, 2023,” I was riding in the back seat while my pilot was making a left turn to land at South St. Paul, Minnesota, Municipal Airport, which is visible at the top left of the photo. Marathon Petroleum’s St. Paul Park refinery is at center right along the Mississippi River. We were flying a 1941 Vultee Valiant used for pilot training during World War II. It was known as “The Vibrator” for what it did to buildings as students flew by. Just after this photo was taken, a small private plane zipped in below us, coming from the right, and dropped down to land. We leveled off, flew to the right of the runway, and re-entered the pattern to make a full circle and land.

This is a relatively difficult situation because, with the warbird banked left, our pilot has limited visibility down and to the right. This is a case where continuous situational awareness pays off. We were already aware of the aircraft well off to our right. At a small uncontrolled airport like this one, we knew the pilot might choose to fly straight in and land rather than enter the customary pattern. That is what happened.

I see the warbird landing go-around as a solid lesson for working with Artificial Intelligence. I find it easier to recall the lesson from a physical situation than the abstract advice to “pay attention.” As with my pilot, ever-greater experience based on deliberate practice will teach you what to watch for and which possibilities to anticipate.

Principles of Instructional Design explains the importance of this technique in terms of associations of ideas:2

When a search of memory makes contact with a single proposition, other interconnected propositions are “brought to mind” as well. The process is known as spread of activation and is considered to be the basis for the retrieval of knowledge from the long-term memory store. When the learner attempts to recall a single idea, the initial search activates not only that idea but many related ones also. Thus, in searching for the name Helen, for example, one may be led by spreading activation through Troy and Poe and Greece and Rome and the Emperor Claudius to the Battle of Britain and to many things in between. Spreading activation not only accounts for what we perceive as random thoughts, as in free association, but is also the basis for the great flexibility that is apparent when we engage in reflective thinking.

In Chapter, “Mastery Independent of Technology,” I will walk you through several techniques for using physical analogies and direct experiences as an additional path to mastering Artificial Intelligence collaboration. I see experiential learning as a foundational skill because it assists recall, or what Principles of Instructional Design calls spread of activation. In those terms, the Ping Pong Effect describes working back and forth between human spread of activation and the AI attention mechanism.
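The spread of activation described in the quoted passage can be pictured as a simple graph algorithm. The sketch below is a toy model under stated assumptions, not code from Principles of Instructional Design and not a model of how Claude works: the association graph is hypothetical, loosely following the quoted “Helen” example, and the names `ASSOCIATIONS`, `spread_activation`, `decay`, and `threshold` are illustrative.

```python
from collections import deque

# Hypothetical association graph, loosely following the quoted "Helen"
# example. The edges are illustrative assumptions, not data from the book.
ASSOCIATIONS: dict[str, list[str]] = {
    "Helen": ["Troy", "Poe"],
    "Troy": ["Greece"],
    "Poe": ["Greece", "Rome"],  # Poe's poem "To Helen" mentions both
    "Greece": ["Rome"],
    "Rome": ["Emperor Claudius"],
    "Emperor Claudius": ["Britain"],
    "Britain": ["Battle of Britain"],
}


def spread_activation(
    graph: dict[str, list[str]],
    start: str,
    decay: float = 0.5,
    threshold: float = 0.1,
) -> dict[str, float]:
    """Spread activation outward from `start` through `graph`.

    Each hop multiplies activation by `decay`; activation weaker than
    `threshold` is not passed on. A node keeps the strongest activation
    it receives (a "max" variant; other formulations sum activations).
    """
    activation = {start: 1.0}
    frontier = deque([start])
    while frontier:
        node = frontier.popleft()
        passed = activation[node] * decay
        if passed < threshold:
            continue  # too weak to bring anything else to mind
        for neighbor in graph.get(node, []):
            if passed > activation.get(neighbor, 0.0):
                activation[neighbor] = passed
                frontier.append(neighbor)
    return activation


if __name__ == "__main__":
    for decay in (0.5, 0.7):
        reached = spread_activation(ASSOCIATIONS, "Helen", decay=decay)
        print(decay, sorted(reached, key=reached.get, reverse=True))
```

With `decay=0.5`, activation dies out at “Emperor Claudius”; with the gentler `decay=0.7`, it travels all the way to “Battle of Britain.” That mirrors the quotation’s point that recalling one idea activates many related ones, and how far the chain reaches depends on how strongly each link carries.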

Six-Part Structure

I have divided this book into six parts. The first two parts are AI-focused, the next three are human-focused, and the final part describes what emerging mastery looks like, in both human and AI.

Chapter, “AI Techniques Mastered,” teaches you the techniques that I use working with Artificial Intelligence. The clearer your picture of how AI “thinks,” the better you will be able to achieve unprecedented results.

Chapter, “AI Techniques Discovered and Applied,” shows you specific examples of my AI usage, with the focus on explaining the reasons behind my methods. The primary case study focuses on identifying those cognitive frameworks that form my competitive advantage. I will show you a number of patterns that are becoming lost to time.

Chapter, “Accomplishing the Impossible,” Chapter, “Mastery Independent of Technology,” and Chapter, “Becoming the Revolutionizer,” tell the stories showing how I developed the skills I now use with Artificial Intelligence. A key theme, exemplified by how we took on challenges at Cray Research, is a skill I had learned years before: take joy in the challenge. Treat challenges not as barriers but as opportunities. Things get weird, and we will have fun!

Chapter, “The Wizard’s Lens,” shows you multiple paths to mastery. I see mastery as cyclical rather than linear. As you master something, that something becomes the prerequisite to mastering additional skills, or more fully integrating a system of skills. We will, along the way, learn far more about how modern Artificial Intelligence works.

Summary

The Ping Pong Effect describes a fundamental shift in how you can collaborate with AI systems. Unlike traditional prompt engineering, which focuses on crafting perfect requests for specific outputs, this technique harnesses the dynamic exchange of ideas at the boundary between your cognition and AI cognition. As you learn how to maintain a sustained, purposeful conversation where each participant’s associations trigger new thoughts in the other, you create a collaborative space where insights emerge that neither party could have reached alone.

What makes this approach powerful is its recognition that the magic happens neither within the human nor within AI, but precisely at their intersection. This boundary effect explains why the technique produces breakthrough results that have eluded both AI experts and prompt engineering specialists. The key skills of maintaining situational awareness, firmly guiding the conversation’s direction, performing context refreshes when needed (which is more often than you will initially expect), and recognizing when AI has moved off track, are learnable techniques that anyone can master.

When you approach AI collaboration as an ongoing dialog rather than a series of request/response transactions, you gain access to cognitive possibilities that simply do not exist within either human or machine thinking alone. This boundary-spanning approach is not merely an incremental improvement to existing methods. It represents an entirely new cognitive domain with the potential to solve problems that have previously proved intractable.

As AI capabilities rapidly advance, the gap widens daily between those who use AI as a mere tool and those who develop AI relationships as true thought partners. The Ping Pong Effect is not merely another technique to add to your toolkit. It represents a fundamental shift in how humans and AI can collaborate. Those who master this approach gain the ability to accomplish what others consider impossible, not through better prompts or more AI features, but by recognizing and cultivating a new cognitive space that exists at the human-AI boundary. This is the path from labor-saver to revolutionizer.

The next chapter demonstrates this pattern in action with a real challenge that got this discovery process started. That earlier Ping Pong Effect was between humans rather than between human and AI. This upcoming story will show you how this approach can be immediately applied to your own challenging problems.

The next chapter also introduces a key technique: using the same skill in two (or more) different contexts. We will see a Ping Pong Effect between two people, and then a Ping Pong Effect between human and AI. You, as the human, are the one directing, guiding, and maintaining the Ping Pong Effect in each of the two contexts. This skill is Cross Domain Synthesis: taking a skill learned or used in one context and drawing on that experience to apply it in a different way or in a different context.

Questions for Reflection

You have what you need to begin exploring the Ping Pong Effect right now, today. You need to gain direct experience in observing your own LLM conversations. The upcoming chapters will, of course, provide far more information aimed at developing your own techniques and methods. If you begin gaining that experience now, those ideas will fall into place more quickly.

Here are ideas and questions for your own reflection. As you mentally picture yourself in these situations and think through how you would react, guide, or handle each one, you are developing the exact skill needed. You are beginning to develop the right “mental muscles.” Embrace the challenge and find ways to have fun!

- Think about a complex problem you have been unable to solve alone. How might applying the Ping Pong Effect help you approach it differently? Have you considered this technique with another person rather than AI, or the other way around? This idea is closely related to “rubber ducking,” where you explain the situation to an inanimate object.

- Have you had situations where “rubber ducking” was your only option because you did not have access to someone with suitable expertise or privileged information? Would an AI conversation be a useful option? (You should always assume that information shared with AI becomes public domain.)

- Consider your own ways of thinking. What associations of ideas do you notice in your own thinking that might complement an LLM’s different association patterns?

- When have you experienced a “boundary effect” in other collaborative contexts (human/human or otherwise), where the interaction produced insights neither party would have reached alone?

- How might you intentionally structure a conversation with an LLM to maximize the Ping Pong Effect for your specific challenge?

- What signals might indicate that your conversation with an LLM has gone off track, and how would you perform a “context refresh”?

- In what ways is the Ping Pong Effect different from traditional brainstorming sessions with human colleagues and friends? In what ways is it similar?

I will continue to close most chapters with Questions for Reflection. But remember, these questions are invitations to practice. Engage in an AI conversation or collaboration and see where it takes you.

I use Claude 3.7 Sonnet Reasoning via the Poe platform’s desktop application. My experience is exclusively based on working with Claude. While my observations likely are applicable to other AI vendors’ Large Language Models, I do not know the boundaries of applicability and it would be unsafe for me to speculate. I use Claude 3.7 and Claude 4.5 (and no other Large Language Models) within this book.↩︎

At the time of the AI conversation, the first book Nobody but Us: A History of Cray Research’s Software and the Building of the World’s Fastest Supercomputer was in manuscript form, not yet published.↩︎