Chapter 2: The 2026 Policy Landscape

A Yearly Snapshot of the Sector

The CampusCISO IT Policy Framework describes the elements of a well-developed higher education IT policy program. This guide shows you how to interpret and apply that framework. This chapter shows you what the sector has actually built against it as of 2026.

We publish a new edition of the framework each year, grounded in fresh data from hundreds of colleges and universities. The 2026 edition draws on 410 institutions, including every R1 research university in the United States. The data behind this chapter comes from that review.

The snapshot changes each year. Ransomware response procedures we found at 57% of Tier 1 institutions today will land at a different percentage next year, because more institutions will write them. AI governance policies at 47% today will either climb sharply or stall out, and either outcome tells you something. International Travel Security standards at 6% today are the kind of number that has nowhere to go but up.

Because the numbers move, the chapter moves with them. You’re reading the 2026 edition. If you’re reading this in 2027 or later, pick up the newer edition for the current measurements. The other chapters in this guide won’t have changed much. This one will.

About the Data

Instead of starting with what frameworks tell institutions to do, we looked at what they’re doing. For each institution, we identified the policy repository, mapped every document to our framework inventory, and evaluated how their governance structure coordinates security decisions.

Throughout this guide, we organize institutions into CampusCISO Institutional Tiers for benchmarking. CampusCISO uses these tiers across our diagnostic services to provide granularity without identifying specific institutions.

The tiers are currently based on the Carnegie Classification of Institutions of Higher Education, but we designed the system to accommodate non-US institutions in future updates.

| CampusCISO Tier | Current Composition (2026 Sample Size) |
|---|---|
| Tier 1 | R1: Doctoral universities, very high research activity (187) |
| Tier 2 | R2/RCU: Doctoral universities, high research activity (86) |
| Tier 3 | Masters and non-research graduate institutions (39) |
| Tier 4 | Baccalaureate colleges (35) |
| Tier 5 | Community and associate’s colleges (38) |
| Special Focus | Specialized and tribal institutions (25) |
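If you want to check how the sample is weighted before you benchmark against it, the short sketch below totals the tier sample sizes from the table and shows each tier's share of the review. The names `SAMPLE_SIZES` and `tier_shares` are ours, chosen for illustration. They are not part of the framework.

```python
# Sample sizes copied from the tier table above (2026 edition).
SAMPLE_SIZES = {
    "Tier 1": 187,
    "Tier 2": 86,
    "Tier 3": 39,
    "Tier 4": 35,
    "Tier 5": 38,
    "Special Focus": 25,
}


def tier_shares(sizes):
    """Return each tier's share of the total sample, as a percentage."""
    total = sum(sizes.values())
    return {tier: round(100 * n / total, 1) for tier, n in sizes.items()}


total = sum(SAMPLE_SIZES.values())
shares = tier_shares(SAMPLE_SIZES)

print(f"Total institutions reviewed: {total}")
for tier, share in shares.items():
    print(f"{tier}: {share}% of the sample")
```

The total comes to 410, matching the figure reported for the 2026 edition. Tier 1 makes up a little under half of the sample, which is worth keeping in mind when you compare figures across tiers.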

We aligned the framework to common standards, including NIST CSF 2.0, NIST SP 800-171, CIS Controls v8, and ISO 27001/27002. The goal is to complement those standards, not compete with them.

This framework has three limitations worth noting.

- The research measures publicly documented policies, so institutions with robust but unpublished policies may appear less developed.

- Assessments reflect a point in time (December 2025 through January 2026).

- The presence of a policy document doesn’t guarantee effective implementation.

This framework analyzes governance architecture, not operational effectiveness.

What we found wasn’t a single coherent standard. It was hundreds of institutions solving the same problems in different ways, with different names, unique structures, and varying levels of completeness. We distilled those diverse approaches into a common framework of 17 policies that establish governance authority and institutional intent, plus 24 standards that specify technical requirements and operational procedures. Throughout this book, we’ll refer to policies and standards using reference numbers like P-01 for policies and S-01 for standards.

Each year’s research refines the framework as institutional practice evolves. The 2026 edition provides a map of where the sector has built consensus and where critical gaps remain. Some policies are nearly universal. Others are quickly gaining traction but haven’t reached critical mass. And some, including ones that federal regulators already ask about, barely exist at all.

Three Patterns That Define the Landscape

Governance Debt doesn’t accumulate evenly. When we analyzed the data, three distinct patterns emerged, each revealing something different about where higher education stands today. You’ll probably recognize some of them in your own environment.

| Pattern | What it measures | What our review found |
|---|---|---|
| Authentication Floor | Whether basic identity controls (passwords S-03, access control S-06) are documented | Over 80% at every tier. The one place the sector has consensus. |
| Ransomware Cliff | Whether a ransomware response plan is documented | 57% at Tier 1, 41% at Tier 2, 2% at Tier 4. The plan thins out fast at smaller institutions. |
| Oral Tradition Liability | Whether technical standards are written down, not just policies | Policies exceed 75% at every tier, but published standards fall from 76% at Tier 1 to 37% at Tier 5. |

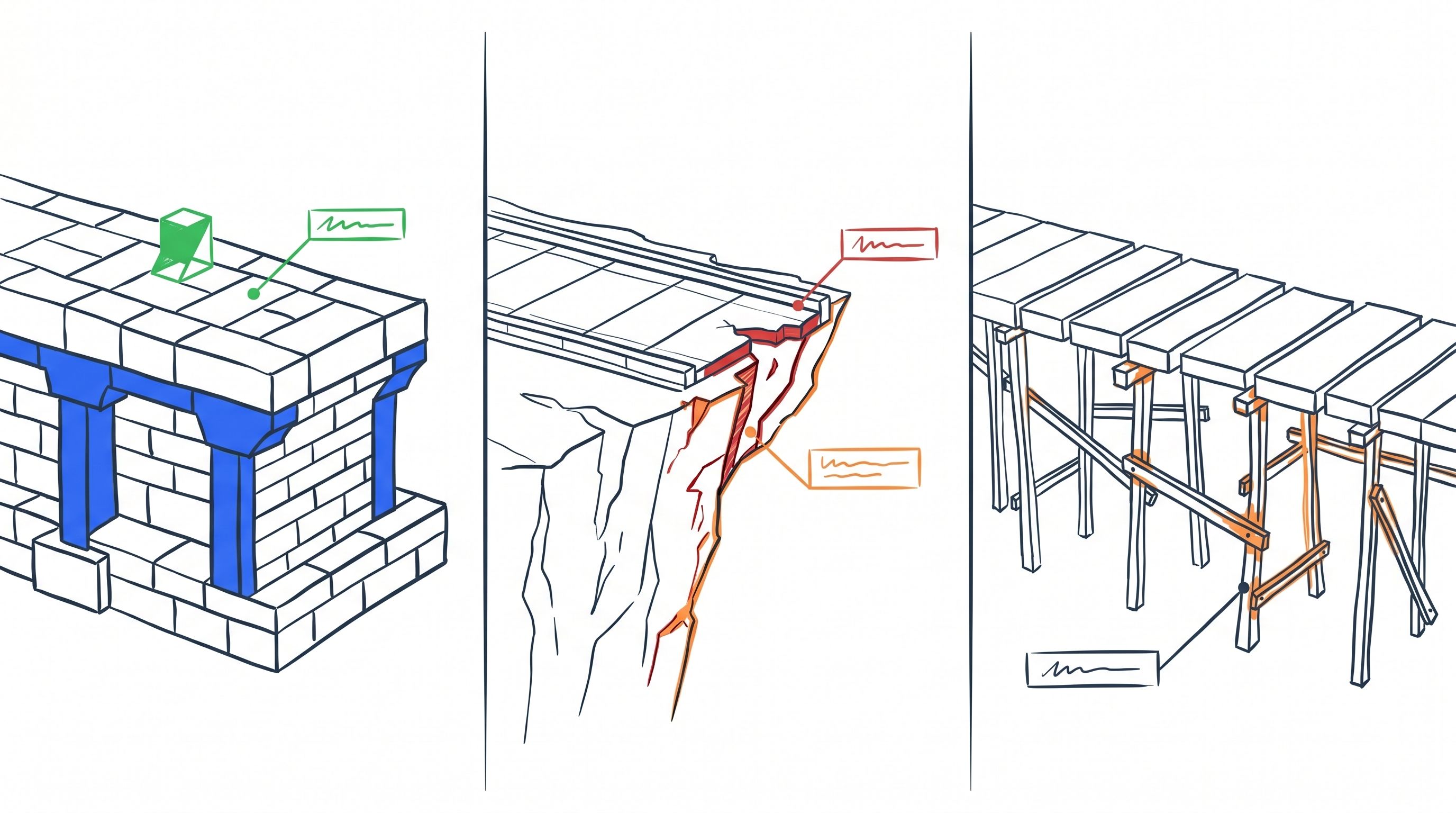

The Authentication Floor

The good news is that some things are working. Over 80% of institutions at every tier have adopted standards for passwords (S-03) and access controls (S-06). Regardless of size, budget, or complexity, nearly every institution has built at least this much. This is the floor, and it proves that sector-wide consensus on security controls is possible. The question is why so few policies and standards have reached such widespread adoption.

The floor is real, but it measures breadth, not depth. A password standard that still mandates legacy complexity rules (eight characters, one uppercase, one special character) instead of current guidance (longer length, passkeys, and phishing-resistant MFA) still counts as present, even though it gives a false sense of safety. And few institutions have standards that govern the service accounts, API keys, machine credentials, and AI agent identities that now outnumber human users on most campus networks.

The Ransomware Cliff

You already know cyber threats are getting worse. By Q1 2025, weekly cyberattacks against the education sector had increased 73% year-over-year. By Q2 2025, education was absorbing an average of 4,388 attacks per week, more than double the global cross-sector average [1]. Studies consistently show education is one of the most-attacked sectors globally.

So how many institutions have documented what to do when ransomware activates at 2 AM? At Tier 1 institutions, 57%. At Tier 2, 41%. At Tier 4 (baccalaureate) institutions, 2%. The institutions most likely to lack dedicated security staff are also the least likely to have written down a response plan.

The Oral Tradition Liability

The backup schedule lives in a sysadmin’s head. The patching timeline is “when we get to it.” The access review process is “we know who should have an account.”

Across the sector, more than 75% of institutions at every tier publish their IT policies to public repositories. But the technical standards that turn those policies into operational practice tell a different story. Published standards drop from 76% at Tier 1 to just 37% at Tier 5. We call this decline Tier Decay, reflecting how documented practice thins out as you move down the tiers.
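One way to put a number on Tier Decay is to look at both the percentage-point drop and the relative decline between the top and bottom tiers. The sketch below does that for the published-standards figures above. The function `tier_decay` and its two-number output are our illustration of the idea, not a formal metric defined by the framework.

```python
def tier_decay(top_pct, bottom_pct):
    """Return (percentage-point drop, relative decline in percent).

    top_pct and bottom_pct are prevalence figures for the highest and
    lowest tiers being compared, expressed as percentages.
    """
    if top_pct <= 0:
        raise ValueError("top_pct must be greater than zero")
    point_drop = top_pct - bottom_pct
    relative_decline = 100 * point_drop / top_pct
    return point_drop, round(relative_decline, 1)


# Published standards: 76% at Tier 1, 37% at Tier 5 (figures from this chapter).
points, relative = tier_decay(76, 37)
print(f"Drop: {points} percentage points ({relative}% relative decline)")
```

For published standards, that is a 39-point drop, or roughly half of the Tier 1 level. You can run the same comparison on your own peer group if you have the figures.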

Sometimes the gap reflects documentation hidden behind authentication that an outside assessment can’t reach. But in our advisory work with campuses of all sizes, we often find something more fundamental. The work is usually getting done. It just isn’t written down. The documentation either doesn’t exist, or it hasn’t been updated in years and stays locked away rather than exposing the accumulated Governance Debt.

Governance Debt hits smaller institutions especially hard. Often, one or two people carry the entire operational playbook in their heads. But the principle applies to institutions of all sizes. When your team doesn’t know what’s expected, you can’t expect the outcomes you need. Staff turnover erases undocumented practices overnight. Auditors and insurance underwriters can’t verify what isn’t written down. And decentralized IT units can’t follow standards that don’t exist in a form they can reference.

The Authentication Floor proves the sector can reach consensus. The other two patterns show where Governance Debt is compounding, and why understanding the current state matters before you start measuring your own institution against it.

Additional Gaps Across the Sector

Beyond the three diagnostic patterns, we identified several additional gaps worth calling out. These are areas where the regulatory environment or the threat landscape has moved faster than institutional documentation.

Research Security Documentation. Federal research security requirements have expanded significantly in recent years. NSPM-33 (National Security Presidential Memorandum 33) arrived in 2021, the CHIPS and Science Act followed in 2022, and CMMC 2.0 took effect in December 2024. Institutional documentation has not kept pace. At Tier 1 institutions, we found International Travel Security (S-24) published at just 6% and Security Exception Management (S-23) at 7%. These are the operational standards that make federal research compliance possible.

AI Governance Policy. At Tier 1, we found documented AI governance policies at 47% of institutions. At Tier 3 (non-research graduate), adoption dropped to 32%. AI tools are everywhere, but governance hasn’t caught up. Institutions’ exposure to AI risks that affect data protection and academic integrity grows every day.

Third-Party Risk Monitoring. 95% of institutions assess vendors during procurement, but only 15% continue monitoring afterward [2]. Breaches involving third-party providers like MOVEit [3] and Blackbaud [4] showed what can happen after the contract is signed.

Zero Trust Architecture. We found a Zero Trust standard at only 7% of Tier 1 institutions, dropping to nearly zero at smaller institutions. Even though 70% of security professionals want training on Zero Trust [5], almost no institution has a documented Zero Trust strategy.

Identifying policy gaps at your institution may feel overwhelming, but we’ll help you prioritize which to correct first.

Interpreting the Data

Two conventions shape how the data in this chapter should be read. The first distinguishes prevalence from applicability. The second defines the prevalence tiers the rest of the book uses.

Prevalence vs. Applicability

Before we go further, there’s one thing to be aware of. This guide shows the “prevalence” of different policies and standards. This represents what percentage of institutions have documented a policy or standard, not how many institutions need one.

For example, we found Identity Theft Prevention (P-16) policies at only 11% of Tier 1 institutions. That doesn’t mean identity theft prevention isn’t important. The FTC Red Flags Rule has required written identity theft prevention programs since 2009 for any institution that extends credit to students (including tuition payment plans). The 11% figure likely means the sector has a policy documentation gap, not that 89% of institutions made a deliberate decision to skip it.

The same logic applies to emerging items like AI Governance (P-12) at 47% and International Travel Security (S-24) at 6%. Low prevalence can show that a requirement is new, that institutions are slow to document existing practices, or that the sector has a genuine blind spot. Low adoption rates don’t make the item optional for your institution. Your regulatory profile (Chapter 7) determines which policies and standards you need. Prevalence data tells you where you stand relative to peers, not where you should stop building.
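The distinction can be sketched as a simple set comparison: applicability defines what you need, your inventory defines what you have, and prevalence never enters the calculation. The example below is a minimal sketch with a hypothetical institution. The `required` and `documented` sets are made up for illustration, and the only applicability rule taken from this chapter is the FTC Red Flags Rule example for P-16.

```python
def documentation_gaps(required, documented):
    """Return the items an institution needs but has not documented.

    Both arguments are collections of framework reference numbers such as
    "P-16" or "S-03". Sector prevalence is deliberately not an input.
    """
    return sorted(set(required) - set(documented))


# Hypothetical institution that offers tuition payment plans. Under the
# Red Flags Rule example above, P-16 applies no matter how rare it is.
required = {"P-16", "S-03", "S-06"}   # illustrative applicability set
documented = {"S-03", "S-06"}         # illustrative policy inventory

gaps = documentation_gaps(required, documented)
print(gaps)
```

Here the gap list contains only P-16. The 11% prevalence figure explains why the gap is common. It does nothing to close it.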

Prevalence Tiers

In the framework, we calculate prevalence as the percentage of Tier 1 institutions that have made a policy or standard publicly available. Prevalence tells you how common an item is across the sector. It doesn’t tell you whether that item applies to your institution.

Based on the prevalence data, we divide the IT Policy Framework into three prevalence tiers. These are separate from the CampusCISO Institutional Tiers used for benchmarking.

| Tier | Definition |
|---|---|
| Universal | Found at 90% or more of Tier 1 institutions. Typically legally or operationally essential. |
| Common | Found at 50-89% of Tier 1 institutions. Indicators of well-developed security programs. |
| Emerging | Found at fewer than 50% of Tier 1 institutions. Growing in importance. |
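The thresholds in the table translate directly into a small classification rule. The sketch below applies them to a few Tier 1 figures quoted in this chapter. The function name `prevalence_tier` is ours, and we assume prevalence is given as a percentage between 0 and 100.

```python
def prevalence_tier(tier1_pct):
    """Map a Tier 1 prevalence percentage to a prevalence tier name."""
    if not 0 <= tier1_pct <= 100:
        raise ValueError("prevalence must be between 0 and 100")
    if tier1_pct >= 90:
        return "Universal"
    if tier1_pct >= 50:
        return "Common"
    return "Emerging"


# Tier 1 prevalence figures quoted in this chapter.
examples = {
    "Ransomware response plan": 57,
    "AI Governance (P-12)": 47,
    "Identity Theft Prevention (P-16)": 11,
    "International Travel Security (S-24)": 6,
}

for item, pct in examples.items():
    print(f"{item}: {pct}% -> {prevalence_tier(pct)}")
```

Under these thresholds, the ransomware response plan lands in Common and the other three land in Emerging. The label describes how widespread an item is, not whether your institution needs it.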

What the Data Leaves Out

The framework measures what’s written down. It doesn’t measure why.

Why has the sector reached broad consensus on authentication standards but not on ransomware response? Why do 94% of Tier 1 institutions publish policies while only 37% of Tier 5 institutions publish their standards? Why are institutions with the smallest security teams also the least likely to have a documented plan for the threats they face most?

The numbers surface the patterns. They don’t explain them.

The rest of the book works on the explanation. Chapter 3 maps the cultural and operational site conditions that shape what higher education institutions can build. From there, the book walks through framework alignment, the regulatory loads institutions carry, the 41-item inventory in detail, the research enterprise, and the operational model that holds it all together.

The data in this chapter is the starting point. The rest of the book is how you use it.

Chapter 2 Key Takeaways

Chapter 3 looks at the ground the sector builds on: shared governance, academic freedom, the open campus, and the cultural dynamics that make higher education security programs fundamentally different from anything in the corporate playbook.

[1] Sophos, The State of Ransomware in Education 2025 (2025).

[2] EDUCAUSE, “EDUCAUSE QuickPoll Results: Third-Party Risk Management Practices in Higher Education,” August 2024, https://er.educause.edu/articles/2024/8/educause-quickpoll-results-third-party-risk-management-practices-in-higher-education.

[3] Dark Reading, “MOVEit Breach Impact on Higher Education,” 2023, https://www.darkreading.com/cyberattacks-data-breaches/moveit-breach-impact-higher-education.

[4] Inside Higher Ed, “US Institutions Affected by Blackbaud Cyberattack,” July 2020, https://www.insidehighered.com/quicktakes/2020/07/31/us-institutions-affected-blackbaud-cyberattack.

[5] ISC2, “Zero Trust Skills Gap,” June 2024, https://www.isc2.org/Insights/2024/06/Zero-Trust-Skills-Gap.