An Introduction to Artificial Intelligence

I don’t believe that we can talk about AI in a meaningful way without addressing our own human cognition. Since this is primarily a programming book on how to write AI systems, the material in this chapter can be skipped or read later if you want to move on to the cookbook material. Here, I want to provide some non-programming background material that I hope you find interesting.

The word cognitive refers to thinking. When we talk about cognitive computing we intend to understand how we can model the human brain in sufficient detail to write programs that simulate processing input into an internal representation and generate output. In later chapters we will start with training simple feed forward neural network classification models. When we speak of learning a model we mean the process of learning a set of parameters that map input patterns to desired outputs, that is, to be able to make predictions given data similar to the data used to train a model. In the case of neural networks, the parameters are the numeric values of the connection weights in the neural network. Modern neural network architectures mimic the human brain closely enough to make studying human cognition a practical addition to the math and software implementation techniques for simulated neural networks.

There are two extremes to modeling the human brain: abstracting away the biological details of the brain, like how neurons fire, with the goal of solving practical problems, or creating detailed (and computationally expensive) low level biological models. We will use the first approach. That said, we want to understand how our brains work, largely because it is intellectually stimulating to understand how we can quickly learn new things, process episodic data and keep it in isolation in the hippocampus, chain together deductions, etc.

Overview

Here we are most interested in practical applications of AI technology. I hope that working through this book will have a positive effect on your career.

We will look briefly at how the human brain works and then list the practical engineering techniques developed in the field of AI.

An Overview of How the Human Brain Works

In the following subsections we will look at neural network models of memory: artificial and biological neural networks.

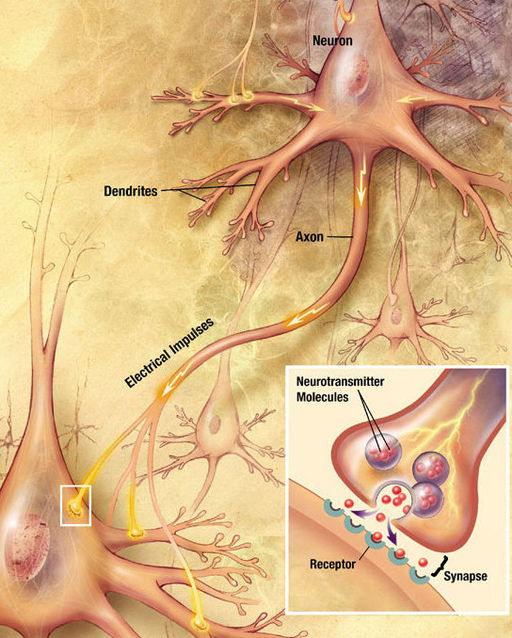

The following figure shows a representation of human neurons:

We will use this figure to discuss a model for biological neurons collecting activation, spiking, and firing. When a neuron fires it sends a signal outwards through a synapse that in turn connects to the inputs (dendrites) of other neurons. Dendrites branch out from a neuron, like branches on a tree, to collect the output from other neurons when they fire.

Inputs to neurons usually carry a positive (excitatory) signal but they may also carry a negative (inhibitory) signal. Later when we look at artificial neural networks, these types of connections will be called positive and negative weights. Neurons also receive a small input that leaks in from nearby tissue. Later when we use artificial neural networks, this leakage signal will be called a “bias” input.

This process of activation energy flowing through a real (human or animal) neural network is largely “feed forward” in one direction and performs pattern matching, memory storage, and reactive control. The human brain can also retain episodic memories: sequences of events. The hippocampus is largely responsible for these time sequenced memories. Later when we look at artificial neural networks, we will sometimes use feedback loops that can remember sequences in addition to the patterns that a feed forward network can remember. Artificial neural networks with feedback loops are called recurrent neural networks, and they mimic processes in our brains. Current state of the art practice uses Long Short Term Memory (LSTM), which is a type of recurrent neural network that is capable of recognizing time sequenced patterns that are separated in time. The TensorFlow library has support for experimenting with LSTM. An analogy that I like is recognition of a recurring theme when listening to a symphony. The hippocampus provides neural loops that learn specific patterns and can recognize these patterns in the future.

The chemical processes in a human brain are fairly well understood and certainly much more complicated than the relatively simple models for artificial neurons and the connections between them, but I find it reassuring that these simple artificial neural network models that are so effective for solving engineering problems are also similar in spirit to how our brains work.

Have you wondered how your brain can do such a good job at pattern recognition? We can recognize people in different clothing or hair styles, after years of not seeing them, and so on. This flexibility is possible because neurons and the connections between them in the neocortex form hierarchical memory structures. At the top of these hierarchical clusters, closest to input signals, collections of neurons with their interconnections learn to recognize fine grained features, like the shape of facial elements (nose, eyes, mouth, etc.), hair color, beards and mustaches, and eye color. As we proceed down this hierarchy, neural clusters recognize increasingly abstract patterns; for example, is the person in front of us a man or a woman. The main point here is that the memory structures in our brain that provide this flexibility are both distributed and hierarchical. Another key feature of our brains is that neuron activation tends to be sparse; that is, relatively few neurons are firing in response to vision, hearing, and touch at any instant in time, and those that are firing do so in response either to these direct external inputs or to signals propagated by connected neurons that are firing from earlier external stimuli. Later we will use deep learning neural networks that learn how to self-organize similar hierarchical clusters that recognize specific features in data. Deep learning neural networks often use sparse connections between simulated neurons.

One defining feature of human brains is what philosopher David Chalmers calls “the hard problem,” which is explaining why we have inner thoughts (qualia) that can be daydreams or other thoughts that are independent of external stimuli from our environment. As I write this in February 2017 I know of no AI systems that have such qualia.

Introduction to Artificial Neural Networks

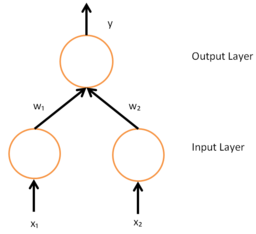

The following figure shows the simplest type of artificial neural networks which from now on I will simply call “neural networks.” Two input signals X1 and X2 are used to set the activation energy of the two neurons at the bottom of the figure. The connection between the input neuron on the left and the single output neuron is characterized by a weight value W1 and the other input neuron is connected by a weight with a strength W2. To calculate the activation of the output neuron, we multiply the first input neuron’s activation by W1 and add this value from multiplying the second input neuron’s activation by the value W2. In order to keep activation values bounded, the output of a neuron (like the output neuron in this figure) is often gated by a “squashing” function.
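As a minimal sketch of this calculation, the following Python code computes the activation of the single output neuron from two inputs and two weights, and then passes the weighted sum through a Sigmoid squashing function. The function names and the numeric values are my own illustrative assumptions, not values taken from the figure.

```python
import math


def sigmoid(x: float) -> float:
    """Squash any real value into the open range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def output_activation(x1: float, x2: float, w1: float, w2: float) -> float:
    """Weighted sum of the two input activations, then squashing."""
    weighted_sum = x1 * w1 + x2 * w2
    return sigmoid(weighted_sum)


# Illustrative (assumed) input activations and connection weights.
activation = output_activation(x1=1.0, x2=0.5, w1=0.8, w2=-0.4)
print(activation)
```

Note that a negative weight (here W2) acts like an inhibitory connection: it pulls the weighted sum, and so the output activation, downwards.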

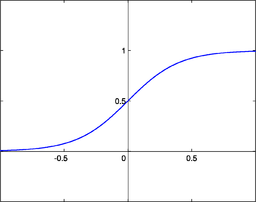

The following figure shows the shape of a typical Sigmoid “squashing function.” Details vary, here the range is [0,1] but some people use a range of [-0.5, 0.5]:

We will use the Sigmoid function for simple feed forward neural networks. Later we will also use the hyperbolic tangent function as a squashing function, but the general shape and effect are similar. We will also be using the derivative of the Sigmoid function for learning the connection weights.
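The sketch below, using only the Python standard library, shows one common way to write the Sigmoid function, its derivative, and a shifted variant with range [-0.5, 0.5]. The function names are my own, and the derivative uses the well known identity s'(x) = s(x) * (1 - s(x)).

```python
import math


def sigmoid(x: float) -> float:
    """Standard Sigmoid with range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x: float) -> float:
    """Derivative of the Sigmoid, written in terms of the Sigmoid itself."""
    s = sigmoid(x)
    return s * (1.0 - s)


def shifted_sigmoid(x: float) -> float:
    """Same shape, shifted down so that the range is (-0.5, 0.5)."""
    return sigmoid(x) - 0.5


# Compare the squashing functions at a few sample points.
for x in (-4.0, 0.0, 4.0):
    print(x, sigmoid(x), sigmoid_derivative(x), shifted_sigmoid(x), math.tanh(x))
```

The derivative is largest at x = 0 and approaches zero for large positive or negative inputs, which is why weights change most quickly when a neuron's input is near the middle of the curve.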

Imagine a simple neural network (no hidden neurons) with three inputs X1, X2, and X3. These three values define the location of a point in a three-dimensional space, and the “decision surface” is now a two-dimensional plane through this three-dimensional space. The fun begins when we have many inputs. For example, if we have 20 inputs, that defines a 20-dimensional space and if there are no hidden layer neurons then the “decision surface” is a 19-dimensional hyperplane. Don’t worry if you have a difficult time visualizing this - everyone does!
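To make the idea of a decision surface concrete, here is a small sketch for a network with no hidden neurons. The weights, the optional bias argument, and the function names are illustrative assumptions. With a Sigmoid output, the output is exactly 0.5 where the weighted sum is zero, so the sign of the weighted sum tells us on which side of the plane (or hyperplane) a point lies.

```python
def weighted_sum(inputs, weights, bias=0.0):
    """Sum of input * weight over all inputs, plus an optional bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias


def side_of_decision_surface(inputs, weights, bias=0.0):
    """Report where a point lies relative to the surface weighted_sum == 0."""
    total = weighted_sum(inputs, weights, bias)
    if total > 0:
        return "above"
    if total < 0:
        return "below"
    return "on"


# Hypothetical weights for three inputs X1, X2, and X3.
weights = [1.0, -1.0, 0.5]

print(side_of_decision_surface([1.0, 0.0, 0.0], weights))
print(side_of_decision_surface([0.0, 1.0, 0.0], weights))
print(side_of_decision_surface([0.5, 1.0, 1.0], weights))
```

The same two functions work unchanged for 20 inputs: only the lengths of the input and weight lists change.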

If we add a layer of hidden neurons, with two input neurons forming a two-dimensional space, then the decision surface is still a line but the line can be curved.

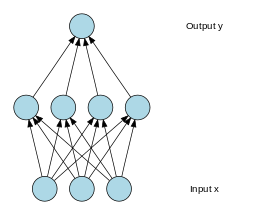

The following figure shows 3 input neurons, 4 “hidden” neurons, and 1 output neuron:

In this figure we have three input neurons on the bottom. Each input neuron is connected to each of the four hidden layer neurons, and each of the four hidden layer neurons is connected to the single output neuron.

This neural model is defined by 16 parameters: the values that are learned for each weight in the neural network. Later when we use TensorFlow to work with deep learning we will use neural networks with many layers and thousands or tens of thousands of parameters. For work I train networks with millions of parameters while companies like Google and Microsoft use networks with billions of parameters for tasks like speech recognition and generating natural language descriptions of input images.
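The count of 16 comes from 3 × 4 = 12 weights between the input and hidden layers plus 4 × 1 = 4 weights between the hidden and output layers. The following sketch builds such a network with random weights and runs one forward pass. It assumes, as the count of 16 implies, that there are no separate bias weights; the helper names and the input values are my own.

```python
import math
import random


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def make_weights(n_from, n_to, rng):
    """One weight for every connection between two fully connected layers."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_to)] for _ in range(n_from)]


def layer_forward(activations, weights):
    """Propagate activations through one layer of weights, then squash."""
    n_to = len(weights[0])
    return [
        sigmoid(sum(activations[i] * weights[i][j] for i in range(len(activations))))
        for j in range(n_to)
    ]


rng = random.Random(0)  # fixed seed so that runs are repeatable
input_to_hidden = make_weights(3, 4, rng)
hidden_to_output = make_weights(4, 1, rng)

parameter_count = sum(len(row) for row in input_to_hidden) + sum(
    len(row) for row in hidden_to_output
)
print(parameter_count)  # 16

hidden = layer_forward([0.2, 0.9, 0.4], input_to_hidden)
output = layer_forward(hidden, hidden_to_output)
print(output)
```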

Symbolic Representation of Facts and Rules (Expert Systems)

Early artificial intelligence (AI) systems used symbolic representations of the world. Symbolic representations refer to using a symbolic label like “car” to represent cars in the real world. We attempt to represent knowledge with these placeholder symbols and instead of reasoning about real world objects and events we reason with the abstract symbol representations of the real world.

As a simple and concrete example, a “person” represents the class of human beings and we might associate attributes like age, weight, and name with instances of this class of entities. In a rule based system, we might define a class “driver” which is a subclass of “person” and a rule that “an instance of class ‘driver’ must have a value greater than or equal to 16 for the attribute ‘age’.” If we have an instance of “person” named Sam and Sam is 14 years old, then this rule could be used to reason that Sam cannot be a driver.
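A rough Python rendering of this example might look like the following. The class, the constant, and the function name are hypothetical, and a real rule based system would express the rule declaratively rather than as an ordinary function.

```python
from dataclasses import dataclass

MINIMUM_DRIVER_AGE = 16  # the age limit stated in the rule above


@dataclass
class Person:
    name: str
    age: int


def can_be_driver(person: Person) -> bool:
    """Rule: a driver must have an age greater than or equal to 16."""
    return person.age >= MINIMUM_DRIVER_AGE


sam = Person(name="Sam", age=14)
print(can_be_driver(sam))  # False
```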

Expert systems are built with manually written rules, a storage system for “facts” in the system, and an interpreter for matching rules with facts in a system, running (or “firing”) rules which can add, delete, or modify facts in the system.
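The following is a minimal sketch of such an interpreter, not a model of OPS5 or any other real system. Facts are stored as (subject, attribute, value) tuples, each rule is a Python function that returns the facts it wants to add, and the interpreter keeps firing rules until nothing new is derived. For brevity the sketch only adds facts; it does not delete or modify them. The second rule (drivers need a license) is invented purely for illustration.

```python
def rule_old_enough_to_drive(facts):
    """If a subject's age is at least 16, assert that they may be a driver."""
    return {
        (subject, "may_be", "driver")
        for subject, attribute, value in facts
        if attribute == "age" and value >= 16
    }


def rule_driver_needs_license(facts):
    """Hypothetical follow-on rule that chains from the first one."""
    return {
        (subject, "needs", "license")
        for subject, attribute, value in facts
        if attribute == "may_be" and value == "driver"
    }


def run_rules(initial_facts, rules):
    """Fire rules repeatedly until no rule produces a new fact."""
    facts = set(initial_facts)
    changed = True
    while changed:
        changed = False
        for rule in rules:
            derived = rule(facts) - facts
            if derived:
                facts |= derived
                changed = True
    return facts


facts = run_rules(
    {("Sam", "age", 14), ("Ann", "age", 30)},
    [rule_old_enough_to_drive, rule_driver_needs_license],
)
for fact in sorted(facts, key=str):
    print(fact)
```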

Historically one of the most important production system interpreters was OPS5, which I used frequently in my work in the 1980s. Another production system is Soar, a cognitive architecture that includes a rule based system.

OPS5 and Soar are not much used now but they have great historic importance in the field of AI. I spent several years in the 1980s working on symbolic expert systems, symbolic knowledge representation schemes like Conceptual dependency theory, and symbolic Natural Language Processing (NLP).

Symbolic Models for Natural Language Processing

Much of the interesting research and practical applications of Natural Language Processing (NLP) are now done using statistical models and deep learning models. We will cover both of these technologies with code examples in some depth in later chapters. Here, for historic reasons, I would like to briefly cover symbolic approaches to NLP.

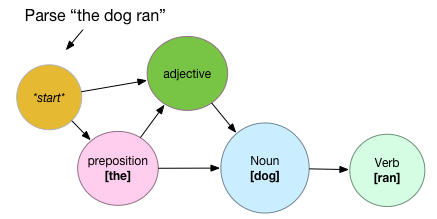

In my work in the 1980s I used Augmented transition networks (ATNs). ATNs were used to parse text using a network where individual nodes can have attached memory to remember things like the subject of a sentence, attributes of nouns used in a sentence, etc. ATNs have the advantage of being fairly simple to write, and the disadvantage that they need to be crafted for limited vocabularies. A lot of work can be involved in trying to extend them to vocabularies for more than simple topics or applications.

![Simple ATN to recognize patterns [preposition -> noun -> verb], [adjective -> noun -> verb], and also [preposition -> adjective -> noun -> verb]](/preview_site_images/python-ai/ATN.png)

In this diagram, the node “preposition” will recognize a word that is a preposition, remove the word from the word sequence, and attempt to pass the remaining part of the word sequence to each node connected with an outgoing arrow. When any node successfully recognizes the last word in the word sequence, then the trail of nodes represents the parse of the sentence. Consider the example sentence “the dog ran”:

Here the ATN assigns the part of speech “preposition” to the word “the” (strictly speaking “the” is an article, but this simple network treats it as a preposition), the part of speech “noun” to “dog,” and the part of speech “verb” to the word “ran.” ATN based systems get very complex when they need to support large vocabularies and they are difficult to maintain.
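The traversal described above can be sketched in a few lines of Python. This is a simplified transition network rather than a full ATN: it omits the memory attached to nodes. The tiny lexicon is hypothetical, it follows the diagram in labeling “the” as a preposition, and it assumes that a parse must end on the “verb” node, as all three patterns in the diagram do.

```python
# Hypothetical lexicon; "the" and "a" are labeled to match the diagram's node names.
LEXICON = {
    "the": "preposition",
    "a": "preposition",
    "big": "adjective",
    "brown": "adjective",
    "dog": "noun",
    "cat": "noun",
    "ran": "verb",
    "slept": "verb",
}

# Outgoing arrows for each node in the network.
ARCS = {
    "preposition": ["noun", "adjective"],
    "adjective": ["noun"],
    "noun": ["verb"],
    "verb": [],
}
START_NODES = ["preposition", "adjective"]
FINAL_NODES = {"verb"}  # assumption: a sentence must end on a verb


def parse(words, nodes=None, trail=()):
    """Return a list of (word, part of speech) pairs, or None if no parse exists."""
    if nodes is None:
        nodes = START_NODES
    if not words:
        return None
    first, rest = words[0], words[1:]
    for node in nodes:
        if LEXICON.get(first) != node:
            continue  # this node does not recognize the word
        new_trail = trail + ((first, node),)
        if not rest:
            if node in FINAL_NODES:
                return list(new_trail)
            continue
        result = parse(rest, ARCS[node], new_trail)
        if result is not None:
            return result
    return None


print(parse("the dog ran".split()))
print(parse("the big dog ran".split()))
print(parse("dog ran".split()))
```

Even in this toy version, every new word has to be added to the lexicon by hand, which hints at why such systems become hard to maintain for large vocabularies.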

Using Deep Learning for Natural Language Processing

TBD

An Overview of Linguistics

Linguistics is the science of human languages. Although our brains are very general purpose “devices” there is ample evidence that the brain is partially pre-wired to learn languages. Different human languages differ substantially in structure and for simplicity we will consider English.

We learn to speak at an early age by interacting with older people. During this process a model of language is formed containing understanding of individual words, words that occur frequently together, and eventually sentence structure, parts of speech, and grammar. Language is so ingrained into how we think that we don’t realize how much we know about language and how it maps to the real physical world and events. Earlier we discussed symbols representing things in the real world, but the difference with the human brain is that there is a distributed representation of things stored in neurons and the connections between neurons. When we study deep learning, we will see how these representations are stored in artificial neural networks.

Linguistics deals with the study of sounds, the syntactic structure of language, the underlying meanings of words (semantics), and the association of language with background world knowledge that we all have (pragmatics).

Computational linguistics is covered in some detail in the next chapter. Here we cover the main ideas of linguistics and define terms that will be useful later. We will use the context of looking at the requirements for a robot that can carry on conversations to take a high level view of the science of linguistics.

A robot needs to:

- Listen to all sounds in a room.

- Separate out sounds that are probably human speech in the environment.

- Separate out just the speech of the person the robot is talking with.

- Perform some form of Fourier analysis to convert frequency information in speech to a power spectral density (think of the volume display on your hifi that shows the volume of bass, mid-range, and higher frequency).

- Recognize sub-word sounds (phonemes) and isolate and recognize individual words.

- Use an understanding of the structure of sentences and statistics of which words tend to occur together to correct errors in word identification.

- Understand the context of the human being conversed with and attempt to match incoming words with commands, requests for information, etc.

- Build a conversation model from input words, real world understanding, and multiple hypotheses of what the human might want.

- Generate speech for the human listener and update the model for what the conversation is about.
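To illustrate the Fourier analysis step in the list above, here is a small sketch that uses only the Python standard library. It computes a naive discrete Fourier transform of a synthetic signal and reports the power in each frequency bin, which is a rough estimate of the power spectrum. The sample rate, the test signal, and the normalization are all assumptions made for illustration; a real system would use a fast Fourier transform from a numerical library and work on short, overlapping windows of audio.

```python
import cmath
import math


def power_spectrum(samples):
    """Power in each frequency bin from 0 up to half the sample rate."""
    n = len(samples)
    spectrum = []
    for k in range(n // 2 + 1):
        coefficient = sum(
            samples[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        spectrum.append(abs(coefficient) ** 2 / n)
    return spectrum


SAMPLE_RATE = 64  # samples per second (hypothetical); one second of signal

# A loud 5 Hz tone mixed with a quieter 12 Hz tone.
signal = [
    math.sin(2 * math.pi * 5 * t / SAMPLE_RATE)
    + 0.5 * math.sin(2 * math.pi * 12 * t / SAMPLE_RATE)
    for t in range(SAMPLE_RATE)
]

spectrum = power_spectrum(signal)
strongest_bin = max(range(len(spectrum)), key=spectrum.__getitem__)
print(strongest_bin)  # with one second of samples, bin k corresponds to k Hz
```

Because the signal is exactly one second long, each bin index equals a frequency in Hz, so the strongest bin is the 5 Hz tone and the next strongest is the 12 Hz tone.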

Phonology

When we listen to speech, our ears process sound waves that are characterized by power (sound level) and frequency. The ear drum, curved passages in the inner ear, cochlea, and cochlear nerve capture this information for specific areas of the brain that process sound.

Sounds are thus transformed to a distributed representation of phonemes (standard building blocks for constructing words) and sequences of phonemes are transformed into a representation of words, then sentences that we hear.

Morphology

Morphology is the construction of words from sub-parts called morphemes. Consider the word “unhappy” that consists of morphemes “un” and “happy.” The meaning of this word depends on understanding what each constituent morpheme contributes to the meaning of the word.
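As a toy illustration, the following sketch splits a word into a prefix, a root, and a suffix using small hand written lists. The lists and the function name are hypothetical, and the approach ignores spelling changes (for example, it cannot relate “happiness” to “happy”), so it should be read as a sketch of the idea rather than a usable morphological analyzer.

```python
# Hypothetical, deliberately tiny lists of morphemes.
PREFIXES = ("un", "re", "dis")
SUFFIXES = ("ness", "ly", "ed", "s")
KNOWN_ROOTS = {"happy", "kind", "play", "quick"}


def split_morphemes(word):
    """Return the morphemes of a word, or the whole word if no split is found."""
    for prefix in ("",) + PREFIXES:
        if not word.startswith(prefix):
            continue
        remainder = word[len(prefix):]
        for suffix in ("",) + SUFFIXES:
            if suffix and not remainder.endswith(suffix):
                continue
            root = remainder[: len(remainder) - len(suffix)] if suffix else remainder
            if root in KNOWN_ROOTS:
                return [part for part in (prefix, root, suffix) if part]
    return [word]


print(split_morphemes("unhappy"))     # ['un', 'happy']
print(split_morphemes("unkindness"))  # ['un', 'kind', 'ness']
print(split_morphemes("table"))       # ['table']
```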

Syntax

Syntax is different for different languages like English, German, Spanish, Chinese, etc. Earlier we looked briefly at an ATN that recognized the syntax for a very small subset of English, namely the ability to recognize the patterns preposition -> noun -> verb and preposition -> adjective -> noun -> verb. When we hear a sentence that does not make sense it might be because we can’t “parse” the syntax or because the meaning (semantics) of the sentence makes no sense to us.

As an example, “car red go” makes no sense to us syntactically, but viewing the sentence as a “bag of words” (BOW) where we don’t care about word order, we get the general idea that a red car is moving.
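A bag of words can be represented as a simple table of word counts. In this short sketch (the function name is my own), two sentences with the same words in a different order produce identical bags:

```python
from collections import Counter


def bag_of_words(sentence):
    """Count each word, ignoring case and word order."""
    return Counter(sentence.lower().split())


scrambled = bag_of_words("car red go")
reordered = bag_of_words("red car go")

print(scrambled)
print(scrambled == reordered)  # True: word order is discarded
```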

As another example, “the tree ran fast” does not make sense semantically because in our mental model of the world a tree does not move except for slow growth and its branches moving in the wind. A tree can only run in a cartoon.

Semantics

Semantics is the meaning in language. Semantics relies on the understanding of syntax and also on our model for the world (pragmatics).

Coupled with semantics is the “grounding problem.” We interpret the color blue through our experiences of seeing a blue sky, blue articles of clothing, etc. An artist thinks more deeply about a color than a typical person. It is an open question whether an AI without a body and the ability to interact with the physical world could ever be a general purpose intelligence.

Pragmatics

Pragmatics is a refinement of semantics: the understanding of language in a specific context. This can involve understanding who is in a conversation and what we know about them, and a model of what they may know or not know, and what topics are being discussed.

Pragmatics is understanding, or at least partially understanding, language.

While cognitive computing deals with very specific tasks like adding a calendar entry from a speech request to your cellphone or recognizing what animals are in a picture, general AI (often called AGI, artificial general intelligence) is the science of combining knowledge of the world, language, knowledge of performing tasks, etc. into integrated systems.

NLP, including pragmatics, is a good step towards development of general AIs.

Linguistics Wrap Up And Practical Applications

We just took an overview of the field of linguistics and we studied how linguistics fits into the study of cognition. Later we will ground the theory seen here with practical examples of processing natural language text. I use the terms Natural Language Processing (NLP) and Computational Linguistics to mean almost the same thing with the difference being that NLP is more a collection of software engineering techniques and practices to process and extract information from text while Computational Linguistics deals more with the science of understanding linguistics and thus to some degree cognition.

We will later look at several practical examples of NLP:

- Using Convolutional Neural Networks for classifying text

- Using the spaCy library for part of speech tagging and entity detection

- Using the OpenNLP library for classifying text

- Using the OpenNLP library for recognizing entity names in text

The Public’s Perception of Artificial Intelligence

I started working in the field of AI in the early 1980s and there were relatively few of us back then. Now various subfields of AI and cognitive computing are some of the fastest growing segments of the tech sector. For the general public there are at least three topics that have attracted attention:

- IBM’s Deep Blue defeating world chess champion Garry Kasparov in 1997

- Google/DeepMind’s AlphaGo defeating Lee Sedol who is one of the strongest Go players in the world in 2016

- AI and automation “taking people’s jobs”

The game of Go is of particular interest to me personally because I wrote the world’s first commercially available Go playing program “Honinbo Warrior” for the Apple II computer. I wrote this program in UCSD Pascal. Around the same time I also had the opportunity to play the national Go champion of South Korea and the women’s world Go champion. I am a big fan of the game and I argue that it is much more complex than chess. AlphaGo’s victory over Lee Sedol in the spring of 2016 was a watershed moment in AI and I watched the games played live on the Internet. I felt that I was living through an important moment in history. Exciting times!

It is almost a certainty that AI computing systems will replace most human workers, and the sociological challenge of dealing with unemployment is probably greater than the technical challenges of accomplishing this. It is currently an open debate whether future AIs will pose a danger to the human race, but in spite of rapid advances in our field, I don’t worry about this problem, at least not yet.