4. Planning Meetings

One of the things I enjoy about my work as an Agile consultant is visiting teams and observing iteration planning meetings. If you’ve never observed another team’s planning meeting, or never seen one at all, you might expect them to be fairly similar. Indeed they are, but, as is so often the case, the devil is in the detail. I am routinely surprised by the ability of teams to interpret, find and invent different ways of doing things in the meeting.

Take, for example, deciding how much work a team can undertake. Some teams simply have the Product Owner propose stories and accept them until they feel they have ‘enough’. Some teams are strict in only accepting stories they are sure they can get done - the Scrum idea of ‘commitment’; other teams will take on more work than they expect to do.

Some teams will use velocity to judge how much they can do. They determine how many points they can do at the start of the planning meeting and then accept stories up to that limit, give or take a bit. Some teams set the upper limit by simply looking at how many points they did last time and rolling that forward, called ‘yesterday’s weather’ in XP. Some teams play planning poker to decide how many points they can do. Some teams think about who’s on holiday, who’s not, who was ill, what got in the way and a million other things.

Seeing these variations is often educational for me and, in return, I sometimes suggest changes to a team’s current practice. However, it does mean that when describing a planning meeting to someone, there are a lot of details that could be different without necessarily being wrong.

Personally I advise teams not to spend a lot of time deciding how many points they can do. I don’t see much point in asking “Who’s in this week” or “Who is out?”. You could add more and more detail without adding any more accuracy. All the team needs is a rough guide to judge how much work it is worth planning in detail.

To keep it simple, I would say just take the average velocity from the last four or five sprints, and ignore holidays, illness and other factors that may have disrupted, or will disrupt, work. Recalculate this average at the start of each iteration, i.e. use a rolling average.
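A minimal Python sketch of that rule of thumb, assuming velocities are stored as a list of points completed per iteration, oldest first. The function name `expected_velocity`, the default window of five and the sample figures are illustrative assumptions, not part of Xanpan. With fewer iterations than the window it simply averages whatever exists, which matches how the estimate firms up over the first few meetings.

```python
def expected_velocity(history: list[float], window: int = 5) -> float:
    """Average points completed over the last `window` iterations.

    `history` holds points completed per iteration, oldest first.
    Holidays, illness and other disruptions are deliberately ignored.
    """
    if not history:
        raise ValueError("no velocity data yet: this is the first meeting")
    recent = history[-window:]  # fewer than `window` items is fine early on
    return sum(recent) / len(recent)

# Hypothetical history; recalculate at the start of each iteration.
velocities = [8, 11, 9, 12, 10, 14]
print(expected_velocity(velocities))  # 11.2 (average of the last five)
```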

Then - and this is both important and slightly controversial - schedule slightly more work than the team expects to do. That is, if the team expects to do 10 points, then schedule 12 or 13. If they expect to do 20, schedule 24 or 25.
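The examples above amount to scheduling roughly 20 to 25 per cent more than the expected velocity. As a sketch, assuming exactly that margin (the percentages are read off the examples, and `scheduling_range` is a hypothetical helper), rounding up to whole points:

```python
import math

def scheduling_range(expected_points: float,
                     low_pct: int = 120, high_pct: int = 125) -> tuple[int, int]:
    """Return (low, high) points to schedule, rounded up to whole points."""
    low = math.ceil(expected_points * low_pct / 100)
    high = math.ceil(expected_points * high_pct / 100)
    return low, high

print(scheduling_range(10))  # (12, 13)
print(scheduling_range(20))  # (24, 25)
```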

The team needs to accept enough work that it doesn’t run out of things to do, but should not accept more than is reasonable to expect. Preparing work that will not get done is wasteful.

What follows is my take on a planning meeting and how it runs. This description matches the A1 Planning Sheet <www.dialoguesheets.com> I have devised to help new teams run planning meetings. This is the core of Xanpan.

After this description I detail some of the activities which might occur outside the planning meeting but which contribute to a smooth running planning meeting.

I will also detail some variations I’ve seen.

4.1 The Players

- The Product Owner: this role is usually played by a product manager, or a business analyst acting as a proxy for the real customer. On occasions the real customer might play the role. Some companies employ subject matter experts who can play the role of Product Owner at times. Sometimes someone else needs to play the role. Whoever plays Product Owner needs to do their homework (see below) and be ready to propose stories, answer questions, prioritise and make decisions as the meeting progresses.

While it is common practice for there to be only one Product Owner, there may be more than one. If more than one Product Owner (for the same product) attends the planning meeting, they should agree priorities and approaches beforehand.

- The Creators: software engineers and testers mainly, although sometimes others, such as user interface designers, are involved. These are the people who will build the things the Product Owner asks for.

- The Facilitator: sometimes there is a dedicated facilitator who is not the Product Owner or a member of the building team. They may be, for example, a project manager, Scrum master or Agile coach.

Some teams are too small to have a dedicated facilitator, so a developer steps into the role - in which case they wear two hats during the meeting. Experienced teams may not need a facilitator, but inexperienced teams who lack a facilitator may find the meetings long and difficult. Whoever plays facilitator should have some experience of planning meetings and facilitation. They should also have respect and authority from the team to play the role.

Usually it is not a good idea for the Product Owner to double up as facilitator, because someone needs to watch for and resolve disputes between the Product Owner and developers. The Product Owner usually has more than enough to do during the planning meeting in any case.

I consider all the people in these roles, and possibly some others as well, to be the development team. The Product Owner and facilitator are, in my book, as much part of the team as the coders and testers.

4.2 The Artefacts

There are several artefacts, or props, which are normally used in a planning meeting. When teams rehearse planning meetings in training courses, I use dice to simulate the work in an iteration. While dice are not used in real-life planning meetings, they do simulate the natural variability that occurs in reality.

- Blue cards: describe bits of functionality that are useful to the business in a language the business representatives understand. These are vertical slices of business functionality that are conceivably deliverable on their own. Often a ‘user story’ format (Cohn 2004) is used and the cards are usually created before the planning meeting.

- White cards: each card describes one task needed in building the thing described on the blue card. White cards are normally written during the planning meeting, and there are usually multiple white task cards for each blue card.

- Red cards: these are bugs and other expedited items. Normally reds are not broken down into tasks, though occasionally they are. What colour you use for tasks related to a red card is up to you: white or red would both be appropriate.

- Planning board: usually a magnetic whiteboard divided into columns. Often these boards are 1.2m by 90cm, but the size is not particularly important and other sizes and types of board may be used. While many teams use electronic tracking systems, I strongly advise teams initially to adopt physical boards and cards, and only progress to electronic systems when they have some physical experience. Even then both systems may be run in parallel.

- Planning poker cards: the description that follows assumes the team is playing planning poker. Not all teams play planning poker; it isn’t compulsory, so feel free to use whatever method works for you. That said, whatever method you use should address some of the points discussed below.

Special sets of planning poker cards are available - these can be surprisingly difficult to buy, but are often freely available from Agile training and consulting companies - just ask! Different planning poker sets have slightly different sequences.

The exact colour of the story and task cards can vary from team to team. Keeping to the colours outlined here does keep things consistent.

Some teams use additional colours to signal other types of work. One team started using yellow cards to signal unplanned work; this Xanpan convention has been described elsewhere. By their nature yellow cards will only appear at a planning meeting when they represent carry-over work.

4.3 The Meeting Sequence

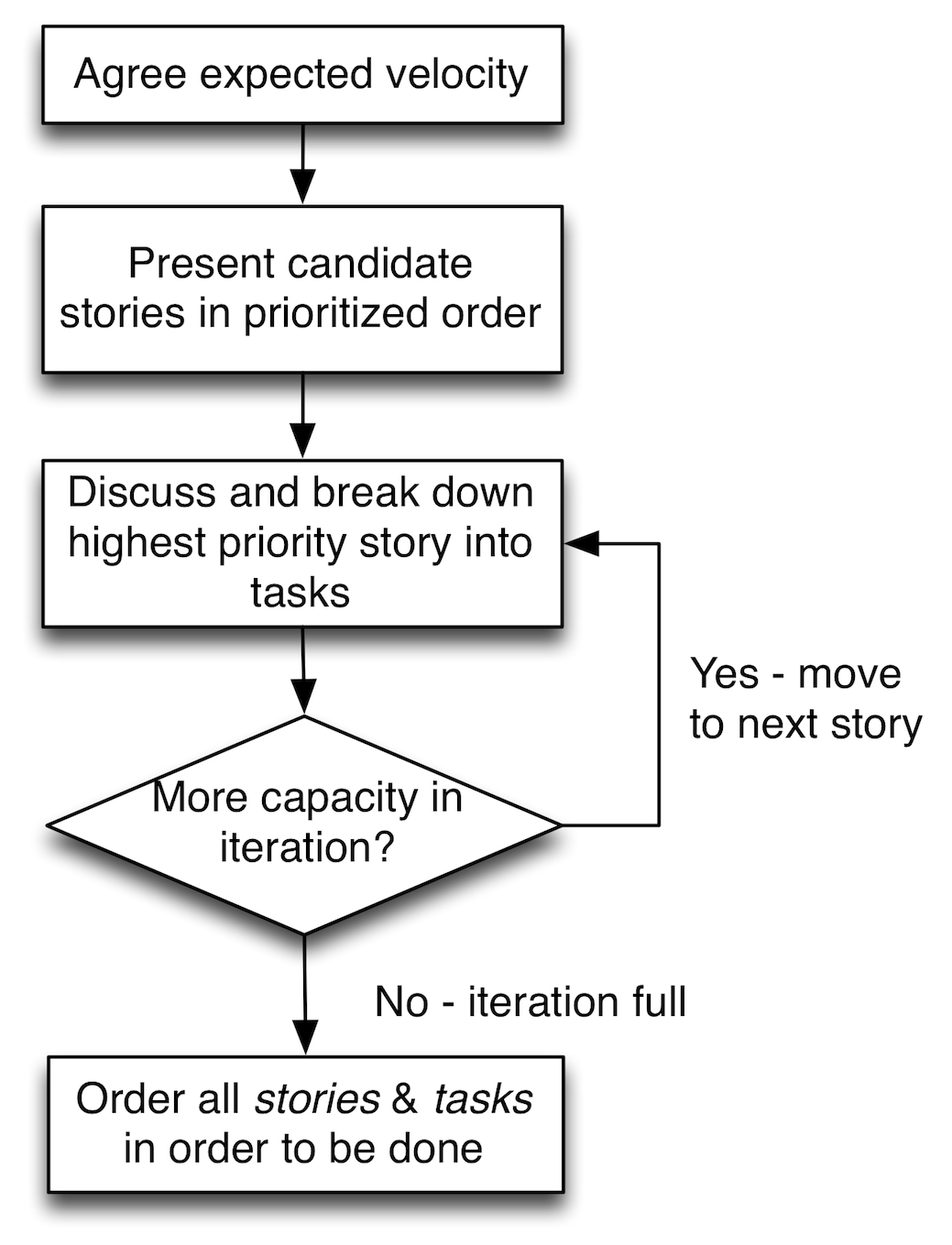

The basic format of the meeting is shown in the accompanying diagram. The team agree how much work they will attempt, the Product Owner presents the work they would like done and the team works through each item in priority order. They discuss each item, break it down to tasks and estimate the tasks.

After each item is done (i.e. discussion and breakdown has ended) team members count up how much work they have accepted and compare this with what they expect to do. If they have spare capacity, the next highest priority item is pulled from the Product Owner and the discussion, breakdown and estimation process repeats.

When the team has accepted work to its capacity, its members review what they have with the Product Owner and agree any changes.
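The loop can be sketched in Python as follows, assuming each blue arrives already broken into estimated whites (in the real meeting, breakdown and estimation happen inside the loop, through discussion and planning poker). The `Blue` type, `plan_iteration` function and backlog contents are hypothetical. In line with the advice later in this chapter, a breakdown that has started is always completed, so the total may end up somewhat over capacity.

```python
from dataclasses import dataclass, field

@dataclass
class Blue:
    title: str
    priority: int                      # 1 is highest; no duplicates
    white_estimates: list[int] = field(default_factory=list)

def plan_iteration(backlog: list[Blue], capacity: int) -> tuple[list[Blue], int]:
    """Pull blues in priority order until accepted points reach capacity."""
    accepted: list[Blue] = []
    total = 0
    for blue in sorted(backlog, key=lambda b: b.priority):
        if total >= capacity:
            break                      # no spare capacity: stop pulling work
        # Discussion, breakdown and estimation happen here; once started,
        # the whole blue is accepted even if it takes the total over capacity.
        accepted.append(blue)
        total += sum(blue.white_estimates)
    return accepted, total

backlog = [
    Blue("Search by postcode", 2, [3, 2, 1]),
    Blue("Login with email", 1, [2, 3]),
    Blue("Export to CSV", 3, [3, 2]),
    Blue("Dark mode", 4, [2]),
]
chosen, points = plan_iteration(backlog, capacity=12)
print([b.title for b in chosen], points)
# ['Login with email', 'Search by postcode', 'Export to CSV'] 16
```

The final step, reviewing the accepted work with the Product Owner and agreeing changes, remains a conversation and is not modelled here.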

First meeting

The first planning meeting a team holds is always the hardest. This is when their experience is least and the unknowns greatest; naturally it will take longer to navigate the meeting. In the worst cases the team’s lack of experience can derail the meeting altogether should it encounter difficulties.

The first meeting is also the most difficult for another reason: the team has no reference points. They have little idea of what they need from a story, how long it will take to do a story, or quite what acceptance criteria should look like. Even if they have practised for these questions during training, doing it in real life will be harder.

Significantly the team will also have no data on how fast it can go - it will only have a vague idea of how big ‘one point’ is or how many points it should accept into an iteration. In my experience teams tend to accept far more work into the first sprint than they will ever get done: they are inherently optimistic.

Second and subsequent meetings

Because the first meeting takes so long and over-plans, the second meeting, two weeks later, tends to be one of the shortest. So much work is carried over in one form or another that the second meeting finds little to plan. After that the meetings start to settle down. The team has two meetings under its belt and two data points.

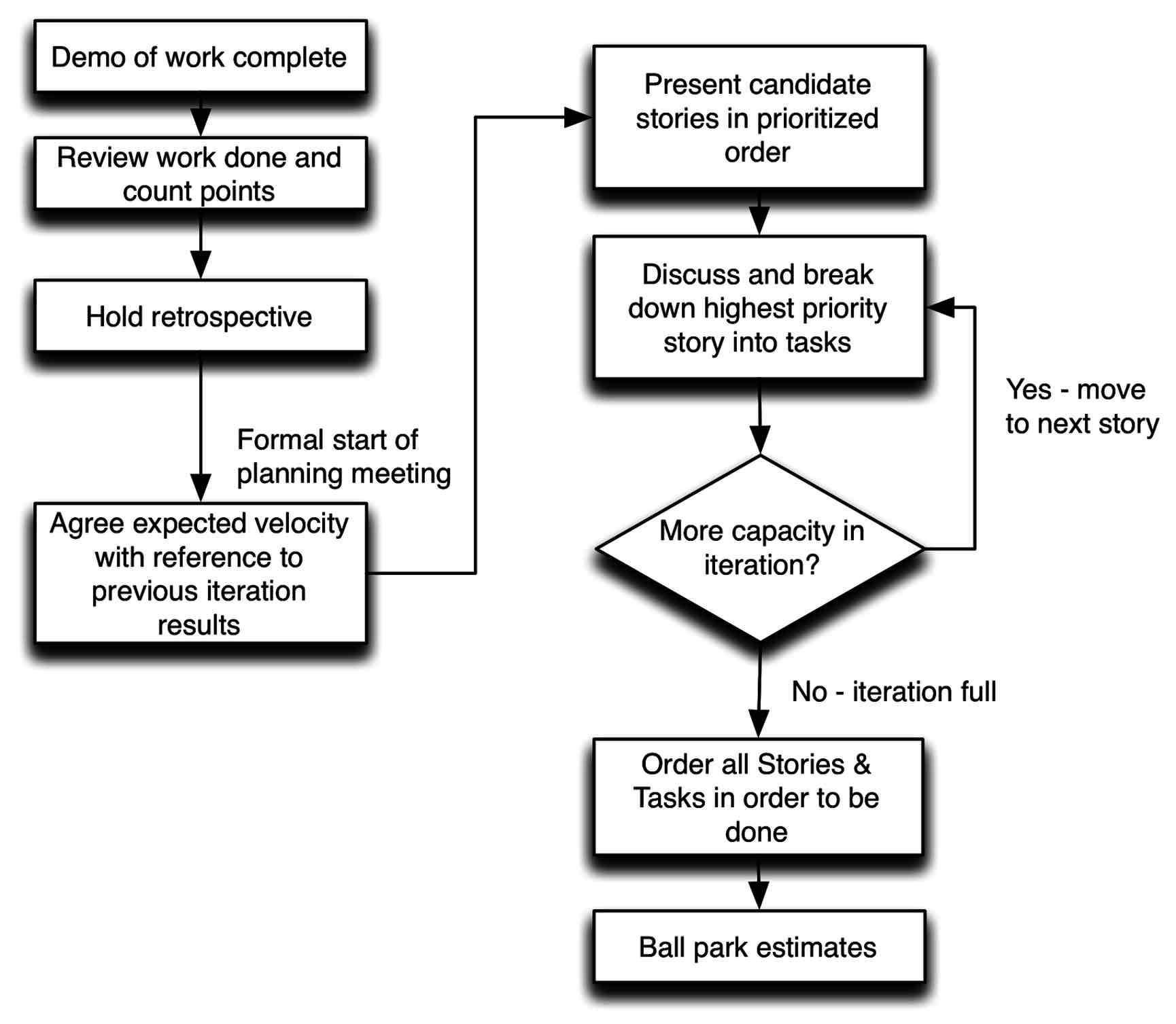

The meeting format also changes after the first meeting. At first the meeting is entirely forward-looking, but subsequent meetings have a backward-looking element. Prior to the start of the meeting, or right at the start, the team will demonstrate the work done in the previous iteration. It will then review the work done, usually by counting the points and updating any charts.

Formally the demonstration, review and retrospective might be separate meetings. But they are likely to be arranged back-to-back, perhaps with a short break between each. So whether one regards them as one long meeting or several shorter meetings is debatable.

When teams delivered only at the end of an iteration, or less often, the demonstration of developed functionality was an essential part of closing one iteration and starting the next. As more teams move to continuous delivery, it is worth questioning whether the demo adds anything if people can already use the actual software.

Teams may also hold a retrospective as part of the iteration end routine, although not all teams hold retrospectives, and even those who do may not hold them at every iteration.

The schedule of the second and subsequent meetings is something like the accompanying diagram.

In the second meeting the team has a rough idea of how many points of work it can accept, because members can sum up the points completed in the previous iteration. In the third meeting it has a better idea because it can average points from two iterations. By the fourth meeting the average is fairly accurate, and reasonable high-water (best case) and low-water (worst case) marks can guide capacity thinking.

These meetings should happen regularly at the end/start of every iteration - typically every two weeks. They can be scheduled in everyone’s calendar and room bookings made months in advance. If you are using an electronic scheduling system (such as Microsoft Outlook) you can set up a recurring meeting with no end date.

4.4 The Planning Game

The game opens with the Product Owner(s) presenting to the team the blue (business facing) stories they wish to have developed. These are presented in absolute priority order - 1, 2, 3, 4 etc. No duplicate priorities are allowed, i.e. there can be only one priority one, one priority two and so on.

If two items are deemed to be of equal priority by the Product Owner (e.g. two cards are both assigned priority three), then the development team is allowed to decide the ordering. If the Product Owner disagrees with the ordering then they have, by their disagreement, determined the ordering. In general it is considered an abdication of responsibility if a Product Owner does not guide the team in prioritisation.
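A small Python sketch of the absolute-priority rule, assuming priorities are plain integers (the function name and card titles are hypothetical). It lists any priority number shared by more than one card, which are exactly the cases where the development team gets to choose the order unless the Product Owner objects.

```python
from collections import Counter

def duplicate_priorities(priorities: dict[str, int]) -> dict[int, list[str]]:
    """Map each priority shared by several cards to those cards' titles."""
    counts = Counter(priorities.values())
    shared: dict[int, list[str]] = {}
    for title, prio in priorities.items():
        if counts[prio] > 1:
            shared.setdefault(prio, []).append(title)
    return shared

cards = {"Login": 1, "Search": 2, "Export": 3, "Reports": 3}
print(duplicate_priorities(cards))  # {3: ['Export', 'Reports']}
```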

Each blue card is broken down by the development team to a set of white technical tasks. The white cards describe the things that need to be done in order to build the blue. One might think of the whites as the building blocks for the blues, or of the whites as verbs and blues as nouns.

Blues are the domain of the Product Owner, whites of the technical team. The breakdown is partly an act of design, partly an act of requirements elaboration, and partly an act designed to produce the smallest practical work items. (I will discuss the breakdown in more detail later.)

Of course sometimes only one white is needed to create one blue. In these cases the white is omitted and the team can work directly at the blue level. In some ways this represents the ideal scenario; however, for this to work, the blue must be no bigger than one white, i.e. if the blue can be broken down to multiple whites, it should be. (Of course there comes a point where further breakdown is excessive, but to start with teams find breakdown difficult. Once they have mastered it, they might consider whether some tasks are too small.)

The breakdown is a cooperative process between the development team and Product Owner - both should be present. There should be conversation between both sides: developers should ask about the requirements in detail, Product Owners may promote white cards to blue cards if they think the task itself has business value, and remove technical tasks if they want - even against the advice of developers - although in general the two sides should strive for consensus.

Giving Product Owners the ability to promote whites to blues can be a useful tool in extracting business value. It also gives the Product Owner the power to override much of the technical team’s advice. On occasion this may be useful, but it may also indicate problems. For example, a Product Owner who frequently finds whites to promote may not be devoting the time and attention to preparing small blues in advance. Or it may be a sign that they do not trust the technical team and are attempting to micro-manage the work.

When white task cards have been broken out, those who will be responsible for undertaking the work - i.e. the development team, all developers and testers, but not the Product Owner - estimate the work in terms of abstract points using Planning Poker (see the discussion below).

Teams track velocity from iteration to iteration. Unlike in financial services, past performance is considered a good indicator of future performance, or at least of the next iteration. I normally recommend using a rolling average across the last four or five iterations to judge upcoming capacity.

Once breakdown of a blue has started, it makes sense to see it through to the end. Even if part way through the team realise they cannot fit the tasks into this iteration, it probably doesn’t make sense to stop mid-breakdown. This might result in the team accepting more work than it planned to, but this isn’t a problem, as there is no commitment.

Of course once it becomes clear that a blue will not be completed in the iteration, the Product Owner could drop the blue and suggest a substitute. There is no hard and fast rule here. It makes little sense to plan only the first few tasks for a blue, because the order in which tasks are done does not necessarily correspond to the order in which they were identified and written on whites.

The team may or may not achieve all the scheduled work; they may perform below or above expected velocity in any given iteration. If the team do more than expected, then the work is available, and over time the rolling average used to calculate the expected velocity will rise. Conversely, if the team find the iteration harder than expected, then less will be achieved and, after a couple of iterations, the rolling average will fall.

If a particular task or feature must be achieved within the iteration, it should be scheduled first and kept within the minimum recent velocity, i.e. the low-water mark. This does not guarantee that the work will be done, but it makes completion very likely. Teams are advised to track the time it takes for cards to traverse the tracking board and develop statistically reliable averages and deviations to replace the Planning Poker estimation process.
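Both suggestions can be sketched in a few lines of Python, assuming velocities are kept per iteration and cycle times are recorded in working days per card. The function names and sample figures are hypothetical; `statistics.mean` and `statistics.stdev` come from Python's standard library (`stdev` needs at least two data points).

```python
import statistics

def must_haves_fit(must_have_points: int, recent_velocities: list[int]) -> bool:
    """True if must-have work fits within the low-water mark."""
    return must_have_points <= min(recent_velocities)

def cycle_time_summary(days_on_board: list[float]) -> tuple[float, float]:
    """Mean and sample standard deviation of card cycle times."""
    return statistics.mean(days_on_board), statistics.stdev(days_on_board)

print(must_haves_fit(7, [9, 10, 16, 8]))   # True: 7 <= 8
mean, sd = cycle_time_summary([2, 3, 3, 4, 5, 3, 2])
print(f"mean {mean:.1f} days, sd {sd:.1f} days")
```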

Testing

Different teams handle testing in different ways. Some teams have professional testers and formal test processes, while some teams have neither. The level of automated testing also varies widely between teams.

White cards are generally not testable by professional testers. They should be tested by developers using automated unit tests and other tools to ensure they are acceptable. If there is something a tester could test, they may well be involved.

Generally professional testers work at the blue card level. In work breakdown a team might write a white task card to test a blue. This card would only be done once all the other whites were done and the whole blue was ready to test.

However, testers may prefer to write two task cards: one to write the test script and one to execute the test. If the former is fully automated, the latter need not exist, or will happen automatically.

Other teams forgo tasks associated with testing and instead model tests via their task board. As cards move across the board they will need to pass through test columns where the testing will happen. Thus completion of all the white cards associated with a blue would trigger moving the blue into a testing column, where testing would commence.

Trivia and Spikes

Truly trivial tasks, or work to be undertaken by people outside the team, may be assigned zero points. Such zero-point cards represent work that the team needs to keep track of but which does not represent noticeable work for the team. For example, a ‘buy domain name’ task would probably merit a zero-point score, as it would take about 10 minutes. But a task of ‘Obtain quote for domain name, seek approval to spend money, buy domain name, file expenses claim’ may warrant an estimate larger than zero.

Spike cards are written when the development team feel they do not have enough knowledge - usually technical knowledge - to begin a breakdown and estimate. Here a spike card will be written to attempt the work, but once the work is done it will be thrown away, spiked.

The objective of a spike card is to gain an understanding of what needs to be done. Typically the output from a spike will be a set of (white) cards describing the tasks which need to be done. Ideally these cards will be held until the next planning meeting, where they can be discussed, estimated and scheduled, or deferred.

Sometimes when time is pressing the resulting cards might be estimated and scheduled into the iteration immediately. While this is entirely practical, it does mean forecasts for what the iteration will produce are difficult or impossible.

Spikes are not estimated in the same way that other cards are estimated - rather they are hard time-boxed: an amount of time is decided on and written on the card. This time, no more, no less, is the time allowed for this card. When the time is up research work must stop and the task cards must be written using the knowledge gained.

Working in time - as opposed to points - for spikes makes velocity calculations more difficult. Some approximate, rule-of-thumb, back-of-the-envelope calculations need to be done in order to apportion a number of points to a time-boxed spike card.
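One such rule of thumb, sketched in Python under the assumption that the team's available hours per iteration and its average velocity are both known: divide the team's hours by its velocity to get hours per point, then divide the spike's time-box by that. The function name and figures are hypothetical, and the result is only as good as the averages behind it.

```python
def spike_points(timebox_hours: float, team_hours: float,
                 average_velocity: float) -> int:
    """Approximate points for a time-boxed spike, rounded to a whole point."""
    hours_per_point = team_hours / average_velocity
    return round(timebox_hours / hours_per_point)

# A 40-hour spike for a team with 200 hours and an average velocity of 10
# (20 hours per point).
print(spike_points(40, 200, 10))  # 2
```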

Counting ‘Done’

In the review part of the meeting the work completed in the previous iteration is removed from the board and reviewed. The main part of this review is simply counting the points done and updating any charts or other tracking systems. The review may also take time to examine any cards left on the board and decide whether they should be left as carry-over.

Teams are encouraged to adopt a definition of ‘done’ to help with defining what is done and what is not done. The definition of ‘done’ is a short checklist of things the team agree will be done for every card they claim is ‘done’. This checklist applies to all cards in addition to any acceptance criteria placed on blues.

I normally recommend a definition of ‘done’ for white task cards, although some teams also apply it to blues. Occasionally teams feel the need for one definition for blues and another for whites.

The estimated points on completed whites are counted as part of the team’s iteration velocity. Only 100%-completed whites are counted and the estimate is counted as-is, even if people feel it does not reflect the actual work. For example, if a card is estimated at five points it is counted as five points, regardless of whether people feel it was actually closer to one or 10.
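Counting a white only when it is fully done, at its original estimate, can be sketched in Python like this. The `White` type and board contents are hypothetical; "done" is assumed to mean the card meets the team's definition of ‘done’.

```python
from dataclasses import dataclass

@dataclass
class White:
    task: str
    estimate: int      # points agreed at planning; never revised
    done: bool         # meets the definition of 'done'

def iteration_velocity(whites: list[White]) -> int:
    """Sum the estimates of fully completed whites; partial work scores zero."""
    return sum(w.estimate for w in whites if w.done)

board = [
    White("Add postcode field", 2, True),
    White("Validate postcode", 5, True),   # felt like 1 point; still counts 5
    White("Geocode lookup", 3, False),     # '95% done' counts nothing
]
print(iteration_velocity(board))  # 7
```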

No attempt is made to capture or work with ‘actual’ time or points. Human perception of ‘how long things take’ is subjective, and Vierordt’s Law suggests we underestimate retrospectively as well as prospectively. The historical velocity data provides the necessary feedback on how long things took. By allowing abstract points to ‘float’, the system becomes self-adjusting.

Anyone who has worked with software teams for more than a few years will have seen the ‘80% done’ scenario in which a piece of work remains 80% done for 80% of the time allowed. Therefore no matter how much a developer claims that “It is 95% done”, incomplete cards are not counted.

In software development we have no way of telling objectively what is 95% done and what is 9% done. We have no way of knowing if an unexpected problem lurks in the final 5%, or if someone will fall ill before the day is out.

This rule also sets up a small incentive for people to complete work before the end of iteration review and thus score more points. This adds a little extra motivation.

When an average velocity is used it becomes unimportant - for capacity measurement - exactly for which iteration the points are scored. Suppose a 10-point card is genuinely 90% done in iteration 13, but is not counted. It is then completed early in iteration 14, and thus counted in that iteration. While the variability of velocity will increase iteration-to-iteration, the effect of averaging over several sprints will still allow reasonable planning. The aim of estimation and forecasting is not to be precise about any single item, but to be generally accurate.
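A quick numeric illustration in Python, using hypothetical velocities for iterations 11 to 14. If the 10-point card slips from iteration 13 into iteration 14, those two individual figures swing by 10 points each, but any averaging window that spans both iterations is unchanged.

```python
def window_average(velocities: list[int]) -> float:
    return sum(velocities) / len(velocities)

# Iterations 11 to 14; only the slipped card differs between the two lists.
on_time = [11, 12, 13, 10]    # card counted in iteration 13
slipped = [11, 12, 3, 20]     # card counted in iteration 14 instead
print(window_average(on_time), window_average(slipped))  # 11.5 11.5
```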

I just don’t believe that adjusting for ‘actuals’ on a card-by-card basis, or allowing some points from partially done cards to be counted, is a productive use of time. Both are subjective measures that invite discussion and disagreement - both time-consuming activities.

Some see it as odd that I allow whites to be counted even when blues are not. “But the business functionality is incomplete”, they say, “and it’s the business need that counts”. This is reasonable - and certainly follows the rules of Scrum - but I find it leads to less predictability in the process and less even flow.

Counting whites as they are completed smoothes the flow. Only counting blues will lead to more variability, higher peaks and deeper troughs. While this complicates planning, it does not undermine it. One team I know of only counted points when blues were done, and they still delivered on time.

As before, the use of averages means that if a team decides to count only points from completed blues rather than whites, the velocity benchmarking system will still work. The drawback is that velocity might take slightly longer to settle into a stable capacity benchmark.

4.5 Velocity and currency

Velocity is a measure of how fast a team is working, or rather, how much work it is getting through. It is calculated by counting the points a team scored (i.e. completed) in the previous iteration.

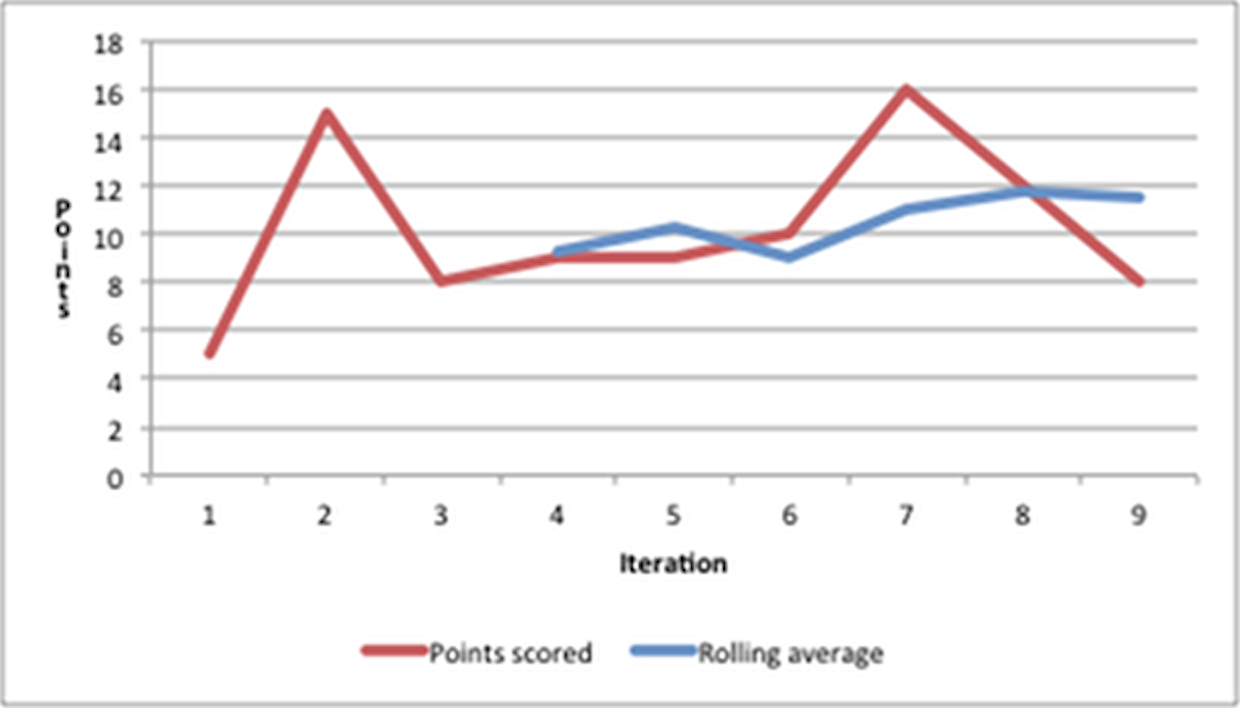

Over a series of several iterations, say four, a team should be able to come up with a rolling average, a high- and a low-water mark which can be used for planning purposes. For example, consider the team shown in this graph:

This team scored 5, 15, 8, 9, 9, 10, 16, 12 and 8 points in the nine iterations shown here. At the end of iteration four the team could calculate an average of the last four iterations; this could be rolled forward at the end of each iteration, giving rolling averages of 9.25, 10.25, 9, 11, 11.75 and 11.5.
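The rolling averages quoted above can be checked with a short Python sketch. `rolling_averages` is a hypothetical helper and the input is the nine scores from the graph; it also derives the high- and low-water marks of the last four iterations used in the advice that follows.

```python
def rolling_averages(velocities: list[int], window: int = 4) -> list[float]:
    """Average of each run of `window` consecutive iterations."""
    return [sum(velocities[i - window:i]) / window
            for i in range(window, len(velocities) + 1)]

scored = [5, 15, 8, 9, 9, 10, 16, 12, 8]
print(rolling_averages(scored))  # [9.25, 10.25, 9.0, 11.0, 11.75, 11.5]

last_four = scored[-4:]
print(max(last_four), min(last_four))  # 16 8 (high- and low-water marks)
```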

I would advise the team to plan for 12 points of work (because their recent average is 11.5) but accept 16 or 17 points’ worth - up to their recent high-water mark. Those in the planning meeting will be aware of the possible outcomes, but for those outside the meeting there needs to be some management of expectations.

It is pretty much certain the team will achieve the first 8 points’ worth of work. The team might get points 9 to 12 done, or they might not. If they are very lucky they will do points 13 to 16.
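The same reasoning can be expressed as a Python sketch that labels each scheduled point by how likely it is to be reached, assuming the low-water mark, rolling average and high-water mark from the example (8, 11.5 and 16). The labels and function name are illustrative only.

```python
import math

def point_outlook(point: int, low: int, average: float, high: int) -> str:
    """Describe how likely the team is to reach the nth scheduled point."""
    if point <= low:
        return "near certain"
    if point <= math.ceil(average):
        return "possible"
    if point <= high:
        return "only if very lucky"
    return "beyond recent best"

for p in (8, 9, 12, 13, 16, 17):
    print(p, point_outlook(p, low=8, average=11.5, high=16))
```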

If someone needs to know how much time the team spent on a particular task, then it is simply a question of maths. Assume there are five developers employed for 40 hours a week in the previous example. That is 200 hours of work, producing on average 11.5 points of work, so each point took on average about 17.4 hours; thus a two-point card took about 35 hours.

I would prefer not to make this calculation too well known, because once it enters general knowledge it will undermine the points system. I would also prefer that this calculation be regularly updated since, as the rolling averages above show, the average changes.
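The hours-per-point arithmetic can be sketched in Python, assuming the 200 team hours from the example and the latest rolling average of 11.5 points; the helper name is hypothetical. Because the average moves, the result should be recalculated whenever the rolling average is.

```python
def hours_per_point(team_hours: float, average_velocity: float) -> float:
    return team_hours / average_velocity

team_hours = 5 * 40          # five developers, 40 hours each
hpp = hours_per_point(team_hours, 11.5)
print(f"{hpp:.1f} hours per point")                 # 17.4 hours per point
print(f"{2 * hpp:.0f} hours for a two-point card")  # 35 hours for a two-point card
```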

It is vital to note that points float. Like the US Dollar, Euro or Pound Sterling, points are a currency and change their value over time. Each team has its own currency that is not directly transferable to another team.

As with currencies and other economic indicators, setting targets for velocity can create problems. Goodhart’s Law applies: if a team tries to target a certain number of points, it will meet its goal, but may not do any more work. Such teams exhibit inflation in estimates: exactly as with financial inflation, the numbers are bigger but the value less. (More about Goodhart’s Law later, or see http://en.wikipedia.org/wiki/Goodharts_law).

Carry-over work

For a strict Scrum team there is no issue of work carry-over, because teams only commit to work they guarantee will be done, and thus all work committed to is done. While many Scrum teams find carrying work over from sprint-to-sprint an anathema, I advise teams to carry over work. Indeed, carrying over work to improve flow is a central feature of Xanpan.

For Xanpan teams work carry-over is a fact of life. As part of the review process preceding the planning meeting the team should look at the work remaining on the board from the closing iteration and decide which, if any, work will be carried over to the next iteration.

When work has not started on a blue and associated whites, the Product Owner may decide to pull the card completely or roll the whole thing over. Assuming they roll it over, it will need to be prioritised against the new blues being added. That is to say, just because a blue is rolled over does not give it special priority.

When some tasks associated with a blue have been done and some tasks have not the situation is more complicated. While the Product Owner may still pull the blue or assign it a low priority, it probably makes more sense to finish work that has been started, and finish it soon, rather than leaving it in a partially done state.

There may also be engineering reasons why the blue should be taken to completion before anything else. For example, some of the new blues may involve the same areas of code.

In a few cases work is incomplete because more tasks came to light after it began. While I do not allow teams to change estimates on whites once they are accepted into an iteration, the team may write new whites for additional work which emerged. They may even estimate and begin work on these whites during the iteration if need be. However, I prefer it if new work can be held until the planning meeting, where it can be discussed, prioritised and scheduled by the team.

Obviously this approach raises the possibility of never-ending work, blues that are never done. Senior team members need to be watchful for this and work to diagnose the underlying issues causing it.

How long is a planning meeting?

I would expect a well-practised team to complete a planning meeting in half a day; my preference is for afternoon meetings. Obviously there is some variability, depending on how big the team is, how much work is being planned and whether the team is carrying over any work, but half a day should be enough.

The exception is the first meeting, which will frequently take much longer, perhaps as much as a day. Meetings can also stretch out when Product Owners are poorly prepared for the meeting or take issue with estimates. Design questions can also derail meetings, but on the whole most design issues can be followed up later.

A team holding a retrospective before the meeting should allow 60 to 90 minutes, depending on the techniques and exercises being used. I find a dialogue sheet retrospective takes 60 minutes for the sheet, plus up to 30 minutes for post-sheet discussion and action items.

4.6 Product Owner Preparations (Homework)

One of the recurring reasons I see for planning meetings not going smoothly is a lack of preparation on the part of the Product Owner. The planning meeting is not the place for the Product Owner to decide what is required. Although they may make trade-offs and substitutions during the meeting, they need to go into the meeting knowing very clearly what they want to ask for. This does not mean everything they want will be accepted and scheduled, but they should be prepared.

The Product Owner needs to be on top of their brief: they need to be able to answer developer questions and clarify what is being asked for. If they cannot, they need to either bring someone who can, or be prepared to make changes to what they want. Thus, if the testers and developers ask questions about issues the Product Owner cannot answer immediately, they might bring another story into play while they find out the answers. This may mean that the first story is postponed until the following iteration - or even later - or it may be possible to schedule the difficult story later in the iteration.

As you might guess from this, it helps if the Product Owner is not only prepared for the iteration they are planning now, but also has a rough idea of what they plan to ask for in future iterations. These plans shouldn’t be too detailed - because things change both in priority and detail - but the Product Owner needs to have some ideas.

Medium-term plans, about the next few iterations, were traditionally called release plans. I believe the name quarterly plans both better describes the plan and moves away from the association with releases. (This is discussed in a later section, Planning Beyond the Iteration.)

It is also critical that the Product Owner has authority from the organisation and team to make decisions during the planning meeting: on priorities, on changes to priorities, on details of features and on trade-offs. Nothing is more disruptive - and morale-sapping - than completing a planning meeting one day only to discover a day or two later that somebody else has overruled the Product Owner and has changed what was agreed in the meeting.

There is sometimes a need to have more than one Product Owner in the planning meeting. When this happens all Product Owners concerned need to be in agreement about what is going to be asked for and what the priorities are, and be prepared for problems. The Product Owners may benefit from having their own small meeting prior to the full planning meeting.

An Iteration Planning Sheet is available to accompany this description. Visit http://www.softwarestrategy.co.uk/dlgsheets/planningsheet.html to download a sheet or to buy a printed sheet.

4.7 References

Cohn, M. 2004. User Stories Applied. Addison-Wesley.