7 Application Security Industry

This section has the following chapters:

- Secure coding (and Application Security) must be invisible to developers

- Blogger in HTTP only What Happened to HTTPS

- CI is the Key for Application Security SDL integration

- Etsy.com - A case study on how to do security right

- Open question to Etsy security team - How can OWASP help

- FLOSSHack TeamMentor and the sausage making process that is software application development

- I never liked the term Rugged Software what about Robust Resilient Software

- Is there a spreadsheet template for Mapping WebServices Authorization Rules

- The next level App Security Social Graph

- Trustworthy Internet Movement and SSL Pulse

- Where to have AppSec Q n A threads (what about Reddit)

- Is the TeamMentor OWASP Library content released under an open License

- Reaching out to Developers, Aspect is doing it right with Contrast

- My comments on the SATEC document (Static Analysis Tool Evaluation Criteria)

7.1 Secure coding (and Application Security) must be invisible to developers

At OWASP a while back we came up with the idea that _‘…Our [OWASP] mission is to make application security visible…’_ and for a while I used to believe that if only everybody had full visibility into ‘Application Security’ then we would solve the problem.

But after a while I started to realize that what we need to create for developers is for ‘Application Security’ / ‘Secure Coding’ to be INVISIBLE 99% of the time. It is only the decision makers (namely the buyers) that need visibility into an application’s security state.

We will never get secure applications at a large scale if we require ALL developers (or even most) to be experts in security domains like Crypto, Authentication, Authorization, Input validation/sanitization, etc…

Note that I didn’t say that NOBODY should be responsible for an Application’s security. Of course there needs to be a small subset of the players involved who really care about and understand the security implications of what is being created (we can call these the security champions).

**The core idea is that developers should be using Frameworks, APIs and Languages that allow them to create secure applications by design** (where security is there but is invisible to developers).

And when they **(the developers or architects)** create a security vulnerability, at that moment **(and only then), they should have visibility into what they created (i.e. the side effects) and be shown alternative ways to do the same thing in a secure way.**

This is how we can scale, which is why it is critical that OWASP (and anybody who cares about solving the application security problem) focuses on improving our Frameworks’ ability to create secure apps.

One key problem that we still have today (April 2012), which is preventing the mass ‘invisibilitycation of security’ at Framework level, is that we are still missing Security-focused SAST/Static-Analysis rules.

**How we fixed Buffer Overflows**

A very good and successful example of making security ‘invisible’ for developers was the removal of ‘buffer overflows’ in the move from C/C++ to .NET/Java (i.e. from unmanaged to managed code).

Do .NET/Java developers care about overflowing their buffers when handling strings? No, since that is handled by the Framework :)

THAT is how we make security (in this case Buffer Overflow protection) invisible to developers.
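As a minimal illustration of that point (using Python as a stand-in for any managed runtime, which is my assumption here since the text talks about .NET/Java, and with `write_at` being a hypothetical helper), an out-of-bounds write is stopped by the runtime instead of silently overwriting adjacent memory:

```python
def write_at(buffer: bytearray, index: int, value: int) -> bool:
    """Try to write one byte; the runtime enforces the bounds check for us."""
    try:
        buffer[index] = value
        return True
    except IndexError:
        # In unmanaged C/C++ this write could have overflowed the buffer.
        return False


buffer = bytearray(8)
in_bounds = write_at(buffer, 3, 0x41)       # inside the 8-byte buffer
out_of_bounds = write_at(buffer, 64, 0x41)  # far past the end of the buffer
```

The developer never wrote a bounds check; the protection is there, but invisible until something goes wrong.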

**The Cooking Analogy**

If you are looking for an analogy, “a chef cooking food” is probably the best one.

Think of software developers that are cooking with a number of ingredients (i.e. APIs).

Do you really expect that chef to be an expert on how ALL those ingredients (and tools he is using) were created and behave?

It is impossible, the chef is focused on creating a meal!!!

Fortunately the chef can be confident that some/all of his ingredients+tools will behave in a consistent and well documented way (which is something we don’t have in the software world).

I like the food analogy because, as with software, one bad ingredient is all it takes to ruin it.

Related Posts:

- “Making Security Invisible by Becoming the Developer’s Best Friends” presentation

- Security evolution into Engineering Productivity

7.2 Blogger in HTTP only? What happened to HTTPS?

Now that I’m blogging more, I’m finding the need to blog from insecure locations (like a coffee shop or conference).

But unfortunately it doesn’t seem to be possible to use SSL with Blogger? WTF! in 2012?

After this 2009 letter Google moved some of its web apps to SSL (see Google’s answer at HTTPS security for web applications) but blogger seems to have been missed!

At the moment there doesn’t seem to be a way to write a blog post (like this one) without risking my sessionID being compromised. Am I missing something obvious?

Here is a thread, Can I use an HTTPS connection for editing and posting on Blogger? (which points to a non-existing thread), that implies that Google doesn’t do this due to performance issues.

Also annoying is the fact that https://diniscruz.blogspot.co.uk/ doesn’t work! So how can I know that this blog’s content is read as it was written (i.e. without its content being tampered with)?

On the topic of OWASP, note how there is no mention of it in the letter. Yes, this letter is from 2009, but if it was written today, would OWASP be there? (this is what I’m now calling OWASP MIA (Missing In Action))

On that topic, why don’t we write another letter to Google asking for them to extend their security efforts into blogger!

Also, if Google doesn’t care about this and gives us no solution, what other options do we have? What about a ‘cloud’ service that gives me secure access to this blog?

7.3 CI is the Key for Application Security SDL integration

The more time I spend with CI (namely with TeamCity) the more my instinct is saying _‘this is how we should be delivering and automating security knowledge!’_.

CI environments (namely their scheduling capabilities) could be used to:

- Create scannable artifacts (i.e. projects, dlls, jars, etc…) for SAST engines

- Create ‘live versions’ of the target site (in a clean and pre-populated-with-data state) for DAST engines (and pentest activities)

- Automatically run SAST engines (like cat.net for example)

- Run Unit-tests with further analysis

- Trigger security actions (based on events like Git commits)

- Trigger ‘consolidation’ analysis (for example of results from multiple tools) and publish the results into other SDL tools (namely bug tracking systems)

- Modify source-code (to automatically inject security guidance and fixes) – see Fixing/Encoding .NET code in real time (in this case Response.Write) for a cool PoC

- Inject security guidance into the application (maybe even exposing developers to it in the source code :) )

- Create and send reports to multiple stakeholders

- Be the receiving end of security reports

It’s the ability to create schedules and triggerable actions that is really getting me excited :)
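To make the ‘consolidation’ step from the list above a bit more concrete, here is a rough sketch of what a CI-triggered job could do with the output of several tools. Everything in it is an assumption for illustration: the `Finding` structure, the tool names and the file names are hypothetical, and a real job would parse each tool’s own report format and push the result into a bug tracker.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str      # hypothetical tool name, e.g. "sast-a"
    file: str
    line: int
    category: str  # e.g. "XSS"


def consolidate(*tool_results):
    """Merge findings from several tools, keyed by (file, line, category)."""
    merged = {}
    for results in tool_results:
        for finding in results:
            key = (finding.file, finding.line, finding.category)
            merged.setdefault(key, set()).add(finding.tool)
    # One consolidated issue per location, listing every tool that reported it.
    return [
        {"file": f, "line": l, "category": c, "reported_by": sorted(tools)}
        for (f, l, c), tools in sorted(merged.items())
    ]


# Hard-coded sample data standing in for parsed SAST and DAST reports.
sast = [Finding("sast-a", "Login.aspx.cs", 42, "XSS")]
dast = [
    Finding("dast-b", "Login.aspx.cs", 42, "XSS"),
    Finding("dast-b", "Search.aspx.cs", 10, "SQLi"),
]
issues = consolidate(sast, dast)
```

Here the two tools agree on one issue, so only two consolidated issues would be published instead of three raw findings.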

So maybe what we (app security teams) should be doing is to start our engagements by setting up a CI environment (which would be integrated with the client’s CI environment if they had one).

This also goes to the core of the idea that “If we want to fix Security …. we have to fix Development”

In a way, that is why I was so interested in the idea of integrating IBM’s AppScan products with their Rational Jazz tools (which have a number of CI/Collaboration capabilities). In fact that is exactly what I described in my IBM AppScan 2011 post.

Isn’t it amazing that IBM and HP have all the tools needed to create a really powerful (and effective) security remediation ecosystem, but just can’t do it for cultural and political reasons?

And btw, OWASP is also completely MIA in the CI field

7.4 Etsy.com - A case study on how to do security right?

First, a quick disclaimer: as far as I can recall, I don’t know anybody at Etsy.com, nor have I had any conversations with them in the past.

Following on from Nick’s presentation (see Amazing presentation on integrating security into the SDL), my look into Etsy’s Code as Craft blog and my experiment with Graphite (see Measure Anything, Measure Everything, AppSensor and Simple Graphite Hosting), I have to say that I have been more and more impressed with Etsy’s pragmatic and focused approach to application security.

For example check these out:

- Scaling User Security (where they described their experience in ‘Rolling out Full Site SSL’ and ‘Two factor authentication’)

- Announcing the Etsy Security Bug Bounty Program

- Couple more posts tagged as ‘security’: http://codeascraft.etsy.com/category/security/

- Etsy has been one of the best companies I’ve reported holes to. (reddit thread)

- Effective approaches to web application security (haven’t read it but it looks like another real ‘must see’ presentation)

This is ‘real-world’ stuff and it’s what happens when there is good awareness of the importance of, and need for, doing security.

As you can see, here is a team (from management to engineering) that ‘gets’ application security, and these are the guys that should be driving a number of OWASP’s initiatives, since they represent the ‘real-world’. Please correct me if I’m wrong, but a Google and OWASP search (for ‘OWASP Etsy’) didn’t show a lot of joint activity (the best ones were Nick’s participation in AppSec USA and this job post mentioning the OWASP Top 10). It would be great to see Etsy’s guys pushing projects like: AppSensor, ESAPI, ZAP, Testing+Developer+Code-Review guides, O2, Exams/Certification, etc…

We (OWASP) need to find ways to get these guys more involved and put them in the driving seat.

In fact, for the next OWASP Summit, we have to make sure these guys are there, working collaboratively with the best minds in Application Security :)

7.5 Open question to Etsy security team: How can OWASP help?

Since I don’t have a direct contact at Etsy’s security team (apart from security-reports@etsy.com), here is the question I would like to ask them (which hopefully will reach the right person).

Dear Etsy security team,

How can OWASP help?

By OWASP, I mean the OWASP Community (its projects, chapters, people, ideas, activities, energy).

From the information posted on your website and presented at conferences, you really take security seriously.

You have been able to create a productive environment where secure code ‘happens’, and more importantly, there is a productive and pragmatic relationship between you (the security team), your developers and your management.

So, assuming that you still have a couple things you would like to do better, is there a way (or place, or activity) where OWASP’s community can help?

- Maybe it is in creating better documentation or education materials for your developers/testers?

- Maybe it’s an improved schema for AppSensor that would allow your multiple teams to create even better data (or metadata) for your amazing graphs?

- Maybe it is a special Summit on a topic that you care about? (see the amazing talent that we were able to gather in our last one)

- Maybe it is better SAST or DAST rules for your tools?

- Maybe it is better technical (and security focused) information on how Frameworks work and their security implications? (which will help with code reviews and code standards)

- Maybe it’s a working group on CSP (Content Security Policy) to share best-practices and ideas on how to implement it? (with the key players from the browser vendors participating)

- Maybe it is creating a series of events (or even a tour) around OWASP chapters and conferences where you can present your latest ideas and challenges? (the format is up to your imagination and availability)

- Maybe it’s better connectors, parsers or data-transformations for the data you collect using StatsD?

- … feel free to propose your own (these are just ideas to kickstart the dialogue)

The idea is to start a collaboration with you.

There is a lot that OWASP can learn from what you are doing, and the more we are able to capture it, the more we can help others who also want to protect their customers, business and applications.

Thanks for your time

Dinis Cruz

OWASP Contributor

Related Etsy posts:

- Etsy.com - A case study on how to do security right?

- Amazing presentation on integrating security into the SDL

- Measure Anything, Measure Everything, AppSensor and Simple Graphite Hosting

7.6 FLOSSHack TeamMentor and the ‘sausage making process’ that is software/application development

OWASP’s FLOSSHack events are a really powerful initiative.

_“…Free/Libre Open Source Software Hacking (FLOSSHack) events are designed to bring together individuals interested in learning more about application security with open source projects and organizations in need of low cost or pro bono security auditing. FLOSSHack provides a friendly, but mildly competitive, workshop environment in which participants learn about and search for vulnerabilities in selected software. In turn, selected open source projects and qualified non-profit organizations benefit from additional quality assurance and security guidance….”_

See FLOSSHack_One for the details (and vulnerabilities discovered) of the first event.

OWASP’s FLOSSHack is one of those ‘magical’ spaces where the OWASP’s community and its projects can come together and add a lot of value.

In fact I remember the idea of doing something like this at the last Summit(s) but we couldn’t find a FLOSS or commercial vendor that wanted to ‘play the game’ :)

And, just for the record, I will be happy to help if an OWASP chapter (or University) wants to do a similar FLOSSHack on TeamMentor.

Although TeamMentor (TM) is not OpenSource, it is very close, since the source code is available and SI allowed me to ‘open it’ as much as (if not more than) other OpenSource projects (note that TeamMentor uses O2 Platform’s FluentSharp APIs, and there have been significant changes/features in the latest version of O2 which are a direct consequence of my TeamMentor development activities (for example the O2 VisualStudio Extension or the Real-Time Vulnerability Feedback in VisualStudio PoC)).

I’m quite proud of the level of openness that TM has, and I hope that other commercial tools follow these ideas/activities. Here are a couple blog posts I wrote about TM’s Security:

- TeamMentor Vulnerability Disclosures: CSRF, ClickJacking and Get Password Hash from Browser Memory - check out the embedded PDFs with details of the vulnerabilities

- Couple XSS issues and XSS-By-Design (in TeamMentor) - and why they were not fixed in the current 3.2 release

- ‘About’ page broken due to ClickJacking protection - good example of the Security TAX that we (developers) have to pay due to security fixes

- Creating an TeamMentor Security Bounty Program - still need to publicly launch this, but for all practical purposes it is active

- Test and Hack TeamMentor server with 3.2 RC5 code and SI library - latest ‘please hack TM’ invite

- ”…O2 in Seattle…” and “…Please Hack TeamMentor (beta)…” - first ‘please hack TM’ invite sent last year

- On Testing TM WebServices

- Documenting how to test WebServices using scripts - the story so far - see how hard it is to test WebServices in a real-world app

- Creating a spreadsheet with WebService’s Authorization Mappings

- Roadmap for Testing an WebService’s Authorization Model

- What is the formula for the WebServices Authentication mappings? - spreadsheet template with Authorisation mappings

- Testing TeamMentor 2.0 security using O2 - how I used a mix of Static and Dynamic Analysis to test the security of the first TM WebService’s refactoring

- SecDDev - Security Driven Development - an interesting idea :)

Note that we really embraced Git and GitHub as part of TeamMentor’s development and workflow:

- Pretty cool visualisation of the ‘GitHub based’ TeamMentor Development+QA+Release workflow

- Master source code: https://github.com/TeamMentor/master

- Bugs and issues: https://github.com/TeamMentor/master/issues

- Version with OWASP Top 10 Library (https://github.com/TeamMentor-OWASP/Master) which you can see in action at http://owasp.teammentor.net (note that this is the full engine with the OWASP Library content released under a CC License)

- Bunch of misc code repositories: https://github.com/TeamMentor

My objective is to create a super secure+powerful application, with maximum visibility+openness, while creating documentation on how it happened (which you can see by the current blog posts)

I think that TeamMentor is a good case study for the challenges of writing secure code, since it is a real-world app, with real-world complexity, real-world legacy stuff and real-world security compromises. This is a great learning opportunity to look at the ‘sausage making process’ that is software/application development (with a bunch of .NET, ASMX, jQuery, JavaScript and XML files which can be easily deployed to the ‘cloud’). We always talk about how OWASP needs to engage with developers, work with them, help them to secure the app…. well here is a good opportunity to do just that.

I want/need help in securing TeamMentor, and it’s not an easy task :)

One area that I really want to move on to next is the implementation of AppSensor-like capabilities, so that malicious activities can be detected and mitigated.

Oh, and I could really do with a good layer of .NET ESAPI controls/capabilities :)

7.7 I never liked the term ‘Rugged Software’, what about Robust/Resilient Software?

I still have not fully rationalised why I don’t like (as a security professional and as a developer) the term (and some parts of the concept) Rugged Software.

Recently when talking about similar concepts (i.e. writing secure code/applications) I found myself talking about the need to create Robust/Resilient Applications.

Isn’t Resilient Software a better term to describe applications/code that are able to correctly handle, mitigate and react to malicious behaviour/input?

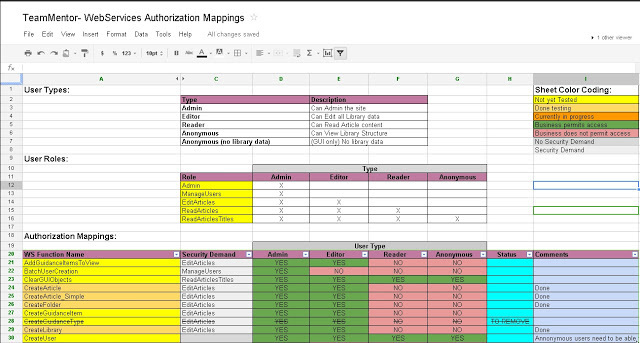

7.8 Is there a spreadsheet/template for Mapping WebServices Authorization Rules?

What is the best way to map/document the Authorization Rules? (for example of WebServices)

I’m looking for a spreadsheet/template that allows the business-rules (i.e. ‘who has access to what’) to be mapped, visualized and analyzed.

I looked at owasp.org and this is what I found (did I miss something?):

- Guide to Authorization

- Codereview-Authorization

- Testing for Authorization

- Reviewing Code for Authorization Issues

- Cheat Sheets (no Authorization one)

In the past I have created a couple of these (some even with O2 Automation), but NDAs prevented me from sharing. So today, since I’m helping Arvind to create a set of Python scripts to test TeamMentor’s WebServices, I took the time to create a model which I think came out quite well.

You can read about it here: Creating a spreadsheet with WebService’s Authorization Mappings and this is what it looks like:

https://docs.google.com/a/owasp.org/spreadsheet/ccc?key=0AhHDFVmo550OdDZUcDU5eXpGVGFKWDZjS3VGUHdUTXc


Since I’m going to integrate this with O2 next, it is better to change it into a better format/standard now (vs later).

I also think that we should have a couple of these templates in an easy to consume format on the OWASP Wiki (I have lost count of the number of times that I have tried to explain the need for ‘such authorization tables/mappings’ without having good examples at hand).

Note that creating these mappings is just one part of the puzzle! Just as important is the ability to keep them well maintained, up-to-date and relevant.
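For readers who have not seen one of these mappings, here is a minimal sketch of the idea in Python (the language of the test scripts mentioned above). The roles, the WebService method names and the `find_mismatches` helper are all hypothetical and only there for illustration; they are not TeamMentor’s real authorization model. The point is that once ‘who has access to what’ is written down as data, it can be compared automatically with what the WebServices actually allow.

```python
# Hypothetical roles and web methods, for illustration only.
EXPECTED = {
    "GetArticle":    {"anonymous": False, "reader": True,  "editor": True,  "admin": True},
    "UpdateArticle": {"anonymous": False, "reader": False, "editor": True,  "admin": True},
    "DeleteUser":    {"anonymous": False, "reader": False, "editor": False, "admin": True},
}


def find_mismatches(expected, observed):
    """Return (method, role, expected, observed) for every rule that differs."""
    mismatches = []
    for method, roles in expected.items():
        for role, allowed in roles.items():
            actual = observed.get(method, {}).get(role)
            if actual != allowed:
                mismatches.append((method, role, allowed, actual))
    return mismatches


# 'observed' would come from test scripts calling the real WebServices;
# here it is hard-coded, with one deliberate authorization bug.
observed = {method: dict(roles) for method, roles in EXPECTED.items()}
observed["DeleteUser"]["editor"] = True
mismatches = find_mismatches(EXPECTED, observed)
```

Each row of the spreadsheet becomes one entry in `EXPECTED`, and keeping the mapping maintained amounts to keeping that one data structure in sync with the business rules.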

7.9 The next level App Security Social Graph

My core belief is that openness and visibility will eventually create a model/environment where the ‘right thing’ tends to happen, since it is not sustainable (or acceptable) to do the ‘wrong thing’ (which without that visibility is usually not exposed and contested). See the first couple of minutes of the Git and Democracy presentation for a really powerful example of this ‘popular/viral awareness’ in action.

When I look at my country (Portugal and now UK) or my industry (WebAppSec) I see countless examples of scenarios where if information was being disclosed and presented in a consumable way, A LOT of what happens would not be tolerated.

For example, we (in the WebAppSec industry) know how bad the software and applications created every day are. And we (and the customers) have accepted that vulnerabilities are just part of creating software, and that the best we can do is to improve the SDL (and reduce risk).

But, if the real scale of the problem was known, would we (as a society or industry) accept it? Would we accept that large parts of our society are built on top of applications that very few people have any idea of how they work (never mind whether they are secure)?

So while OWASP is busy booking meetings to have meetings, the rest of the world is moving on, and is trying to find ways to connect data sets in a way that makes ‘reality understandable/visible’, so that what is really going on is exposed in an easy to consume and actionable way.

For example, take a look at the Next Level Doctor Social Graph for an attempt at driving change while trying to figure out a commercially viable way of doing it (check out their “Open Source Eventually” idea).

From that page, here is their description of the problem:

_“It is very difficult to fairly evaluate the quality of doctors in this country. Our State Medical Boards only go after the most outrageous doctors. The doctor review websites are generally popularity contests. Doctors with a good bedside manner do well. Doctors without strong social skills can do poorly, even if they are good doctors. It is difficult to evaluate doctors fairly. Using this data set, it should be possible to build software that evaluates doctors by viewing referrals as “votes” for each other.”_ (see related reddit thread here)

This is what they call the _Next Level Doctor Social Graph_, and when I was reading it I was thinking about doing the same for software/apps under the title: The next level App Security Social Graph

Here is the same text with some minor changes (in bold) on what **The next level App Security Social Graph** could be:

“It is very difficult to fairly evaluate the quality of **software/application’s security** in this country. Our **regulators** only go after the most outrageous **incidents/data-breaches**. The **product/services** websites are generally popularity contests. **Applications** with good **marketing** do well. **Applications** without strong **presentation** skills can do poorly, even if they are **secure applications**. It is difficult to evaluate **security** fairly. Using this data set, it should be possible to build software that evaluates **application’s security** by viewing **….. (to be defined)**”

It would be great if the current debate was on that ….. (to be defined) bit (ideally with a number of active experiments going on to figure out the best metrics) … but we are quite far away from that world ….

… meanwhile another 8763 vulnerabilities (change this value to a quantity you think is right) have just been created since you started reading this post. These ‘freshly baked’ vulnerabilities are now in some code repository and will be coming soon to an app that you use (and your best defence is to hope that you are not caught by its side-effects)

7.10 Trustworthy Internet Movement and SSL Pulse

Ivan’s interesting work at Qualys continues with the launch of the Trustworthy Internet Movement (TIM) and SSL Pulse at RSA.

There are a number of interesting developments here:

- Great presentation and message

- Real nice project page for SSL-Pulse: https://www.trustworthyinternet.org/ssl-pulse/

- Well-funded project: it looks like they started with a 500k USD investment from Philippe Courtot

- Some efforts at creating a community (with a Join the Movement) although it doesn’t say what happens next

- Reuse of Ivan’s SSL Labs great work gives this ‘Movement’ a good momentum

- Now look at their fundamentals (‘Innovation, Collaborate, Individual Expertise’), principle (‘TIM’s mission is to resolve major lingering security issues on the Internet, such as SSL governance and the spread of botnets and malware, by ensuring security is built into the very fabric of private and public clouds, rather than being an afterthought.’) and Target Audience (‘Experts, Innovators and Technical gurus, Stakeholders, Corporations, Academic institutions and non-profit organizations, Angel investors and VCs’)

- Quite a targeted audience

- Will be interesting to see who joins and provides financial backing

- It’s quite SSL focused; there is a lot more to cloud security than SSL :)

- No reference to openness :)

- It sounds a lot like the model Mark Curphey wishes OWASP would follow :)

So at the moment this is basically a good Qualys branding exercise, and it will help a bit to improve the WebApp security world, but the key question is whether there will be community adoption/participation and whether others will join the party.

There is nothing wrong with what Qualys is doing, and the fact that this investment (on Application Security) is happening outside of OWASP shows that OWASP doesn’t currently have a model/structure that promotes this type of collaboration. And that is very unfortunate, since in terms of worldwide community and reach there is SO much OWASP could do to help this type of initiative.

7.11 Where to have AppSec Q&A threads (what about Reddit?)

Note: I wrote this a while back but somehow it got stuck in my ‘Drafts’ folder (but the question is still relevant in March 2013)

So it looks like StackExchange Security is not going to work for WebAppSec and OWASP, since this question, How to implement url encryption on .xsl page using OWASP ESAPI?, is exactly the type of question we would like to see there, and it has been closed. That said, there are a couple of good Q&As on the OWASP tag: http://security.stackexchange.com/questions/tagged/owasp

And yes, we have the Security 101 mailing list, but that is not really working (look at the traffic) and, practically, mailman sucks for this kind of thing since we really need something with a threaded/social discussion environment (like StackExchange or Reddit).

**So what about Reddit?** I really like its GUI/Workflow, already use it quite a lot, and we already have a nice home there: http://reddit.com/r/owasp

For example I just posted this question there: http://www.reddit.com/r/owasp/comments/10ayls/secure_spring_frameworkuser_management/ (and in Security StackExchange)… let’s see what happens :)

For this to work we need to make sure that the OWASP reddit community gets some visibility and that there is a way to create regular updates on what is going on in there.

What do you think?

7.12 Is the TeamMentor’s OWASP Library content released under an open License?

Following the FLOSSHack TeamMentor thread, Jerry Hoff asked “Is the content in http://owasp.teammentor.net/teamMentor creative commons? Can we use it to freely fill out more of the cheat sheets and use in tutorial videos and so forth?”

And the answer is: YES

Here is the repository for the XML files: https://github.com/TeamMentor-OWASP/Library_OWASP

There are a bunch of (O2 based) tools to consume this content directly, or alternatively you can use the TeamMentor CoreLib from NuGet (which has all the classes and APIs needed)

Note that you can also link directly to the content (articles, libraries, folders or views) :

- by title https://owasp.teammentor.net/article/How_to_Protect_From_Injection_Attacks_in_ASP.NET

- by title https://owasp.teammentor.net/article/How_to_Encrypt_Configuration_Sections_in_ASP.NET_Using_DPAPI

- by title (on articles with the same title):

- https://owasp.teammentor.net/article/All_Database_Input_Is_Validated (Asp.Net 3.5 version)

- https://owasp.teammentor.net/article/All_Database_Input_Is_ValidatedOWASPJava (Java version)

- by GUID: https://owasp.teammentor.net/article/56b0552d-2ceb-4714-a8f1-20a6a8609874

- by View or folder: sometimes it is more user friendly to only expose to the end user (for example) the articles in the A08: Failure to Restrict URL Access view (instead of the whole TM GUI: https://owasp.teammentor.net)

In addition to the ‘article’ pages (linked above) you can also see/consume the content using:

- raw: https://owasp.teammentor.net/raw/All_Database_Input_Is_Validated (this is what the xml file stored in disk looks like)

- html: https://owasp.teammentor.net/html/56b0552d-2ceb-4714-a8f1-20a6a8609874 (direct html page with no AJAX loading or editing capabilities) - TM supports wikitext, xml and xsl content, but I think that all articles in this library are HTML based

- content: https://owasp.teammentor.net/content/56b0552d-2ceb-4714-a8f1-20a6a8609874 (the article’s Html content with no TM Branding)

- jsonp: https://owasp.teammentor.net/jsonp/56b0552d-2ceb-4714-a8f1-20a6a8609874 (to allow the easy consumption of TM content without worrying about that annoying same origin policy security protection :) )

- wsdl: http://owasp.teammentor.net/aspx_pages/tm_Webservices.asmx - note: if you want to fuzz this, I can set-up a dedicated cloud version for you (on AppHarbor or Azure)
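The URL patterns listed above are regular enough that a consumer script can build them from a view name plus an article title or GUID. The sketch below only builds the URLs (it makes no network calls); `tm_url` is a hypothetical helper, and the idea that every view accepts both titles and GUIDs is an assumption, since the examples above only show some of the combinations.

```python
BASE_URL = "https://owasp.teammentor.net"
# View names taken from the URL examples listed above.
VIEWS = ("article", "raw", "html", "content", "jsonp")


def tm_url(view: str, article_id: str, base_url: str = BASE_URL) -> str:
    """Build a TeamMentor content URL for an article title or GUID."""
    if view not in VIEWS:
        raise ValueError(f"unknown view: {view}")
    return f"{base_url}/{view}/{article_id}"


guid = "56b0552d-2ceb-4714-a8f1-20a6a8609874"
urls = {view: tm_url(view, guid) for view in VIEWS}
raw_by_title = tm_url("raw", "All_Database_Input_Is_Validated")
```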

For reference the TM Documentation is at: https://docs.teammentor.net

The page https://docs.teammentor.net/xml/Eval contains 4 videos and a download link (that points to the GitHub version) which allow you to run TM locally (btw look at the source code of that page and see some XML+XSL foo action :) )

7.13 Reaching out to Developers, Aspect is doing it right with Contrast

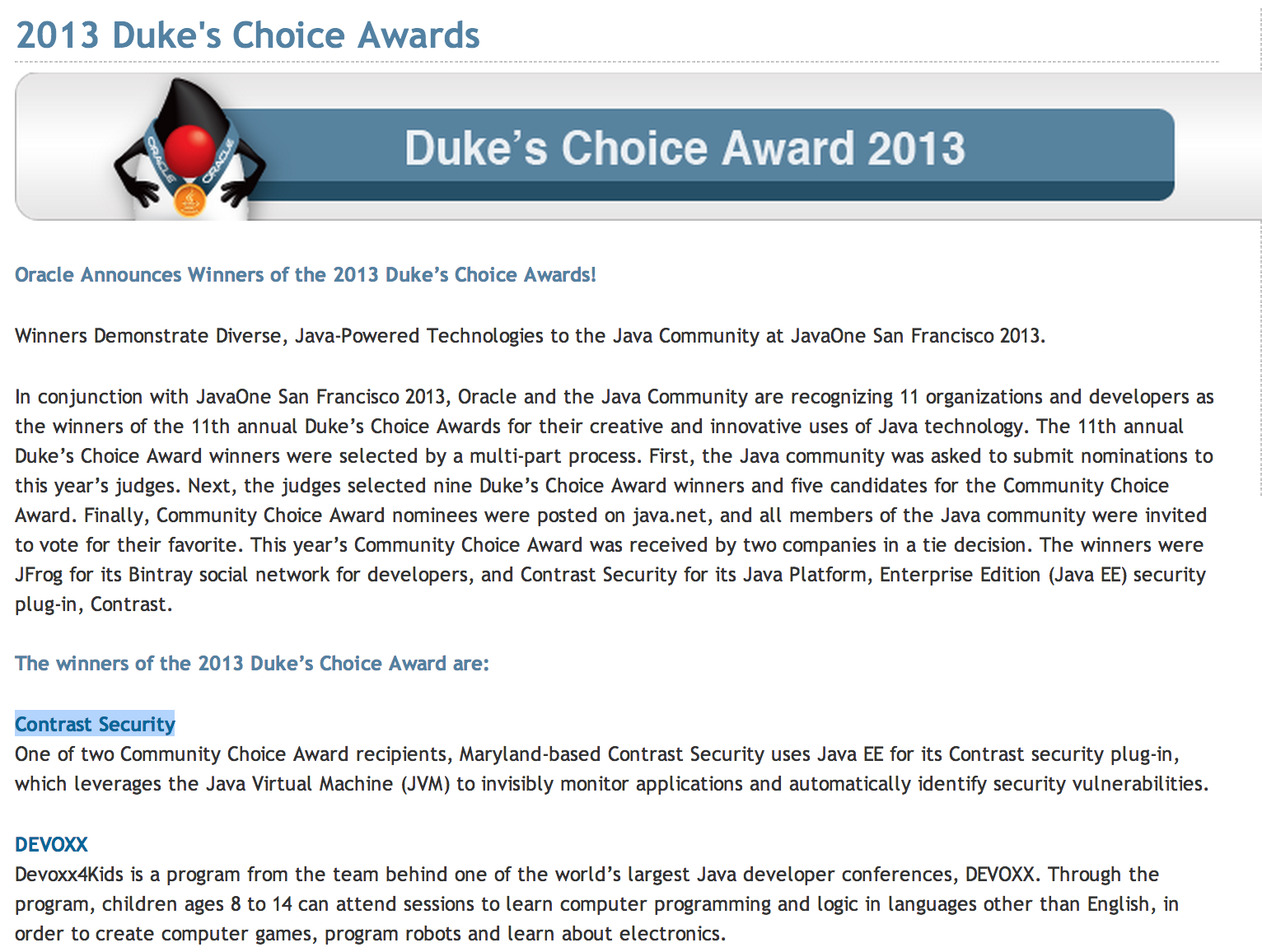

UPDATE: I got the dates wrong when I posted this. The Contrast blog post and presentation are from 2012, it is the award that is from 2013:

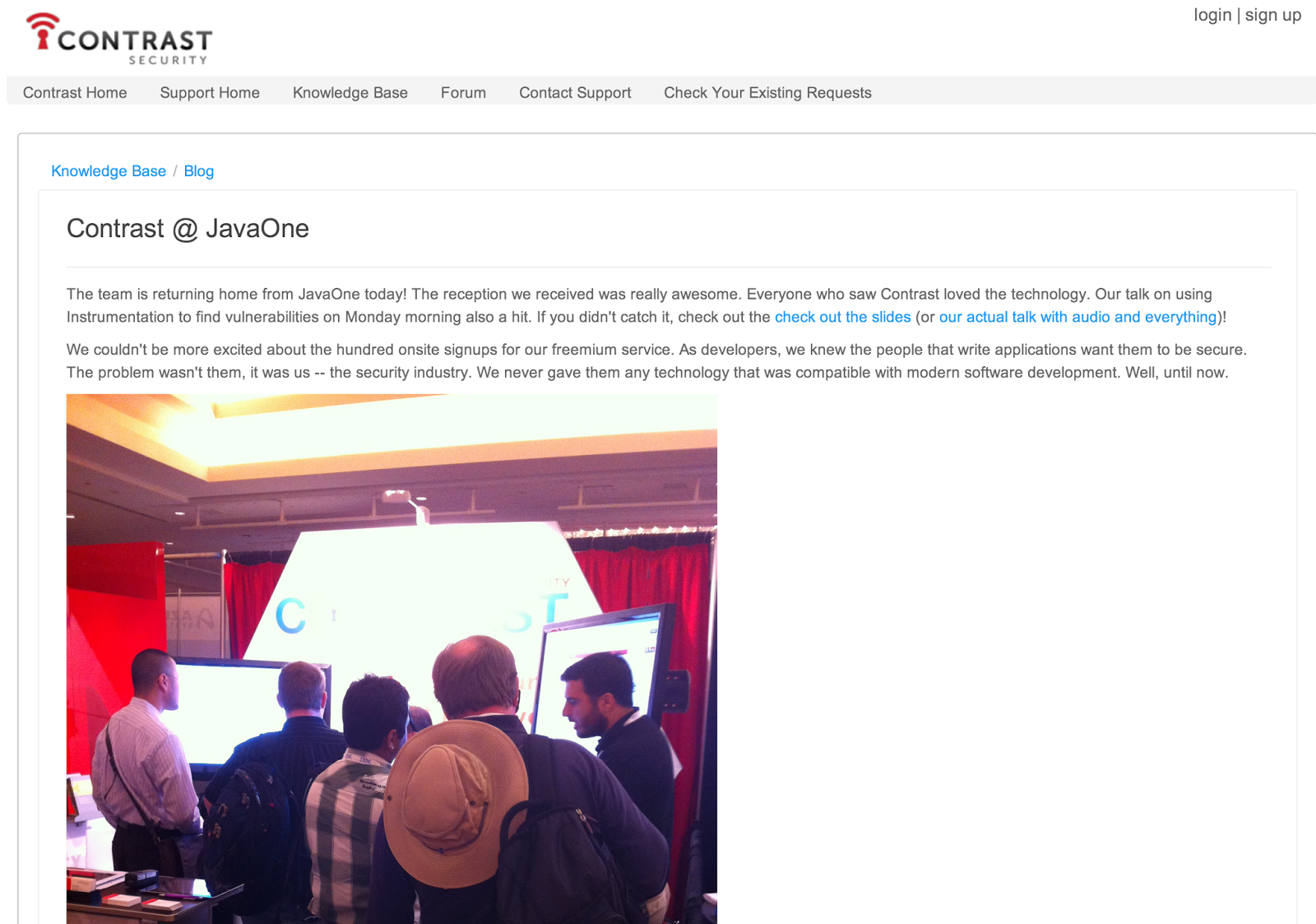

In case you missed it, OWASP’s long-time contributor Aspect Security was at the JavaOne conference presenting their (commercial) product Contrast.

I was not there, but from the noises I’m hearing it was quite a successful event, with lots of developers reached.

Here is a cool picture from their Contrast @ JavaOne post (which contains a link to their presentation (also embedded below)):

The presentation is a good overview of how their technology works, and although those ‘fake tweets’ are a bit too much for me, this is a great ‘soft’ sales pitch for their product.

I wished they had resisted the cheap-shots at the other Dynamic/Static products/solutions, since to solve the web application security problem, we need all available technologies to work together (not against each other).

It would also have been amazing if this technology was open source, but that is another example of the failure of Open Source to create viable business models for companies like Aspect.

That said, compared with the other tool vendors, Aspect and Contrast are a breath of fresh air (and I still have to follow up on Jeff’s and Arshan’s offer to get a proper demo of Contrast (I need to find a project to use it on)).

So congratulations to Aspect for focusing on developers, for trying to inject security deep into the SDL (where it needs to be) and for winning a 2013 Duke’s Choice Award:

**Presentation: Using Instrumentation to Find Security Vulnerabilities in JavaEE Applications**

…. as used by commercial product: Contrast

… I wonder if there are open source alternatives of this technique :)

7.14 My comments on the SATEC document (Static Analysis Tool Evaluation Criteria)

(submitted today to the wasc-satec@lists.webappsec.org list)

A bit late (deadline for submission is today) but here are my notes on the version currently at http://projects.webappsec.org/w/page/41188978/Static%20Analysis%20Tool%20Evaluation%20Criteria

My comments/notes are marked as Content to add in underscore, bold and Italic or [content to be deleted in red]

When I wanted to make a comment on particular change or deletion, I did it on a new line:

DC Comment: … a comment goes here in dark blue

Of course that this is my opinion, and these notes are based on the notes I took in ‘analogue mode’ (i.e. on paper :) )

Table of Contents:

Introduction:

Static Code Analysis is the analysis of software code [without actually executing the binaries resulting from this code].

DC Comment: we don’t need the extra definition, since it is possible to do static code analysis based on information/code/metadata obtained at run-time or via selective execution/simulation of the code/binaries. The key concept is that static analysis is about analysing and applying rules to a set of artefacts that have been extracted from the target application. From this point of view, we can do static analysis on an AST (extracted from source code), an intermediate representation (extracted from a .net or java binary) or run-time traces (extracted from a running application). We can also do static analysis on an application’s config files, on an application’s authorisation model or even on application specific data (for example the security controls applied to a particular asset).
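To make the ‘rules applied to extracted artefacts’ idea concrete, here is a minimal sketch using Python’s standard `ast` module. The rule set (`DANGEROUS_CALLS`) and the sample source are made up for illustration; a real tool would have far richer rules. The point is only that the AST is the artefact being analysed and the code itself is never executed.

```python
import ast

# Sample target code (illustrative only); it is parsed, never run.
SOURCE = '''
import os

def run(cmd):
    os.system(cmd)
    print("done")
'''

# Hypothetical rule: calls whose dotted name is in this set are reported.
DANGEROUS_CALLS = {"os.system", "eval"}


def dotted_name(node):
    """Return 'a.b.c' for Name/Attribute chains, or None for anything else."""
    if isinstance(node, ast.Name):
        return node.id
    if isinstance(node, ast.Attribute):
        base = dotted_name(node.value)
        return f"{base}.{node.attr}" if base else None
    return None


def find_dangerous_calls(source):
    """Apply the rule to the AST artefact extracted from the source."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Call):
            name = dotted_name(node.func)
            if name in DANGEROUS_CALLS:
                findings.append((name, node.lineno))
    return findings


findings = find_dangerous_calls(SOURCE)
```

The same rule could just as well be applied to an intermediate representation or a run-time trace; only the extraction step changes.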

Static code analysis aims at automating code analysis to find as many common [quality and] security software issues as possible. There are several open source and commercial analyzers available in the market for organizations to choose from.

DC Comment: it doesn’t make sense to add ‘quality’ to the mix (in fact, the more I read this document the more I thought that the word ‘security’ should be part of the title of this document/criteria). Quality is a massive area in its own right, and apart from this small comment/reference, there is not a lot of ‘software quality’ in this document. This is a document focused on software security issues :) , and yes, security is a sub-set of quality (which is ok if referenced like that).

Static code analysis analyzers are rapidly becoming an essential part of every software organization’s application security assurance program. Mainly because of the analyzers’ ability to analyze large amounts of source code in considerably shorter amount of time than a human could, __and the ability to automate security knowledge and workflows__


DC Comment: The key advantage of static analysis is that it can codify an application security specialist’s knowledge and workflows. By this, I mean that for the cases where it is possible to codify a particular type of analysis (and not all types of analysis can be automated), these tools can perform those analyses in a repeatable, quantifiable and consistent way. Scanning large code-bases is important, but more important is the ability to scale security knowledge, especially since I’ve seen cases where ‘large code scans’ were achieved by dropping results or skipping certain types of analysis (in fact most scanners will scan an app of any size if you delete all its rules :) ).

The goal of the SATEC project is to create a vendor-neutral document to help guide application security professionals during __the creation of a__ source-code driven security __programme__ [assessments]. This document provides a comprehensive list of features that should be considered when __evaluating__ [conducting] a security code __Tool__ [review]. Different users will place varying levels of importance on each feature, and the SATEC provides the user with the flexibility to take this comprehensive list of potential analyzer features, narrow it down to a shorter list of features that are important to the user, assign weights to each feature, and conduct a formal evaluation to determine which scanning solution best meets the user’s needs.

The aim of this document is not to define a list of requirements that all static code analyzers must provide in order to be considered a “complete” analyzer, and evaluating specific products and providing the results of such an evaluation is outside the scope of the SATEC project. Instead, this project provides the analyzers and documentation to enable anyone to evaluate static code analysis analyzers and choose the product that best fits their needs. NIST Special Publication 500-283, “Source Code Security Analysis Analyzer Functional Specification Version 1.1”, contains minimal functional specifications for static code analysis analyzers. This document can be found at http://samate.nist.gov/index.php/Source_Code_Security_Analysis.html.

**Target Audience: **

The target audience of this document is the technical staff of software organizations who are looking to automate parts of their source code driven security testing using one or more static code analyzers, __and application security professionals (internal or external to the organisation) that are responsible for performing application security reviews__. The document will take into consideration those who would be evaluating the analyzer and those who would actually be using the analyzer.

**Scope: **

The purpose of this document is to develop a set of criteria that should be taken into consideration while evaluating Static Code Analysis Tools [analyzers] for security testing.

DC Comment (and rant): OK, WTF is this ‘Analysis Analyzers’ stuff!!! This is about a Tool, right? Of course a tool that does software analysis is an analyzer, but saying that we are talking about a code analysis analyzer sounds quite redundant :) There is TOOL in the name of the document, and we are talking about tools. In fact, these static analysis tools perform a bunch of analysis and, more importantly (as multiple parts of this document cover), the **Analyzer** part of these analysis tools is just **one** of its required/desired features (for example enterprise integration and deployability are very important, and have nothing to do with the ‘Analyzer’ part).

If I can’t change your mind about the redundant Analyzer, then you will need to rename this document to SAAEC (Static Analysis Analyzer Evaluation Criteria), actually what about the SAAEA (Static Analysis Analyzer Evaluation Analysis) :)

Every software organization is unique in their environment. The goal is to help organizations achieve better application security in their own unique environment, the document will strictly stay away from evaluating or rating analyzers. However, it will aim to draw attention to the most important aspects of static analysis Tools [analyzers] that would help the target audience identified above to choose the best Tool [analyzer] for their environment and development needs.

Contributors:

Aaron Weaver (Pearson Education)

Abraham Kang (HP Fortify)

Alec Shcherbakov (AsTech Consulting)

Alen Zukich (Klocwork)

Arthur Hicken (Parasoft)

Amit Finegold (Checkmarx)

Benoit Guerette (Dejardins)

Chris Eng (Veracode)

Chris Wysopal (Veracode)

Dan Cornell (Denim Group)

Daniel Medianero (Buguroo Offensive Security)

Gamze Yurttutan

Henri Salo

Herman Stevens

Janos Drencsan

James McGovern (HP)

Joe Hemler (Gotham Digital Science)

Jojo Maalouf (Hydro Ottawa)

Laurent Levi (Checkmarx)

Mushtaq Ahmed (Emirates Airlines)

Ory Segal (IBM)

Philipe Arteau

Sherif Koussa (Software Secured) [Project Leader]

Srikanth Ramu (University of British Columbia)

Romain Gaucher (Coverity)

Sneha Phadke (eBay)

Wagner Elias (Conviso)

Contact:

Participation in the Web Application Security Scanner Evaluation Criteria project is open to all. If you have any questions about the evaluation criteria, please contact Sherif Koussa ( sherif dot koussa at gmail dot com)

Criteria:


DC Comment: I think this criteria should be split into two separate parts:

- **Operational Criteria** - These are generic items that are desired on any application that wants to be deployed on an enterprise (or to a large number of users). Anything that is not specific to analysing an application for security issues (see next point) should be here. For example installation, deployability, standards, licensing, etc. (in fact this could be a common document/requirement across the multiple WASC/OWASP published criteria)

- **Static Analysis Criteria** - Here is where all items that are relevant to a static analysis tool should exist. These items should be specific and non-generic. For example:

- **‘the rules used by the engine should be exposed and consumable’** is an operational criterion (all tools should allow that)

- **‘the rules used by the engine should support taint-flow analysis’** is a static analysis criterion (since only these tools do taint-flow analysis)

Below I marked each topic with either [Operational Criteria] or [Static Analysis Criteria]

1. Deployment:

Static code analyzers often represent a significant investment by software organizations looking to automate parts of their software security testing processes. Not only do these analyzers represent a monetary investment, but they demand time and effort by staff members to setup, operate, and maintain the analyzer. In addition, staff members are required to check and act upon the results generated by the analyzer. Understanding the ideal deployment environment for the analyzer will maximize the derived value, help the organization uncover potential security flaws and will avoid unplanned hardware purchase cost. The following factors are essential to understanding the analyzer’s capabilities and hence ensuring proper utilization of the analyzer which will reflect positively on the analyzer’s utilization.

1.1 Analyzer Installation Support: [Operational Criteria]

A static code analyzer should provide the following :

- **Installation manual: **specific instructions on installing the analyzer and its subsystems if any (e.g. IDE plugins) including minimum hardware and software requirements.

- **Operations manual: **specific and clear instructions on how to configure and operate that analyzer and its subsystems.

- **SaaS Based Analyzers: **since there is no download or installation typically involved in using a SaaS based analyzer, the vendor should be able to provide the following:

- Clear instructions on how to get started.

- Estimated turn-around time since the code is submitted until the results are received.

- What measures are being taken to keep the submitted code or binaries, as well as the reports, confidential.

1.2 Deployment Architecture: [Operational Criteria]

Vendors provide various analyzer deployment options. Clear description of the different deployment options must be provided by the vendor to better utilize the analyzer within an organization. In addition, the vendor must specify the optimal operating conditions. At a minimum the vendor should be able to provide:

- The type of deployment: server-side vs client-side as this might require permissions change or incur extra hardware purchase.

- Ability to run simultaneous scans at the same time.

- The analyzers capabilities of accelerating the scanning speed (e.g. ability to multi-chain machines, ability to take advantage of multi-threaded/multi-core environments, etc)

- The ability of the analyzer to scale to handle more applications if needed.

1.3 Setup and Runtime Dependencies: [Static Analysis Criteria]

The vendor should be able to state whether the Tool [analyzer] uses a compilation based analysis or source code based analysis.

- Compilation based analysis: where the Tool [analyzer] first compiles the code together with all dependencies, or the analyzer just analyses the binaries directly. Either way, the analyzer requires all the application’s dependencies to be available before conducting the scan; this enables the analyzer to scan the application as close to the production environment as possible.

- Source code based analysis: does not require dependencies to be available for the scan to run. This could allow for quicker scans since the dependencies are not required at scan time.

- __Dynamic based analysis: where the tool analyzes data collected from real-time (or simulated) application/code execution (this could be achieved with AOP, code instrumentation, debugging traces, profiling, etc.)__
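As a rough illustration of the dynamic option, the sketch below instruments two made-up functions (`read_param` and `render`) with a decorator, runs them once to collect a trace, and then applies a source-to-sink rule to that trace. Matching values by equality is a simplification chosen for brevity; it is an assumption of this sketch, not how any particular product works.

```python
import functools

TRACE = []  # run-time artefact: ordered list of (function name, args, result)


def instrument(func):
    """Record every call and its result, then hand the result back unchanged."""
    @functools.wraps(func)
    def wrapper(*args):
        result = func(*args)
        TRACE.append((func.__name__, args, result))
        return result
    return wrapper


@instrument
def read_param(name):
    # Hypothetical source: pretend this reads attacker-controlled input.
    return "<script>" if name == "q" else ""


@instrument
def render(text):
    # Hypothetical sink: writes the value into an HTML page.
    return f"<p>{text}</p>"


render(read_param("q"))  # exercise the app so that a trace exists


def source_to_sink(trace, source, sink):
    """Report sink calls that received a value previously returned by the source."""
    tainted = {result for name, _, result in trace if name == source}
    return [args for name, args, _ in trace
            if name == sink and any(arg in tainted for arg in args)]


hits = source_to_sink(TRACE, "read_param", "render")
```

The analysis step only looks at the recorded trace, so it sees exactly which code was touched during execution.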

2. Technology Support: [Static Analysis Criteria]

Most organizations leverage more than one programming language within their applications portfolio. In addition, more software frameworks are becoming mature enough for development teams to leverage and use across the board, as well as a score of 3rd party libraries and technologies which are used both on the server and client side. Once these technologies, frameworks and libraries are integrated into an application, they become part of it and the application inherits any vulnerability within these components.

2.1 Standard Languages Support: [Static Analysis Criteria]

Most of the analyzers support more than one programming language. However, an organization looking to use [acquire] a static code analysis Tool [analyzer] should make an inventory of all the programming languages, and their versions, used within the organizations as well as third party applications that will be scanned as well. After shortlisting all the programming languages and their versions, an organization should compare the list against the Tool’s [analyzer’s] supported list of programming languages and versions. Vendors provide several levels of support for the same language. Understanding what level of support the vendor provides for each programming language is key to understanding the coverage and depth the analyzer provides for each language. One way of understanding the level of support for a particular language is to inspect the Tool’s [analyzer’s] signatures (AKA Rules or Checkers) for that language.

DC Comment: very important here is to also map/define if these rules are generic or framework/version specific. For example do all java rules apply to all java code, or are there rules that are specific to a particular version of Java (for example 1.4 vs 1.7) or Framework (for example spring 1.4 vs 2.0).

This is really important because there are certain vulnerabilities that only exist on certain versions of particular frameworks. For example, I believe that the HttpResponse.Redirect in the version 1.1 of the .NET Framework was vulnerable to Header Injection, but that was fixed on a later release. Static code analysis should take this into account, and not flag all unvalidated uses of this Redirect method as Header Injection vulnerabilities.
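One way to picture version-specific rules is to attach a version bound to each rule and filter on it before reporting. The sketch below is only that: the `Rule` shape is hypothetical, and the `fixed_in` value is a placeholder (I have not verified in which release the Redirect issue was fixed).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    method: str
    vuln_type: str
    fixed_in: tuple  # first framework version where the rule no longer applies


# Hypothetical rule data: (2, 0) is a placeholder, not a verified fact.
RULES = [Rule("HttpResponse.Redirect", "Header Injection", fixed_in=(2, 0))]


def applicable(rules, method, framework_version):
    """Return the vulnerability types that apply to this method on this version."""
    return [rule.vuln_type for rule in rules
            if rule.method == method and framework_version < rule.fixed_in]


old_framework = applicable(RULES, "HttpResponse.Redirect", (1, 1))
new_framework = applicable(RULES, "HttpResponse.Redirect", (4, 0))
```

With this kind of metadata the same unvalidated Redirect call would be reported against the old framework version and ignored against the newer one.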

2.2 Programming Environment Support: [Static Analysis Criteria]

Once an application is built on top of a framework, the application inherits any vulnerability in that framework. In addition, depending on how the application leverages a framework or a library, it can add new attack vectors. __It is very important for the analyzer to be able to trace tainted data through the framework as well as the custom modules built on top of it.__

DC Comment: No, I don’t agree with the underscored line above. What is important is to understand HOW the frameworks work/behave.

Also this comment doesn’t make a lot of sense in the way most (if not all) current static analysis is done. There are two key issues here:

#1) what is the definition of a ‘Framework’

#2) what does it mean to ‘trace tainted data through the framework’

On #1, unless we are talking about C/C++ (and even then) most code analysis is done on Frameworks. I.e. everything is a framework (from the point of view of the analysis engine). The analysis engine is ‘told’ that a particular method behaves in a particular way and it bases its analysis on that.

From the point of view of a scanning engine, there is no difference between the way asp.net aspx works, vs the way the asp.net mvc framework behaves. Same thing for java, where from the scanning engine point of view there is no difference between the classes in a JRE (see http://hocinegrine.com/wp-content/uploads/2010/03/jdk_jre.gif) and Spring Framework classes.

In fact most static analysis is done based on:

- sources: locations of the code that are known to have malicious data (which we call tainted data)

- taint propagators : methods that will pass tainted data to one of its parameters or return value

- validators: methods that will remove taint (ideally not blankly but based on a particular logic/vulnType)

- reflection/hyper-jumps/glues: cases where the application flow jumps around based on some (usually framework-driven) logic

- sinks: methods that are known to have a particular vulnerability (and should not be exposed to tainted data)

- application control flows : like if or switch statements which affect the exposure (or not) to malicious/taint data

- application logic: like mapping the authorization model and analysing its use


The way most analysis is done, is to have rules that tell the engine how a particular method works. So in the .NET framework, the tools don’t analyse Request.Querystring or Response.Write. They are ‘told’ that one is a source and the other is a sink. In fact, massive blind spots happen when there are wrappers around these methods that ‘hide’ their functionality from the code being analysed.
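Here is a small sketch of that ‘told, not analysed’ model and of the wrapper blind spot. The program is reduced to a list of statements, and the engine only knows what the rule sets tell it. `Request.QueryString` and `Response.Write` are the methods mentioned above; `HtmlEncode` and `Helpers.Print` are hypothetical names used for illustration.

```python
SOURCES = {"Request.QueryString"}
SINKS = {"Response.Write"}
VALIDATORS = {"HtmlEncode"}

# Each statement: (assigned variable or None, method called, argument variables)
PROGRAM = [
    ("name", "Request.QueryString", []),
    ("safe", "HtmlEncode", ["name"]),
    (None, "Response.Write", ["safe"]),  # validated data: no finding
    (None, "Response.Write", ["name"]),  # tainted data: finding
    (None, "Helpers.Print", ["name"]),   # wrapper around Response.Write
]


def scan(program, sources, sinks, validators):
    """Return the indexes of statements where tainted data reaches a known sink."""
    tainted, findings = set(), []
    for index, (target, method, args) in enumerate(program):
        if method in sinks and any(arg in tainted for arg in args):
            findings.append(index)
        if target is None:
            continue
        if method in sources:
            tainted.add(target)
        elif method not in validators and any(arg in tainted for arg in args):
            tainted.add(target)      # unknown methods are treated as propagators
        else:
            tainted.discard(target)  # validated or clean value
    return findings


default_findings = scan(PROGRAM, SOURCES, SINKS, VALIDATORS)
with_wrapper_rule = scan(PROGRAM, SOURCES, SINKS | {"Helpers.Print"}, VALIDATORS)
```

With the default rules the wrapper call is silently missed; it is only reported once somebody adds a rule saying that `Helpers.Print` is a sink.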

Even on C, there is usually a rule for strcpy which is used to identify buffer overflows. Again most scanners will miss methods that have the exact same behaviour as **strcpy** but are called something different (in fact, I can write such methods in C# that are vulnerable to buffer overflows, which will be missed by most (if not all) current tools :) ).

On the #2 point, yes ideally the scanners should be scanning the inside of these methods, but most scanners (if not all) would blow up if they did.

And even if they did it, it wouldn’t really work since each vulnerability has a particular set of patterns and context.

So what does it mean ‘to trace tainted data through frameworks’? Are we talking about being able to follow taint over a sequence like this:

a) request starts on a view that is posting data to a

b) controller that sends the data to the

c) **business/db layer** which does something with it, and sends the result to a

d) view that displays the result to user?

THIS is what I think is important. I.e. we are able to analyze data based on the actual call-flow of the application.

So in a way, we don’t need to ‘trace data’ through the frameworks (as in ‘what is going on inside’) but on top of the frameworks (as in ‘what code is touched/executed’).

This is actually where the new type of scanners which do a mix of static and dynamic analysis (like seeker, contrast, glass box stuff from IBM, etc…) have a big advantage (vs traditional AST or binary-based scanners), since they can actually ‘see’ what is going on, and know (for example) which view is actually used on a particular controller.

At large, frameworks and libraries can be classified to three types:

- Server-side Frameworks: frameworks/libraries that reside on the server, e.g. Spring, Struts, Rails, .NET etc

- Client-side Frameworks: which are the frameworks/libraries that reside on browsers, e.g. JQuery, Prototype, etc

- __where is the 3rd type?__

DC Comment: these ‘types of framework’ don’t make sense here (i.e. these are not really different ‘types of frameworks’, just different execution engines).

Now on the topic of client-side and server-side code, the real interesting questions are:

- Can the tool ‘connect’ traces from server-side code to traces on the client-side code?

- Can the tool understand the context in which the server-side code is used on the client side (for example the difference between a Response.Write/TagLib being used to output data into an HTML element or an HTML attribute)?
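The context question can be shown with Python’s standard `html.escape`, used here as a stand-in for whatever encoder the server-side code calls. The payload and markup are made up; the point is that an encoding that is fine inside an element body is not enough inside an attribute value.

```python
import html

payload = '" onmouseover="alert(1)'

# Escapes only &, < and > (acceptable for element content).
element_encoded = html.escape(payload, quote=False)
# Also escapes quote characters (needed inside attribute values).
attribute_encoded = html.escape(payload, quote=True)

in_element = f"<p>{element_encoded}</p>"
in_attribute_wrong = f'<input value="{element_encoded}">'
in_attribute_right = f'<input value="{attribute_encoded}">'
```

A tool that only knows ‘this value was encoded’ would treat both attribute cases the same; a tool that understands the output context can tell that `in_attribute_wrong` still lets the payload break out of the attribute.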

Understanding the relationship between the application and the frameworks/libraries is key in order to detect vulnerabilities resulting from the application’s usage of the framework or the library, and the following in particular:

- identify whether the application is using the framework in an insecure manner.

- The analyzer would be able to follow tainted data between the application and the framework.

- The analyzer’s ability to identify security misconfiguration issues in the framework\library.

- Well-known vulnerability identified by the Common Vulnerabilities and Exposures (CVE)

DC Comment: see my point above about everything being a framework, and in fact, what happens is that most apps are made of:

a) language APIs

b) base class APIs

c) 3rd party frameworks that extend the base class APIs with new functionality

d) in-house APIs

Which all behave like ‘frameworks’

Which means, that the first important question to ask is: What is the level of Framework support that a particular tool has?

The 2nd (and what I like about the items listed above) is the important question of: Is the Framework(s) being used securely?

The 2nd point is very important, since even frameworks/apis that are designed to provide a security service (like an encoding/filtering/authentication api) can be used insecurely.

In a way, what we should be asking/mapping here is: What are the known issues/vulnerabilities that the tool is able to detect?

Note: one of the areas where we (the security industry) are still failing a lot is in helping/pushing the framework vendors to ‘codify’ how their frameworks behave, so that our tools/manual analysis know what to look for.

2.3 Industry Standards Aided Analysis:

Industry standard weaknesses classification, e.g. OWASP Top 10, CWE/SANS Top 25, WASC Threat Classification, DISA/STIG etc provide organizations with starting points to their software security gap analysis and in other cases these classifications become metrics of minimum adherence to security standards. Providing industry standard aided analysis becomes a desirable feature for many reasons.

DC Comment: I don’t understand the relevance of this 2.3 item (in this context). These ‘standards’ are more relevant in the list of issues to find and in vulnerability discovery/repairing.

2.4 Technology Configuration Support: [Static Analysis Criteria]

Several tweaks provided by the analyzer could potentially uncover serious weaknesses.

- **Configuration Files Redefinition:** configurations to other file types (e.g. *.ini, *.properties, *.xml, etc). It is a desirable and a beneficial feature to configure the analyzer to treat a non-standard extension as a configuration file.

- **Extension to Language Mapping:** the ability to extend the scope of the analyzer to include non-standard source code file extensions. For example, JSPF are JSP fragment files that should be treated just like JSP files. Also, HTC files are HTML fragment files that should be treated just like HTML files. PCK files are Oracle’s package files which include PL/SQL script. While an analyzer does not necessarily have to understand every non-standard extension, it should include a feature to extend its understanding to these extensions.
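A minimal sketch of what such an extension-to-language mapping could look like as user-editable configuration. The default map and the language labels are assumptions made for the example; the JSPF, HTC and PCK entries are the ones described above.

```python
from pathlib import PurePath

# Assumed built-in mapping shipped with a (hypothetical) tool.
DEFAULT_MAP = {".jsp": "jsp", ".html": "html", ".sql": "plsql"}

# User-supplied additions for non-standard extensions.
CUSTOM_MAP = {".jspf": "jsp", ".htc": "html", ".pck": "plsql"}


def language_for(path, extra=None):
    """Return the language used to parse the file, or None if it is skipped."""
    mapping = {**DEFAULT_MAP, **(extra or {})}
    return mapping.get(PurePath(path).suffix.lower())


skipped = language_for("fragments/header.jspf")
mapped = language_for("fragments/header.jspf", CUSTOM_MAP)
```

Without the custom mapping the fragment file is silently skipped, which is exactly the kind of blind spot this criterion is trying to expose.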

DC Comment: The key issue here is for the Tool to understand how the target app/framework behaves. And that is only possible if the artefacts used by those frameworks are also analyzed.

I would propose that we rename this section as ‘Framework configuration support’ and add more examples of the types of ‘things’ that need to be looked at (for example the size of Models in MVC apps which could lead to Mass-Assignment/Auto-Binding vulnerabilities).
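To illustrate the ‘size of Models’ point, the sketch below compares the fields a model can bind with the fields a form actually exposes. The `User` model and the form field list are invented for the example; in a real MVC app the tool would have to extract both from the framework artefacts.

```python
from dataclasses import dataclass, fields


@dataclass
class User:
    # Hypothetical model that an MVC framework would auto-bind from a request.
    name: str = ""
    email: str = ""
    is_admin: bool = False


# Fields rendered by the (hypothetical) edit-profile view.
FORM_FIELDS = {"name", "email"}


def unexposed_bindable_fields(model, form_fields):
    """Fields an attacker could still set although the view never shows them."""
    return sorted({field.name for field in fields(model)} - set(form_fields))


extra_fields = unexposed_bindable_fields(User, FORM_FIELDS)
```

Any field in that difference is a Mass-Assignment/Auto-Binding candidate that deserves a closer look.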

3. Scan, Command and Control Support: [Operational Criteria]

The scan, command and control of static code analysis analyzers has a significant influence on the user’s ability to configure, customize and integrate the analyzer into the organization’s Software Development Lifecycle (SDLC). In addition, it affects both the speed and effectiveness of processing findings and remediating them.

3.1 Command line support: [Operational Criteria]

The user should be able to perform scans using the command line, which is a desirable feature for many reasons, e.g. avoiding unnecessary IDE licensing, build system integration, custom build script integration, etc. For SaaS based tools, the vendor should be able to indicate whether there are APIs to initiate the scan automatically; this becomes a desirable feature for scenarios involving a large number of applications.

3.2 IDE integration support: [Operational Criteria]

The vendor should be able to enumerate which IDEs (and versions) are being supported by the analyzer being evaluated, as well as what the scans via the IDE will incorporate. For example, does an Eclipse plugin scan JavaScript files and configuration files, or does it only scan Java and JSP files.

DC Comment: the key question to ask here is **WHO is doing the scan?** I.e. is the scan actually done by the IDE’s plugin (like in the Cat.net case) or is the plug-in just a ‘connector’ into the main engine (running on another process or server)? Most commercial scanners work in the latter way, where the IDE plugins are mainly used for: scan triggers, issues view, issues triage and reporting.

3.3 Build systems support: [Operational Criteria]

The vendor should be able to enumerate the build systems supported and their versions (Ant, Make, Maven, etc). In addition, the vendor should be able to describe what gets scanned exactly in this context.

3.4 Customization: [Static Analysis Criteria]

The analyzer usually comes with a set of signatures (AKA rules or checkers); this set is usually followed by the analyzer to uncover the different weaknesses in the source code. Static code analysis should offer a way to extend these signatures in order to customize the analyzer’s capabilities of detecting new weaknesses, alter the way the analyzer detects weaknesses or stop the analyzer from detecting a specific pattern. The analyzer should allow users to:

- **Add/delete/modify core signatures:** Core signatures come bundled with the analyzer by default. False positives are one of the inherent flaws in static code analysis analyzers in general. One way to minimize this problem is to optimize the analyzer’s core signatures, e.g. mark a certain source as safe input.

- **Author custom signatures:** custom signatures are used to “educate” the analyzer of the existence of a custom cleansing module, custom tainted data sources and sinks, as well as a way to enforce certain programming styles by developing custom signatures for these styles.

- **Training:** the vendor should state whether writing new signatures requires extra training.
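The add/delete/modify idea can be sketched as an overlay on top of the bundled signatures. Everything here is hypothetical (the signature kinds, the `Acme.*` names and the `customise` helper); it only shows the shape of the operation, with the core set left untouched so the customisation can be reviewed and reverted.

```python
# Assumed bundled signatures of a (hypothetical) tool.
CORE = {
    "sources": {"Request.QueryString", "Config.Read"},
    "sinks": {"Response.Write"},
    "cleansers": {"HtmlEncode"},
}


def customise(core, add=None, remove=None):
    """Return a new signature set; the core set is not modified."""
    result = {kind: set(names) for kind, names in core.items()}
    for kind, names in (add or {}).items():
        result.setdefault(kind, set()).update(names)
    for kind, names in (remove or {}).items():
        result.get(kind, set()).difference_update(names)
    return result


custom = customise(
    CORE,
    # Teach the engine about an in-house cleansing module and an in-house sink.
    add={"cleansers": {"Acme.Sanitizer.Clean"}, "sinks": {"Acme.Log.Raw"}},
    # Mark a source as safe input to cut down false positives.
    remove={"sources": {"Config.Read"}},
)
```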

DC Comment: customisation is (from my point of view) THE most important differentiator of an engine, since out-of-the-box most commercial scanners are kind-of-equivalent (i.e. they all work well in some areas and really struggle on others).

Here are some important areas to take into account when talking about customization:

- Ability to access (or even better, to manipulate) the internal-representations of the code/app being analysed

- Ability to extend the current types of rules and findings (being able to for example add an app/framework specific authorization analysis)

- Open (or even known/published) schemas for the tool’s: rules, findings and intermediate representations

- Ability for the client to publish their own rules in a license of their choice

- REPL environment to test and develop those rules

- Clearly define and expose the types of findings/analysis that the Tool’s rules/engine are NOT able to find (ideally this should be application specific)

- Provide the existing ‘out-of-the-box’ rules in an editable format (the best way to create a custom rule is to modify an existing one that does a similar job). This is a very important point, since (ideally) ALL rules and logic applied by the scanning engine should be customizable

- Ability to package rules, and to run selective sets of rules

- Ability to (re)run an analysis for 1 (one) type of issue

- Ability to (re)run an analysis for 1 (one) reported issue (or for a collection of the same issues)

- Ability to create unit tests that validate the existence of those rules

- Ability to create unit tests that validate the findings provided by the tools

The last points are very important since they fit into how developers work (focused on a particular issue which they want to ‘fix’ and move on into the next issue to ‘fix’)
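Here is a sketch of what such unit tests could look like with Python’s standard `unittest`. The `scan` function is a deliberately naive stand-in for a real tool’s API (it just looks for `eval(` in each line); the interesting part is the shape of the tests: one pins a finding on known-bad code, the other confirms the finding disappears once the code is fixed.

```python
import unittest


def scan(source):
    """Stand-in for a real scanner API: reports every line that calls eval()."""
    return [
        {"rule": "eval-injection", "line": number}
        for number, line in enumerate(source.splitlines(), start=1)
        if "eval(" in line
    ]


class EvalRuleTests(unittest.TestCase):
    def test_rule_reports_known_bad_code(self):
        findings = scan("x = input()\nresult = eval(x)\n")
        self.assertEqual(findings, [{"rule": "eval-injection", "line": 2}])

    def test_rule_is_silent_after_fix(self):
        self.assertEqual(scan("x = input()\nresult = int(x)\n"), [])


suite = unittest.defaultTestLoader.loadTestsFromTestCase(EvalRuleTests)
outcome = unittest.TextTestRunner(verbosity=0).run(suite)
```

Tests like these fit the developer workflow: fix one issue, re-run the one test for it, move on to the next.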

3.5 Scan configuration capabilities: [Operational Criteria]

This includes the following capabilities:

- Ability to schedule scans: Scans are often scheduled after nightly builds, some other times they are scheduled when the CPU usage is at its minimum. Therefore, it might be important for the user to be able to schedule the scan to run at a particular time. For SaaS based analyzers, the vendor should indicate the allowed window of submitting code or binaries to scan.

- **Ability to view real-time status of running scans:** some scans would take hours to finish; it would be beneficial and desirable for a user to be able to see the scan’s progress and the weaknesses found thus far. For SaaS based analyzers, the vendor should be able to provide an accurate estimate of the results delivery.

- **Ability to save configurations and re-use them as configuration templates:** Often a significant amount of time and effort is involved in optimally configuring a static code analyzer for a particular application. An analyzer should provide the user with the ability to save a scan’s configuration so that it can be re-used for later scans.

- **Ability to run multiple scans simultaneously:** Organizations that have many applications to scan will find the ability to run simultaneous scans to be a desirable feature.

- **Ability to support multiple users:** this is important for organizations which are planning to rollout the analyzer to be used by developers checking their own code. It is also important for organizations which are planning to scan large applications that require more than one security analyst to assess applications concurrently.

- [Static Analysis Criteria] **Ability to perform incremental scans:** incremental scans prove helpful when scanning large applications multiple times; it could be desirable to scan only the changed portions of the code, which will reduce the time needed to assess the results.
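A rough sketch of the simplest form of incremental scanning: remember a content hash per file and only re-scan files whose hash changed. File names and contents are made up, and this ignores an important complication (a change in one file can alter findings in its callers), so treat it as an illustration of the idea rather than a complete approach.

```python
import hashlib


def digest(text):
    """Content hash used to detect changes between scans."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def changed_files(files, previous):
    """files: {path: content}; previous: {path: digest} saved by the last scan."""
    current = {path: digest(content) for path, content in files.items()}
    changed = sorted(path for path, value in current.items()
                     if previous.get(path) != value)
    return changed, current


first_build = {"a.py": "print(1)", "b.py": "print(2)"}
to_scan, state = changed_files(first_build, {})

second_build = {"a.py": "print(1)", "b.py": "print(3)", "c.py": "pass"}
to_rescan, state = changed_files(second_build, state)
```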

DC Comment: the ability to perform incremental scans is not really a ‘configuration’ but it is a ‘capability’

DC Comment: On the topic of deployment I would also add a chapter/sections called:

“3.x Installation workflow [Operational Criteria]

There should be detailed instructions of all the steps required to install the tool. Namely how to go from a

a) clean VM with XYZ operating system installed, to

b) tool ready to scan, to

c) scan completed”

“3.x Scanning requirements [Static Analysis Criteria]

There should be detailed examples of what is required to be provided in order for a (or THE optimal) scan to be triggered. For example some scanners can handle a stand-alone dll/jar , while others need all dependencies to be provided. Also the scanners that do compilation tend to be quite temperamental when the scanning server is not the same as the normal compilation/CI server”


3.6 Testing Capabilities: [Static Analysis Criteria]

DC Comment: In my view this whole section (3.6) should be restructured to match the types of analysis that can be done with static analysis tools.

For example XSS, SQLi, File traversal, Command Injection, etc… are all ‘source to sink’ vulnerabilities, where what matters is the tool’s ability to follow tainted data across the application (and the ability to add new sources and sinks).

What I really feel we should be doing here is to map out the capabilities that are important for a static analysis tool, for example:

- Taint propagation (not all do this, like FxCop)

  - Intra-procedure

  - Inter-procedure

- **Handling of Collections, setters/getters, Hashmaps** (for example is the whole object tainted or just the exact key (and for how long))

- Reflection

- **Event driven flows** (like the ones provided by ASP.NET HttpModules, ASP.NET MVC, Spring MVC, etc…)

- Memory/objects manipulations (important for buffer overflows)

- String Format analysis (i.e. what actually happens in there, and what is being propagated)

- String Analysis (for regex and other string manipulations)

- Interfaces (and how they are mapped/used)

- **Mapping views to controllers**, and more importantly, mapping tainted data inserted in model objects used in views

- **Views nesting** (when a view uses another view)

- Views use of non-view APIs (or custom view controls/taglibs)

- Mapping of Authorization and Authentication models and strategies

- Mapping of internal methods that are exposed to the outside world (namely via WEB and REST services)

- Join traces (this is a massive topic and one that when supported will allow the post-scan handling of a lot of the issues listed here)

- Modelling/Visualization of the real size of Models used in MVC apps (to deal with Mass-Assignment/Auto-binding), and connecting them with the views used

- Mapping of multi-step data-flows (for example data in and out of the database, or multi-step forms/workflows). Think reflected SQLi or XSS

- Dependency injection

- AoP code (namely cross cuttings)

- Validation/Sanitisation code which can be applied by config changes, metadata or direct invocation

- Convention-based behaviours, where the app will behave in a particular way based on how (for example) a class is named

- **Ability to consume data from other tools** (namely black-box scanners, Threat modelling tools, Risk assessment, CI, bug tracking, etc.), including other static analysis tools

- **List the type of coding techniques that are ‘scanner friendly’**, for example an app that uses hashmaps (to move data around) or has a strong event-driven architecture (with no direct connection between source and sink) is not very static analysis friendly

- ….there are more, but hopefully this makes my point….

As you can see, the list above is focused on the capabilities of static analysis tools, not on the types of issues that they ‘claim’ can be found.

All tools say they will detect SQL injection, but what is VERY IMPORTANT (and what matters) is the ability to map/rate all these ‘capabilities’ to the application being tested (i.e. to ask the question ‘**can vuln xyz be found in the target application given that it uses Framework XYZ and is coded using Technique XYZ**’).

This last point is key, since most (if not all) tools today only provide results/information about what they found and not what they analyzed.

I.e. if there are no findings of vuln XYZ, does that mean that there are no XYZ vulns in the app? Or that the tool was not able to find them?

In a way what we need is for tools to also report back the level of assurance that they have in their results (i.e. based on the code analysed, its coverage and current set of rules, how sure is the tool that it found all issues?)
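
One way to picture this is a per-category result that separates "analysed and found nothing" from "not analysed at all". The sketch below is only an illustration under assumptions of my own: the `CategoryResult` fields (entry points discovered, entry points traced, rules loaded) and the `Verdict` names are hypothetical, and a real tool would need much better coverage measures.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    FOUND = "issues found"
    NONE_FOUND = "analysed, no issues found"
    NOT_ANALYSED = "not analysed"


@dataclass
class CategoryResult:
    """What the tool did for one vulnerability category, not only what it found."""
    category: str
    findings: int = 0
    entry_points_total: int = 0   # entry points discovered in the app
    entry_points_traced: int = 0  # entry points actually followed
    rules_loaded: int = 0         # rules applicable to this category

    @property
    def coverage(self) -> float:
        """Fraction of discovered entry points that were traced."""
        if self.entry_points_total == 0:
            return 0.0
        return self.entry_points_traced / self.entry_points_total

    def verdict(self) -> Verdict:
        if self.findings > 0:
            return Verdict.FOUND
        if self.rules_loaded == 0 or self.entry_points_traced == 0:
            return Verdict.NOT_ANALYSED
        return Verdict.NONE_FOUND


sqli = CategoryResult("SQL Injection", findings=0,
                      entry_points_total=40, entry_points_traced=10,
                      rules_loaded=12)
xss = CategoryResult("Cross-site Scripting", findings=0,
                     entry_points_total=40, entry_points_traced=0,
                     rules_loaded=8)
```

With something like this in the report, zero XSS findings would be shown as "not analysed" rather than silently read as "no XSS", and zero SQLi findings would come with the caveat that only a quarter of the entry points were traced.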

Scanning an application for weaknesses is an important functionality of the analyzer. It is essential for the analyzer to be able to understand, accurately identify and report the following attacks and security weaknesses.

- API Abuse

- Application Misconfiguration

- Auto-complete Not Disabled on Password Parameters

- Buffer Overflow

- Command Injection

- Credential/Session Prediction

- Cross-site Scripting

- Denial of Service

- Escalation of Privileges

- Insecure Cryptography

- Format String

- Hardcoded Credentials

- HTTP Response Splitting

- Improper Input Handling

- Improper Output Encoding

- Information Leakage

- Insecure Data Caching

- Insecure File Upload

- Insufficient Account Lockout

- Insufficient Authentication

- Insufficient Authorization

- Insufficient/Insecure Logging

- Insufficient Password Complexity Requirements

- Insufficient Password History Requirements

- Insufficient Session Expiration

- Integer Overflows

- LDAP Injection

- Mail Command Injection

- Null Byte Injection

- Open Redirect Attacks

- OS Command Injection

- Path Traversal

- Race Conditions

- Remote File Inclusion

- Second Order Injection

- Session Fixation

- SQL Injection

- URL Redirection Abuse

- XPATH Injection

- XML External Entities

- XML Entity Expansion

- XML Injection Attacks

**4. Product Signature Update:** [Operational Criteria]

Product signatures (AKA rules or checkers) are what the static code analysis analyzer uses to identify security weaknesses. When choosing a static analysis analyzer, one should take into consideration the following:

4.1 Frequency of signature update:

Providing frequent signature updates to a static code analysis Tool [analyzer] ensures the analyzer’s relevance to the threat landscape. Hence, it is important to understand the following about an analyzer’s signature update:

- Frequency of signature update: whether it is periodic, on-demand, or with special subscription, etc.

- Relevance of signatures to evolving threats: Information must be provided by the vendor on how the product’s signatures maintain their relevance to newly evolving threats.

4.2 User signature feedback:

The analyzers must provide a way for users to submit feedback on bugs, flawed rules, rule enhancements, etc.

5. Triage and Remediation Support: [Static Analysis Criteria]

A crucial factor in a static code analysis Tool [analyzer] is the support provided in the triage process and the accuracy and effectiveness of the remediation advice. This is vital to the speed with which a finding is assessed and remediated by the development team.

**5.1 Finding Meta-Data:**

Finding meta-data is the information provided by the analyzer around the finding. Good finding meta-data helps the auditor or the developer to understand the weakness and decide more quickly whether it is a false positive. The analyzer should provide the following with each finding:

- Finding Severity: the severity of the finding with a way to change it if required.

- Summary: explanation of the finding and the risk it poses if exploited.

- Location: the source code file location and the line number of the finding.

- Data Flow: the ability to trace tainted data from a source to a sink and vice versa.

DC Comment: The tool should provide as much as possible (if not all) data that it created (for each issue reported, and the issues NOT reported). There should be a mode that allows the use of the internal representations of the analysis performed, and all the rules that were triggered/used

5.2 Meta-Data Management:

- The analyzer should provide the ability to mark a finding as false positive.

- Ability to categorize false positives. This enforces careful consideration before marking a finding as false positive. It also provides the opportunity to understand common sources of false positives, which could help in optimizing the results.

- Findings marked as false positives should not appear in subsequent scans. This helps avoid repeating the same effort on subsequent scans.

- The analyzer should be able to merge/diff scan results. This becomes a desirable feature if/when the application is re-scanned: the analyzer should be able to append the results of the second scan to the first one.

- The vendor should be able to indicate whether the analyzer supports the ability to define policies that incorporate flaw types, severity levels, frequency of scans, and grace periods for remediation.
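
The false-positive and merge/diff requirements above both depend on being able to recognise the same finding across two scans. A minimal sketch, assuming a finding can be identified by a fingerprint that ignores line numbers (so it survives unrelated edits), could look like this. The `Finding` fields, `fingerprint` and `diff_scans` are hypothetical names for illustration only.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str
    file: str
    function: str
    sink: str
    line: int  # kept for display only, not part of the fingerprint

    def fingerprint(self) -> str:
        """Stable identifier that does not change when lines shift."""
        key = "|".join((self.rule_id, self.file, self.function, self.sink))
        return hashlib.sha256(key.encode("utf-8")).hexdigest()[:16]


def diff_scans(old, new, suppressed):
    """Compare two scans.

    suppressed maps a fingerprint to the false-positive category chosen
    by the auditor; suppressed findings are hidden from the new results.
    """
    old_ids = {f.fingerprint() for f in old}
    new_ids = {f.fingerprint() for f in new}
    visible = [f for f in new if f.fingerprint() not in suppressed]
    return {
        "new": [f for f in visible if f.fingerprint() not in old_ids],
        "still_open": [f for f in visible if f.fingerprint() in old_ids],
        "fixed": [f for f in old if f.fingerprint() not in new_ids],
    }
```

What goes into the fingerprint is the important design choice: too little and distinct findings collapse into one, too much (such as the line number) and every refactoring resurrects old false positives. This is worth asking vendors about directly.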

5.3 Remediation Support:

- The analyzer should provide accurate and customizable remediation advice.

- Remediation advice should be illustrated with examples written in the same programming language as the finding.

DC Comment: Ability to extend the reports and Join traces is also very important

6. Reporting Capabilities: [Operational Criteria]

The analyzer’s reporting capability is one of its most visible functionalities to stakeholders. An analyzer should provide different ways to represent the results based on the target audience. For example, developers will need as much detail as possible in order to remediate the weakness properly and in a timely fashion. Upper management, however, might need to focus on the analysis’s high-level summary, or the risk involved, more than on the details of every weakness.

**6.1 Support for Role-based Reports:**

The analyzer should be able to provide the following types of reports with the ability to mix and match:

- Executive Summary: provides high-level summary of the scan results.

- Technical Detail Reports: provides all the technical information required for developers to understand the issue and effectively remediate it. This should include:

  - Summary of the issue that includes the weakness category.

  - Location of the issue including file name and line of code number.

  - Remediation advice which must be customized per issue and includes code samples in the language of choice.

  - Flow Details which indicates the tainted data flow from the source to the sink.

- Compliance Reports: Scanners should provide a report format that allows organizations to quickly determine whether they are in violation of regulatory requirements or other standards. These reporting capabilities should be considered if certain regulations are important to the organization. The following list provides some potentially applicable standards:

  - OWASP Top 10

  - WASC Threat Classification

  - CWE/SANS Top 25

  - Sarbanes-Oxley (SOX)

  - Payment Card Industry Data Security Standard (PCI DSS)

  - Health Insurance Portability and Accountability Act (HIPAA)

  - Gramm-Leach-Bliley Act (GLBA)

  - NIST 800-53

  - Federal Information Security Management Act (FISMA)

  - Personal Information Protection and Electronic Documents Act (PIPEDA)

  - Basel II

6.2 Report Customization:

The analyzer should be able to support report customization. At a minimum, the analyzer should be able to provide the following:

- Ability to include the auditor’s findings notes in the report.

- Ability to mark findings as false positives, and remove them from the report.

- Ability to change the report’s template to include the organization’s logo, header, footer, report cover, etc.

6.3 Report Formats:

The vendor should be able to enumerate the report formats they support (PDF, XML, HTML, etc.)

**7. Enterprise Level Support:** [Operational Criteria]

When choosing a static analysis analyzer for the Enterprise, one should take into consideration the ability to integrate the analyzer into various enterprise systems, such as bug tracking, reporting, risk management and data mining.

7.1 Integration into Bug Tracking Systems:

Vendors should be able to enumerate the supported bug tracking applications, in addition to how they are supported (direct API calls, CSV export, etc.)

DC Comment: More importantly: HOW is that integration done?

For example, if there are 657 vulnerabilities found, are there going to be 657 new bug tracking issues? or 1 bug? or 45 bugs (based on some XYZ criteria)?
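
To show why the grouping criteria matter, here is a small sketch that turns a flat list of findings into bug tracker tickets, grouped by a configurable set of fields. Everything in it is an assumption for illustration: findings are plain dictionaries with string values, and `group_into_tickets` is a hypothetical helper, not the API of any bug tracker or scanner.

```python
from collections import defaultdict


def group_into_tickets(findings, key_fields=("category", "file")):
    """Group findings that share the same values for key_fields.

    Each group becomes one ticket, so the choice of key_fields decides
    whether 657 findings become 657 bugs, 45 bugs or a single one.
    """
    groups = defaultdict(list)
    for finding in findings:
        key = tuple(finding[name] for name in key_fields)
        groups[key].append(finding)
    return [
        {"title": " / ".join(key) + f" ({len(items)} findings)",
         "findings": items}
        for key, items in sorted(groups.items())
    ]


findings = [
    {"category": "SQL Injection", "file": "Dao.java", "line": "10"},
    {"category": "SQL Injection", "file": "Dao.java", "line": "42"},
    {"category": "Cross-site Scripting", "file": "View.jsp", "line": "5"},
]
per_file = group_into_tickets(findings)
per_category = group_into_tickets(findings, key_fields=("category",))
one_each = group_into_tickets(findings, key_fields=("category", "file", "line"))
```

The same three findings produce two tickets when grouped by category and file, and three when the line number is added to the key. Which grouping is right depends on how the development team actually fixes issues (per sink, per file, per root cause), which is why the "how" of the integration deserves its own criteria.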

7.2 Integration into Enterprise Level Risk Management Systems:

Information security teams and organizations need to present an accurate view of the risk posture of their applications and systems at all times. Hence, the analyzer should provide integration into enterprise level risk management systems.

DC Comment: same as above, what is important here is to ask ‘how is it done?’

And the vendors that also sell those other products should provide details on how that integration actually happens (ironically, in a lot of cases, they don’t really have a good integration story/capabilities).

7.3 Ability to Aggregate Projects:

This pertains to the ability to add meta-data to a new scan. This data could be used to aggregate and classify projects, which could be used to drive intelligence to management. For example, this can help in identifying programming languages that seem to generate more findings, and thus in making better use of the training budget.

DC Comment: And how to integrate with aggregator tools like ThreadFix

Another example is to mark certain applications as “External Facing”, which triggers the analyzer to perform a more stringent predefined scan template.

DC Comment: this last paragraph should not be here (Enterprise support) and would make more sense in the ‘Customization section’
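
As a rough sketch of the aggregation described in 7.3, the snippet below groups scan results by a meta-data field and normalises the findings by code size. The scan dictionaries, their keys (`metadata`, `findings`, `loc`) and the function `findings_per_kloc` are hypothetical and only meant to show the kind of question that scan meta-data makes answerable.

```python
from collections import defaultdict


def findings_per_kloc(scans, group_by="language"):
    """Findings per 1000 lines of code, grouped by a meta-data field."""
    totals = defaultdict(lambda: {"findings": 0, "loc": 0})
    for scan in scans:
        bucket = totals[scan["metadata"][group_by]]
        bucket["findings"] += scan["findings"]
        bucket["loc"] += scan["loc"]
    return {
        name: round(1000 * t["findings"] / t["loc"], 2)
        for name, t in totals.items()
        if t["loc"] > 0
    }


scans = [
    {"metadata": {"language": "Java", "exposure": "internal"},
     "findings": 50, "loc": 100_000},
    {"metadata": {"language": "Java", "exposure": "external"},
     "findings": 30, "loc": 50_000},
    {"metadata": {"language": "PHP", "exposure": "external"},
     "findings": 120, "loc": 40_000},
]
by_language = findings_per_kloc(scans)
by_exposure = findings_per_kloc(scans, group_by="exposure")
```

The numbers are only as meaningful as the meta-data and the tool's coverage of each language, so a higher density for one language may say more about the scanner than about the developers.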
