The Human Cost of Staying First

In the invisible battlefields of the 20th century, information superiority determined national survival. We developed ways of dealing with information complexity, of recognizing “patterns in the noise.” This is the concept of “wizard thinking” that I will introduce later in the chapter. First comes the strategic imperative: information superiority.

Radio intelligence is the practice of listening in on someone else’s radio messages to gain information. That practice creates an invisible battlefield. The following two examples show the “winner take all” consequences involved.

Contrasting Fates Determined by Radio Intelligence (1941-1943)

U.S. Navy Rear Admiral, then Lieutenant Commander, Edwin T. Layton describes his arrival on station, December 7, 1941, in Hawaii:1

I dashed into the submarine base administration building and leaped up the stairs toward my second-floor office. Several officers were conferring in the corridor. Captain Willard Kitts, the staff gunnery officer, spotted me and called out, “Ah, here’s the man we should have been listening to all along.”

That was the day the U.S. Navy discovered that aircraft could indeed sink modern battleships. Captain Kitts, however, was pointing to something else: the attack had come as a surprise even though the U.S. Navy had been listening to, and interpreting, Japanese radio communications. The Pearl Harbor attack’s outcome shows the deadly consequence of failing to act correctly on radio signals intelligence.

Edwin Layton had been Admiral Kimmel’s intelligence officer. He stayed on to serve in that role for Admiral Chester Nimitz, the incoming commander of the Pacific Fleet. He maintained a good relationship with the Navy codebreaking unit called Station HYPO at Pearl Harbor in Hawaii.

Sixteen months later, on April 14, 1943, Layton brought Chester Nimitz an intercepted radio message that Station HYPO had just decrypted. It contained the travel itinerary of Admiral Yamamoto, the brains behind Imperial Japanese Navy strategy and commander of the Combined Fleet. Yamamoto’s tour of Japanese bases in the Solomon Islands would bring him within range of U.S. fighter aircraft.2

Four days later, on April 18, a squadron of P-38 fighters equipped with external belly tanks to extend their range intercepted and shot down Yamamoto’s plane, killing him.

During World War II, signals intelligence (primarily based on intercepted radio messages and analysis of messaging patterns) determined national survival. These invisible battles played out as “winner take all.”

Figure 1, “Marshal Admiral Yamamoto Isoroku saluting Naval pilots at Rabaul,” shows Admiral Yamamoto visiting naval pilots at Rabaul, April 18, 1943, a few hours before his death.3

The Invisible Battlefield Emerges (1903-1905)

This invisible battlefield emerged gradually, beginning with Japan’s modernization following Commodore Perry’s arrival in 1853.

On July 8, 1853, Commodore Matthew Perry steamed his four gunboats into Edo Bay (now Tokyo Bay) and fired his seventy-three cannons with blank shots in a show of force on behalf of U.S. President Millard Fillmore. Imperial Japan, over the next couple of years, was humiliated by the unequal treaties signed with the United States under Perry’s firepower.

Japan, over the next half century, set out to build a navy equal to the Western naval powers. Japan was successful as proven by the Russo-Japanese War. Japan’s Imperial Navy immobilized Russia’s Eastern Fleet in 1904 and annihilated Russia’s Baltic Fleet in 1905. Japan was now one of the Great Naval Powers.

Vice Admiral Kamimura Hikonojō (1903)

On December 28, 1903, Japan reorganized its naval forces because it was about to go to war with Russia. Admiral Tōgō Heihachirō took command of the new “Combined Fleet,” consisting of the First and Second battle fleets plus a Third Fleet held in reserve:4

- The First Fleet: all six battleships plus four protected cruisers (big guns)

- The Second Fleet: six armored cruisers plus four light cruisers (fast and maneuverable)

- The Third Fleet: lesser warships plus various obsolete warships (in reserve)

Vice Admiral Kamimura Hikonojō took command of the Second Fleet.5

Figure 2, “Left to right Funakoshi, Shimamura, Togo, Kamimura, Katō, Akiyama,” shows the Combined Fleet commanders on December 26, 1904.6

First Wartime Radio Traffic Analysis (1904)

On August 14, 1904, Admiral Kamimura and the Second Fleet waited in ambush for Russian Admiral Iessen’s three cruisers of the Vladivostok squadron. Kamimura’s radio-equipped warships had intercepted a Vladivostok squadron radio message. The Imperial Japanese Navy was thus the first to use radio traffic analysis as a technique in battle. After a three-hour gun battle, one Russian cruiser was sunk and the other two, badly damaged, were back in Vladivostok. Neither the Port Arthur nor Vladivostok warships ever left port again.7

This moment represented more than a tactical advantage. It marked the emergence of a pattern that would repeat throughout the 20th century: those who could extract meaningful intelligence through electromagnetic signals gained decisive strategic advantage. The “wizard thinking” that would later characterize computing pioneers began here, with the recognition that invisible transmissions contained patterns that, when properly interpreted, revealed intentions and capabilities that would otherwise remain hidden.

The Forgotten Best Seller (1909)

Homer Lea, a would-be American soldier of fortune, wrote The Valor of Ignorance, published in 1909. The book is often cited because he famously predicted the attack on Pearl Harbor as inevitable and provided maps. He also predicted the Japanese attack on the Philippines, again with maps published inside the book. He was quite accurate as to routes and how long it would take to complete the conquest.

The most remarkable characteristic of Lea’s forgotten book is how he described geopolitics as dynamic systems interacting with each other. He recognized and described patterns present through the next half century.

Figure 3, “Homer Lea frontispiece, 1909,” shows Lea as he presented himself to the world.8

Furthermore, two years later, his book The Valor of Ignorance was translated and sold in Japan.9

It reportedly went through twenty-four editions and sold twenty-six thousand copies in a month’s time. The Japanese were far from alienated by the book; they were flattered.

If not the Pearl Harbor prediction, what, then, is relevant in that long-forgotten book? He described a system where national borders are ever-shifting battlefields as the major powers contend for supremacy.

What is interesting is that Lea unintentionally described the invisible battlefield of radio intelligence. In ancient times, a warrior’s limits were set by his opponent’s sword. Here is how Lea explained that the same is true of international borders. The time of free colonial expansion was essentially complete, he said (at the time of his writing in 1909):10

The political frontiers of nations are never other than momentarily quiescent; shrinking on the sides exposed to more powerful military nations and expanding on frontiers where they come in contact with weaker states… The older the world grows and the more compact it becomes through man’s inventions, the more strenuous and continuous becomes this struggle. No longer is it possible for any Great Power to expect the expansion of its geographical area at the expense of aboriginal tribes or petty kingdoms alone, for they have now fallen within the sphere of some greater nation.

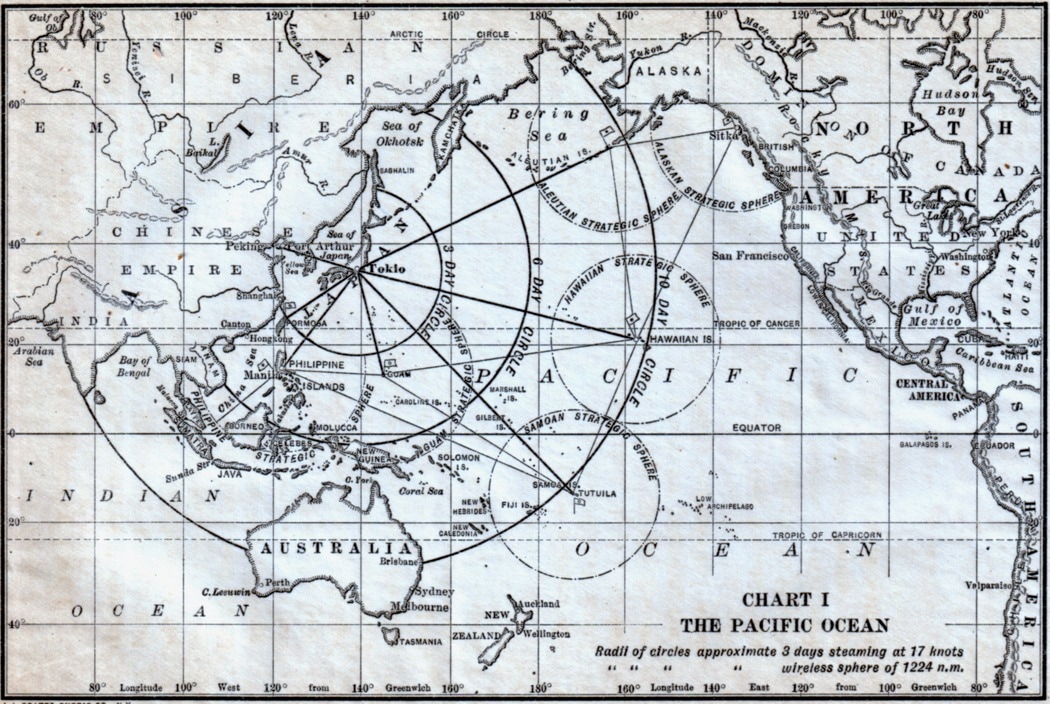

Figure 4, “Circles Marking Three Days’ Steaming,” is Lea’s chart showing the range available to naval forces within three days’ steaming of the destination. The larger circles indicate ranges for radio transmissions. His book is flawed, but this diagram is brilliant, showing the synergy between radio transmissions over vast distances and the coal-powered warships they guided.11

A Pattern of Thinking Emerges

While Lea was describing physical battles, his systems perspective inadvertently predicted the invisible battlefield where information, not just firepower, would determine outcomes.

With Homer Lea’s description in mind, consider Liebig’s Law of the Minimum from the field of agriculture. Justus von Liebig explained that plants might have many nutrients of sufficient quantity, but the plant’s growth would be limited by whatever nutrient is relatively the scarcest. The scarcest nutrient will always be the limiting factor for growth.

Just as a plant’s growth is limited by the scarcest ingredient, military advantage in the early 20th century was limited by information superiority. Radio intelligence was that limiting factor.
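For readers who think in code, Liebig’s Law can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `limiting_factor` and the nutrient figures are hypothetical, invented here to show the idea, and are not drawn from Liebig or from any source cited in this chapter.

```python
def limiting_factor(available, required):
    """Return (name, ratio) for the input that is scarcest relative to need.

    A ratio below 1.0 means that input falls short of what growth requires.
    """
    ratios = {name: available[name] / required[name] for name in required}
    scarcest = min(ratios, key=ratios.get)
    return scarcest, ratios[scarcest]


# Hypothetical quantities, in arbitrary units, chosen only for illustration.
available = {"nitrogen": 80.0, "phosphorus": 15.0, "potassium": 60.0}
required = {"nitrogen": 100.0, "phosphorus": 50.0, "potassium": 40.0}

name, ratio = limiting_factor(available, required)
print(f"Limiting factor: {name} ({ratio:.0%} of need)")
```

However plentiful the other inputs are, the result is governed by the single smallest ratio. Adding more potassium to the example changes nothing; only more phosphorus moves the outcome.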

This approach represents a distinctive mental framework that would later become a hallmark of computing pioneers. It involves three key elements:

- First, creating a mental model of the system as a whole (Lea’s vision of international borders as constantly shifting battlefields)

- Second, identifying the interacting forces within that system (the relative military power of competing nations)

- Third, pinpointing the critical limiting factor (what would later become information superiority through radio intelligence)

The power of this approach lies in its ability to extract insights that remain hidden to conventional analysis. While traditional military thinking focused on physical force, this systems perspective revealed an emerging battlefield in the electromagnetic spectrum that would ultimately prove decisive. This pattern of cognitive synthesis (creating holistic models; identifying key interactions; finding the true constraint) would become essential to the development of computing technology in the decades that followed.

Incidentally, the above analysis exposes a hidden assumption to be seen throughout Lea’s book: racist social Darwinism expressed as the assumption that imperial conquest is both a good thing and inevitable.

Critical New Technology: Radio (1903-1943)

I use this idea, Liebig’s Law of the Minimum pointing to the limiting factor, when looking at systems as a whole. I look for the controlling factor. Homer Lea describes physical battles, with the limiting factor being the ability (and time required) to bring overwhelming force to the point of conflict.

Radio changed the calculus. Unlike a telegraph wire that was strictly point-to-point, a radio transmission could be sent anywhere. An enemy could listen to the radio message as easily as its intended recipient. Military and diplomatic messages were therefore encrypted.

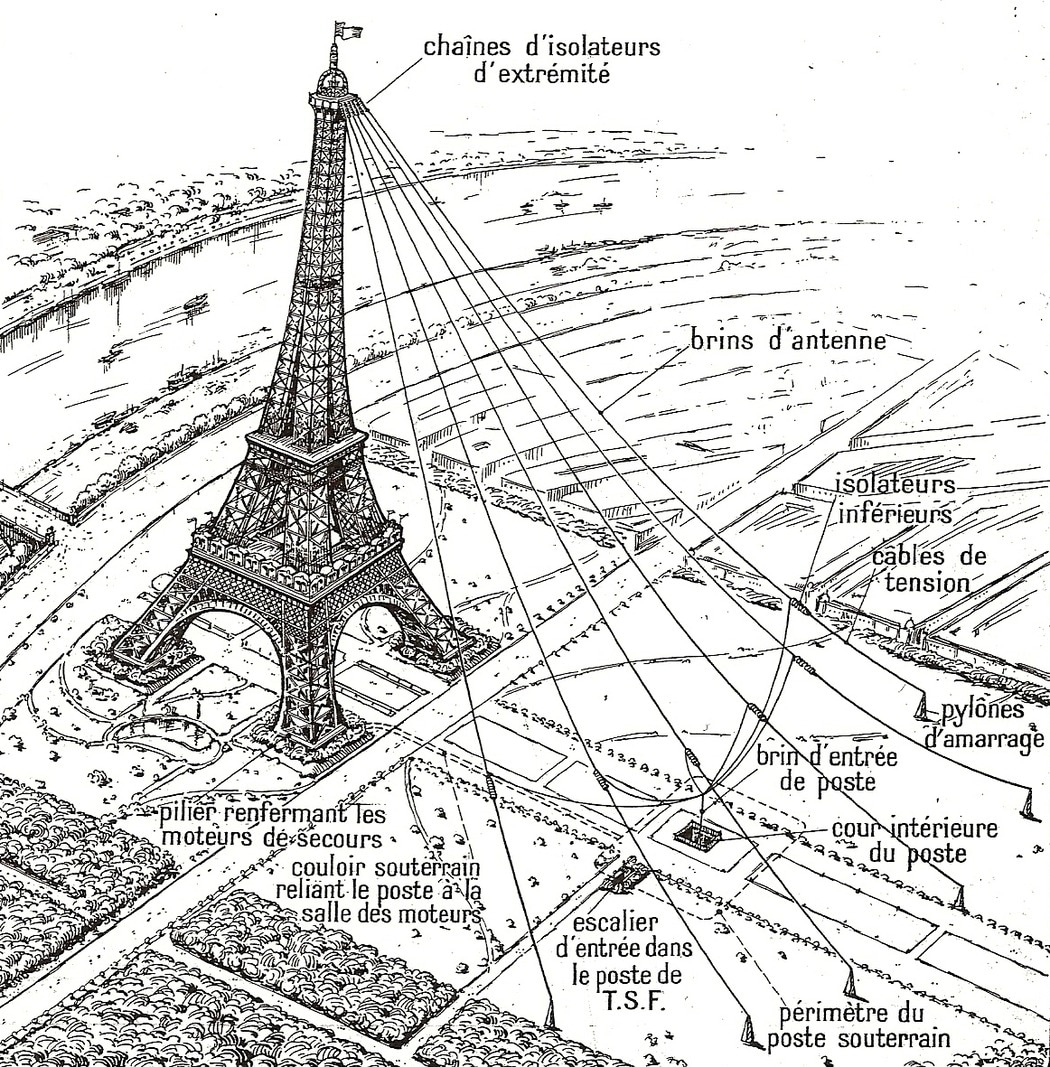

Figure 5, “Eiffel Tower antenna circa 1914,” shows the radiotelegraph station installed in 1908, then destroyed by the flood of 1911 and rebuilt. Six antenna strands descended to the ground of the Champ-de-Mars using tension cables connected to mooring pylons before entering through a square shaft into the underground wireless telegraphy station (marked “T.S.F.”). France used this antenna to intercept enemy radio messages.12

Homer Lea describes a system of competing powers. The successful attack on Pearl Harbor demonstrates the result of arriving with physically overwhelming force. In that system, the arrival of overwhelming force clearly identifies the controlling factor.

However, the invisible battleground represented by radio intelligence also has a controlling factor. The successful assassination of Admiral Yamamoto demonstrates the “winner take all” nature of superior radio intelligence.

As radio intelligence evolved from tactical advantage to strategic imperative, a second invisible battlefield emerged. Nuclear capabilities required far more sophisticated information superiority.

Second Invisible Battleground Emerges (1949)

In 1949, after two WB-29 Superfortresses failed, a third flown across the Pacific took on their secret mission. During the fourteen-hour return flight to Eielson Air Force Base in Alaska, the weather reconnaissance bomber passed through a radiation cloud from Josef Stalin’s first detonation of an atomic bomb. Twenty-two days later, President Truman announced the bomb detection to the American public.

Within the U.S. national security establishment, our mission became immediately clear: Stay first. Stay ahead, because if we fall behind, the cost is not just personal. It is global. We did not have the luxury of thinking about the human toll or the tedious grind of primitive computer programming. We were too busy winning the Cold War.

We called the situation “deterrence.” But deterrence demanded more than bombs. It required an unprecedented integration of radar systems, communications networks, and computing power to detect and intercept Soviet bombers before their payloads turned our cities to ash. The answer was SAGE—the Semi-Automatic Ground Environment—a continental air defense system built around the largest computers ever constructed (250 tons each). These mammoth machines processed radar data in real-time, directing both human pilots and Nike missiles. The situation changed war forever, and it would change us.

What of that historic WB-29? Her mission is well documented, but her fate is not. Figure 6, “Lady of the Lake,” shows the only remaining WB-29 at Eielson, a ghostly relic of the day the Cold War began. The “Lady of the Lake” continues peeking above the water to this day. Her mystery echoes the larger truth: staying first would require sacrifices we still do not fully understand.13

The abandoned WB-29 partially submerged in that Alaskan pond serves as both historical artifact and metaphor. It represents the visible remnants of hidden histories. Like this aircraft, the foundations of supercomputing remain partially submerged in classified histories, with only portions visible to casual observers. For the decades following the 1940s and 1950s, the origins of high-speed computing equipment remained a national secret. Like “Lady of the Lake,” only a few indications were visible of a bygone era we knew little about.

Necessity Influences Computing Architecture (1948)

Consider, for example, how naval cryptanalysis techniques directly influenced computer architecture design at Engineering Research Associates (ERA, described below), or how the detection of nuclear detonations created both the problem space and funding environment that made supercomputers necessary. These connections are not coincidental; they are the very patterns that a certain type of thinking, which I call “wizard thinking,” reveals.

Figure 7, “DEMON II at Fort Meade 1950,” shows one of the first ERA “contraptions” delivered, which ERA designed specifically to assist codebreakers in looking for patterns. “Contraptions” is an apt term for the high-speed computing device because another early ERA device was named GOLDBERG in honor of the humorously complicated machines depicted by cartoonist Rube Goldberg.14

Engineering Research Associates delivered the first five Demons to the National Security Agency in October 1948. Demon used data stored on a magnetic drum to perform a specialized form of table lookup in its search for codebreaking patterns. This was the first large-scale practical use of magnetic drums in the United States.15

The “Revolutionizers” (1952)

On August 15, 1952, the Armed Forces Security Agency (AFSA, the precursor to the NSA) explained how they evaluated computing equipment in a document marked “TOP SECRET CANOE, Security Information.” That explanation is a good description of the future “Nobody but Us” way of thinking.16

The critical point was that the AFSA’s analytical machines fall into two categories according to potentiality:

A. Labor-savers and extenders. Machines which replace men for operations which would be undertaken, at least in part, even without them.

B. Revolutionizers. Machines which make possible attacks which could not be undertaken without them.

AFSA went on to describe their heuristic for knowing the difference:

If we have a machine that makes it possible to undertake analytical attacks that we could not undertake, even partially, without it, we would seem to be slighting our mission if we allow it to spend any significant time idle or performing labor-saving operations.

If it has time available for a labor-saving operation, it should be so employed, but the moment this happens it should be a signal for the best brains to go into a huddle and devise some revolutionary employment to take over the time involved.

Maximum employment of the labor savers involves simply good AFSA-02 management in the ordinary sense; full time employment of the revolutionizers, however, involves something on an altogether different plane, inventiveness and scientific imagination and analytical competence of the highest order.

And the two require approaches from two different starting points; in the first case, the approach is “Which of these jobs can be done better by some machine?”; in the second, it should be “What can we get this machine to do?”

Six decades later, the NSA was still expressing a similar doctrine: it held revolutionizers on a scale that nobody else could match.

The NOBUS Doctrine Becomes Known (2013-2014)

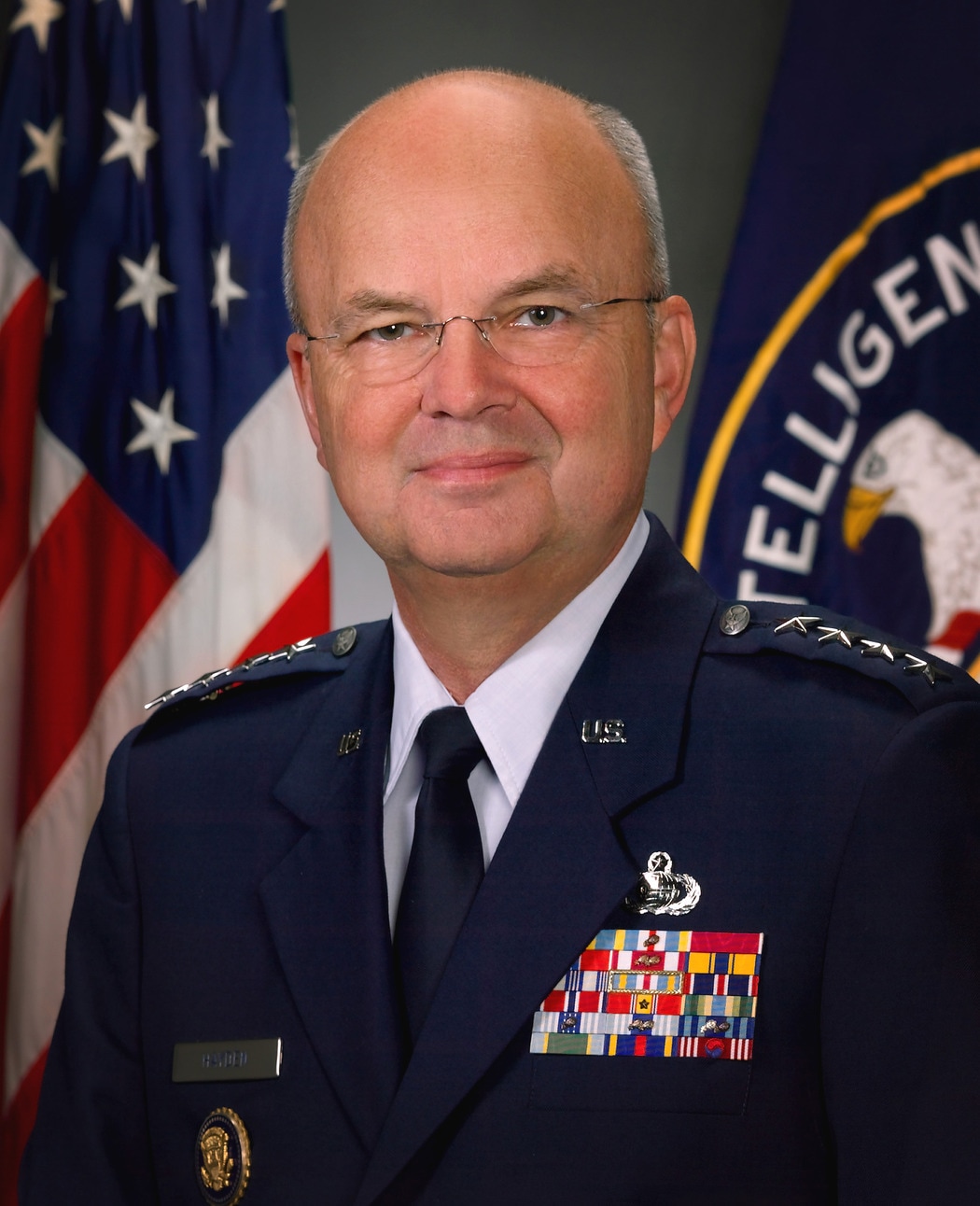

In 2013, U.S. Air Force General Michael Hayden, NSA Director from 1999 to 2005, explained the advantage of holding first place:17

If there’s a vulnerability here that weakens encryption but you still need four acres of Cray computers in the basement in order to work it you kind of think “NOBUS” [Nobody But Us] and that’s not a vulnerability we are ethically or legally compelled to try to patch—it’s one that ethically and legally we could try to exploit in order to keep Americans safe from others.

Figure 8, “General Michael Hayden, CIA Director, 2006,” is General Hayden’s official CIA portrait.18

Journalist Kim Zetter interviewed General Hayden in 2014. He again placed his explanation in terms of Cray supercomputers:19

“Nobody but us knows it, nobody but us exploits it,” he told me. “How unique is our knowledge of this or our ability to exploit this compared to others? … Yeah it’s a weakness, but if you have to own an acre and a half of Cray [supercomputers] to exploit it…”

For the first half of the 20th Century, radio intelligence was of overwhelming national importance, even though that fact remained secret for decades to follow. The outcome was “winner take all.”

However, with the Cold War, that doctrine evolved from “winner take all” to deterrence and prevention. When second place did not count, how could you be sure that you held first place? That was the emerging doctrine of “Nobody but Us.” If you could be absolutely sure that “nobody but us” had some capability, that was your assurance that you were in first place. That was your guarantee that you were on the winning side of “winner take all.”

Of all the computer systems in the world, Hayden referred to Cray supercomputers as enabling that “Nobody but Us” calculus. That story begins with the first three CRAY-1 systems delivered in 1976 and 1977. Cray Research, like most manufacturers, assigned sequential serial numbers to its machines. The first three CRAY-1 systems, serials 1, 2, and 3, each reveal a different facet of how supercomputing emerged from the imperatives of national security.

The First CRAY-1s: Three Machines That Changed Everything (1976-1977)

In the race to build the world’s fastest computer, there were no second-place trophies. Los Alamos Scientific Laboratory in particular needed the world’s best compute power. They had designed the first atomic bombs and, later, hydrogen bombs. Nobody knew if Josef Stalin was also building hydrogen bombs, and the scientists dared not be wrong.

A few days after Seymour Cray founded Cray Research in April 1972, he asked Les Davis to join him at his “niche firm intending to build the world’s fastest computers.” Les Davis, in turn, assigned mechanical engineer Dean Roush to figure out how to keep the machine cool. Machines were generally air cooled at that time, but Roush invented a freon refrigeration system. Les Davis proved to be the right person to take Seymour Cray’s designs and bring them to completion, ready to ship to a customer.20

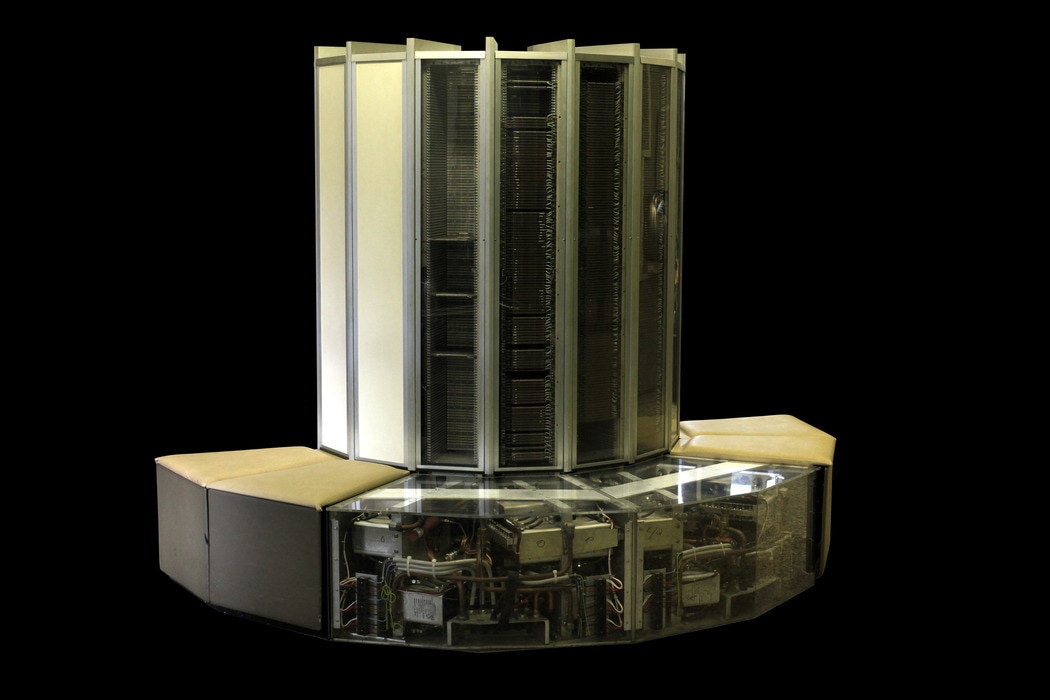

Figure 9, “CRAY-1 on display at EPFL, Lausanne, Switzerland,” shows a CRAY-1 system with see-through panels revealing the power supplies at the base and the columns above, each containing 144 modules.21

Cray Research delivered its first two CRAY-1 supercomputers in 1976. The first one went to the winner of a battle for bragging rights between two scientific laboratories. Both of these laboratories had been founded for nuclear weapons design. The second CRAY-1 marked a new chapter in the story that began in 1903 when Japan’s Imperial Navy reorganized its Combined Fleet and prepared for war with Russia.

The third CRAY-1, delivered in 1977, required something missing from the first two: software. This third sale was a cash purchase. Upon acceptance, Cray Research would be instantaneously profitable. But acceptance required a working operating system, FORTRAN compiler, assembler, and the mathematical libraries needed for atmospheric research. Cray Research had no software people.

Serial 1: Bragging Rights (1976)

The Los Alamos Scientific Laboratory (LASL, now named Los Alamos National Laboratory, LANL) derived from the Manhattan Project in late 1942. Its original purpose was to design the first atomic bombs. Its next purpose was to design hydrogen bombs. Los Alamos had a voracious need for scientists with security clearances, and for high-speed computing equipment to accomplish mathematical calculations and simulations of high-energy physics scenarios.

Dr. Ernest Lawrence founded the Radiation Laboratory at the University of California, Berkeley, in 1931. Dr. J. Robert Oppenheimer was working there in 1942 when he met with Brigadier General (later Lieutenant General) Leslie Groves, who was in charge of the Manhattan Project. Groves picked Oppenheimer as founding director of Project Y, the top-secret location later known as Los Alamos Scientific Laboratory, LASL.

Figure 10, “Robert Oppenheimer, Leslie Groves, Robert Sproul Present E Award, 1945,” shows (left to right), Oppenheimer, Groves, and Sproul presenting the coveted Army-Navy “E” award to the Los Alamos Laboratory on October 16, 1945. The Los Alamos Ranch House is in the background.22

Ten years later, in 1952, Dr. Ernest Lawrence and Dr. Edward Teller founded the University of California Radiation Laboratory, Livermore Branch, to directly compete with Los Alamos and spur innovation. In 1971 this laboratory got its own name, the Lawrence Livermore Laboratory (LLL).

My strongest memory of visiting the Lawrence Livermore Laboratory circa 1981 was the bathroom announcement. I was visiting from Seattle to teach a day of CRAY-1 system crash analysis. Whenever it was time to visit the restroom, my escort preceded me and loudly proclaimed, “An uncleared person is entering the area. Do not discuss any classified information at this time.” Upon departing, my escort swept out with “The uncleared person is now departing this area.” That experience brought questions to mind that I didn’t ask. I knew these security protocols were not bureaucratic theater. They reflected the reality that computational capability itself was classified. The machines we built were not just tools; they were strategic assets whose existence represented national advantage.

In 1976, both LASL and LLL were potential (and eager) customers of Cray Research. They competed with each other for the best scientists and best high-speed computing equipment. One way to attract the best scientists was to have the best equipment, that is, have the best “bragging rights” from a recruiting standpoint.

Seymour Cray already had a favorable reputation with the two scientific laboratories. Both felt that having the very first CRAY-1 on the market would provide ultimate bragging rights when it came to computing. Therefore, as either laboratory applied for government funding to purchase that CRAY-1, the other laboratory got that proposal squashed. Neither could get funding because of the other. Cray Research therefore had a working computer and no customers able to buy it.

Shy and reclusive Seymour Cray personally flew down to Los Alamos, New Mexico, and offered to give LASL the computer for free, for six months. At that point they could purchase it, lease it, or return it. Lawrence Livermore Laboratory, in northern California, could not figure out how to object to that agreement, since no funding was involved. LASL won that battle for bragging rights and received CRAY-1 serial 1.

There is an irony hidden in this story, in terms of laboratory names. On the east coast, the Massachusetts Institute of Technology (MIT) established its own Radiation Laboratory in 1940 as part of the war effort. However, “radiation” in MIT’s view meant radio waves. MIT’s Radiation Laboratory had to do with developing RADAR (RAdio Detection And Ranging) and microwave-transmission technology.

MIT also founded their Servomechanisms Laboratory in 1940, again in support of military research and development. In modern terms, this was a robotics laboratory, but electromechanical rather than involving digital electronics.

Out of the Servomechanisms and Radiation Laboratories came Project Whirlwind. When the Soviet Union exploded its first atomic bomb, Project Whirlwind suddenly became the Semi-Automatic Ground Environment (SAGE), the largest computers ever created.

Whirlwind/SAGE developed high-speed core memory, extreme hardware reliability, and the idea of designing computing systems as building blocks with an overall system design. Seymour Cray’s first design at the founding of Control Data Corporation was a “building block” that Seymour Cray, Jim Thornton, and team turned into the biggest (fastest) computers in the world.

The first CRAY-1 went to Los Alamos. The second CRAY-1, which was officially scrapped but in fact delivered to a Top Secret location, represents the story of radio intelligence that began in 1903 as Japan prepared for war with Russia.

Serial 2: Codebreaking for Signals Intelligence (1976)

It was the fact that radio messages were so useful, so immediate, and so public that led directly to creating the world’s most powerful computers. Digital and analog computers, and other high-speed computing devices, were invented for codebreaking as part of World War II and the Cold War.

As of 1976, the best and most capable place for decrypting the world’s radio messages was the U.S. National Security Agency (NSA). At some point during 1976, the NSA installed that second CRAY-1.

Figure 11, “NSA at Fort Meade, Maryland, circa 1950,” shows the relatively new NSA headquarters building. Fort Meade was chosen as being far enough from Washington, D.C., to be outside a direct nuclear blast, while remaining within commuting distance for employees.23

Serial 2 represented the direct continuation of the invisible battlefield that began in 1904 when Admiral Kamimura intercepted Russian radio messages. Now, seven decades later, the NSA needed unprecedented computing power to maintain the U.S. edge in that very same invisible battlefield.

I have a memory of the National Security Agency circa 1993 involving my escort. If I wandered too far from my escort whose badge was paired to my visitor badge, the walls started chiming “bong… bong… bong…” at which point my escort noticed I had gotten distracted reading too many of the plaques hanging on the walls in the hallway. Reading the walls, I learned, was discouraged. The other interesting quirk was that, at Fort Meade (the NSA), anything I carried in was examined, but on the way out each day I was simply waved through with no examination.

In the interest of absolute accuracy, I should tell you that I have never seen documentation stating that specifically CRAY-1 serial 2 went to the National Security Agency. I have only seen documentation stating serial 2 was scrapped due to central memory redesign. National Cryptologic Museum historian J.V. Boone states that in 1976 “the initial customers were the Atomic Energy Commission and NSA.”24 I have seen no documentation stating what serial number (if any) was marked on that NSA computer.

The National Security Agency (NSA), the presumed recipient of CRAY-1 serial 2, states that the original motivation for developing digital computers during and after World War II had to do with codebreaking:25

Cryptology is an extraordinary national endeavor where only first place counts… and it is still true that only first place counts.

During the late 1970s and early 1980s, our unofficial motto at Cray Research was, “We make the world’s fastest computers. Period.” From the first CRAY-1 deliveries in 1976, up through 1981, the CRAY-1 was the fastest. We felt no pressure of competition. Cray Research was unique. Our own follow-on machines, the Cray X-MP, Y-MP, and Cray-2, re-took the title from CDC’s Cyber 205 in 1983, and held the title through 1989.

This “Nobody but Us” doctrine was more than abstract policy. It was our daily reality at Cray Research. When we said we built “the world’s fastest computers, period,” this was not a marketing slogan. This was how we, as employees, saw ourselves. It meant we possessed capabilities no potential adversary could match.

In the Cold War calculus, this computational advantage translated directly to national survival. If we knew with certainty that only American scientists had access to simulation capabilities of this magnitude, we could be confident that we maintained our edge in nuclear weapon design, cryptanalysis, and other critical domains. The moment another nation, even one of our closest allies, could match our capabilities, our security margin disappeared. This was not just about prestige. It was about preserving the strategic advantage that, we hoped, prevented nuclear confrontation.

Serial 3: Instant Profit or Instant Bust (1977)

Margaret Loftus joined Cray Research in the late spring of 1976. Her reason for hire, as she understood it, was to consider what software might be appropriate for Cray computers. She had a completely blank slate to work with, since she was the entire software department. She could freely choose techniques and languages.

During her third day on the job, Seymour Cray stopped by to see Loftus. Cray told her, “you might want to read the contract I just signed.” The National Center for Atmospheric Research, NCAR, had agreed to purchase a CRAY-1. The contract detailed the many software requirements along with financial penalties for delay or non-performance.

Margaret Loftus felt blindsided. Why had they not mentioned this when she was hired? Upon reflection she realized that she was coming from Control Data (albeit from a foreign assignment in Australia), which was likely also competing for that same contract. She reminded herself that she had been getting bored at CDC and most definitely would not be bored here.

Cray Research was soon in the business of parallel processing, but in the sense of juggling customers. The newly established (in 1975) European Centre for Medium-Range Weather Forecasts, ECMWF, took delivery of serial 1 when it came away from Los Alamos after the free trial. Los Alamos took delivery of serial 4.

ECMWF and NCAR both did weather forecasting. Thus Margaret Loftus and her software team were supporting simultaneous machine acceptances in both Boulder, Colorado (NCAR) and Reading, UK (ECMWF).

Two years later, December 1979, I was a senior in Computer Science at the University of Washington in Seattle. My attitude took a nose dive and left me wondering if I really wanted to be in the computer industry or not. I decided the best way to find out was to get a job and see if I liked it.

I left school and received two job offers: one for $12,000 and one for $18,000 per year. That was essentially the difference in value between FORTRAN and assembly language at the time. Assembly language meant operating system support for Cray Research when Boeing Computer Services received CRAY-1 serial 20 near Seattle.

Human Cost Induces “Wizard Thinking”

The human cost during this early computing era was significant but rarely discussed. Marriages strained under impossible deadlines. Physical health deteriorated during marathon debugging sessions. Many brilliant minds burned out entirely, sacrificing personal wellbeing for national security imperatives. Yet those who thrived in this pressure-cooker environment discovered something extraordinary: cognitive frameworks that transformed impossible problems into solvable challenges.

These human costs were not incidental to technological progress but fundamentally shaped it. The mental frameworks that emerged—what we call wizard thinking—were not just technical innovations but survival adaptations. Those who could organize overwhelming complexity into meaningful patterns could withstand the pressure; those who could not often broke under it. By understanding these cognitive frameworks, you gain not just problem-solving tools but insights into sustaining innovation under extreme constraints. This lesson is as relevant today as it was during the Cold War.

This tension between innovation and human limitation became a defining characteristic of early supercomputing. In the classified environments where computing pioneers worked, mental burnout was often viewed as an acceptable casualty of progress.

We did not think of ourselves as wizards, although that is exactly what we were. In a time when computers were raw and unforgiving, and every instruction was a challenge, we conjured solutions from the air. Why? Because we had to. The stakes were not just about prestige. They were existential. Winning the Cold War meant staying first, and staying first demanded mastery of the craft at a level that consumed us.

We did not stop to measure the human cost. We were too focused on the mission, too absorbed in the details that we knew had to take care of themselves. We were the pioneers tasked with mastering our craft. That mastery was our way of transcending the mundane details of getting the design right or getting the software to actually work.

This is the essence of “wizard thinking”: mastery born of necessity, driven by a vision that leaves no room for mediocrity. At its core, wizard thinking is the cognitive ability to perceive meaningful patterns within overwhelming complexity and synthesize solutions across disciplinary boundaries. It combines rigorous analytical thinking with intuitive leaps that connect seemingly unrelated domains, not through magic, but through trained perception that reveals what others cannot see. What distinguished computing pioneers was not just their technical knowledge, but their ability to mentally organize chaotic information into coherent systems.

At the time, we saw ourselves simply as engineers, mathematicians, and programmers consumed by seemingly impossible problems. Looking back, what set us apart was that we had developed cognitive frameworks that let us see order in chaos.

I encountered my first CRAY-1 assembly listing during my job interview, when I asked to see one while waiting for the next interviewer. I found that I could read it. It was not an impenetrable wall of octal codes, but a coherent system with recognizable patterns. That listing helped assure me that I could take on the job being considered.

As I gained more experience, I learned that this was not magic; it was a trainable perception developed through immersion in complex problems where failure was not an option. The stakes of the Cold War had forged in us an ability to recognize meaningful structures within overwhelming complexity. This was the essence of wizard thinking.

Connecting the Invisible Threads

The path from the first CRAY-1 supercomputers in 1976 back to Admiral Kamimura’s radio intelligence triumph in 1904 is not a straight line. It is a web of interconnected developments driven by the imperative to stay first in the invisible battlefield.

When Seymour Cray founded his “niche company to build the world’s fastest computers” in 1972, he was extending a legacy that began with Engineering Research Associates (ERA) in 1946. ERA—formed by Navy cryptanalysts including Bill Norris—recognized that storage technology, not raw computing power, was the limiting factor in signals intelligence. Their pioneering work on magnetic drum memory and the ATLAS computer created foundations that Cray would later build upon at Control Data Corporation before launching his own company.

This technological lineage traces directly back to World War II, where Admiral Nimitz relied heavily on radio intelligence from Navy cryptanalysts to direct Pacific Fleet operations. These cryptanalysts had honed their skills during Prohibition, when Elizebeth Friedman broke bootlegger codes so effectively she could explain cryptanalysis to juries using only a chalkboard.

But the strategic importance of radio intelligence intertwined with another critical national concern: petroleum. As world navies converted from coal to fuel oil between 1910 and 1915, controlling petroleum reserves became vital to maintaining sea power. The 1920 San Remo Agreement and later petroleum politics shaped international relations. The U.S. provided 80% of world oil exports, which gave it extraordinary leverage over other nations’ naval capabilities.

These petroleum politics directly influenced U.S.-Japanese relations leading to World War II. After acquiring Pacific territories in 1898-1899 following the Spanish-American War, the U.S. posed an existential threat to Japan’s trade routes. Japan’s stunning victory over Russia in 1904-1905 demonstrated its naval prowess, setting the stage for decades of Pacific rivalry that would ultimately lead to Pearl Harbor.

The twin imperatives of maintaining superiority in both radio intelligence and strategic resources created the environment from which modern computing emerged. What began as tactical advantage in Admiral Kamimura’s 1904 ambush evolved through two world wars and the Cold War into the doctrine that would ultimately be named “Nobody But Us,” and would require the world’s fastest computers to maintain.

Summary

This chapter has traced the emergence of two invisible battlefields that shaped modern computing. The first, radio intelligence, emerged in 1904 when Admiral Kamimura demonstrated its decisive advantage by intercepting Russian naval communications. The second, nuclear deterrence, emerged when the Soviet Union detonated its first atomic bomb in 1949, creating an urgent need for computational power to maintain America’s strategic edge.

The “Nobody But Us” doctrine represents the culmination of these developments. “Nobody But Us” was the recognition that maintaining information superiority requires computational capabilities beyond the reach of potential adversaries. This imperative directly drove the development of the world’s fastest computers, including the CRAY-1 systems delivered in 1976-1977.

But staying first exacted a significant human cost, and this cost is rarely acknowledged in technical histories. The pressure-cooker environment created by national security imperatives pushed computing pioneers to their physical and mental limits. Those who thrived developed distinctive cognitive frameworks, “wizard thinking,” that transformed overwhelming complexity into manageable patterns.

In the chapters ahead, we will explore how this invisible battlefield evolved from Prohibition-era bootleggers through World War II codebreaking to the Cold War computing race. By revealing these connections, we will discover why supercomputing developed as it did, and why “Nobody But Us” expressed the philosophy that drove Cray Research to build the world’s fastest computers.

Japanese names in this and following chapters follow the Eastern convention of surname followed by given name.↩︎

Layton, Edwin T., Roger Pineau, and John Costello. “And I Was There”: Pearl Harbor and Midway—Breaking the Secrets. 1st Quill ed. New York: W. Morrow, 1985, 18.↩︎

Symonds, Craig L. Nimitz at War: Command Leadership from Pearl Harbor to Tokyo Bay. New York (N.Y.): Oxford University Press, 2024, 214.↩︎

Public domain photo. Last image alive↩︎

Evans, David C., and Mark R. Peattie. Kaigun: Strategy, Tactics, and Technology in the Imperial Japanese Navy, 1887–1941. 1. Naval Institute Press paperback ed. Annapolis, Md: Naval Inst. Press, 2012, 81.↩︎

Evans, Kaigun, 83.↩︎

Public domain photo. Combined Fleet↩︎

Layton, Edwin T., Roger Pineau, and John Costello. And I Was There: Pearl Harbor and Midway; Breaking the Secrets. 1. pr., Bluejacket books ed. Bluejacket Books. Annapolis, MD: Naval Inst. Press, 2006, 27.↩︎

Lea, Homer. The Valor of Ignorance: With Specially Prepared Maps. New York: Harper, 1909, frontispiece. Public domain photo.↩︎

Kaplan, Homer Lea, 155.↩︎

Lea, Valor, 29-30.↩︎

Lea, Valor, Chart I opposite p. 192. Public domain photo.↩︎

Public domain image. Eiffel Tower↩︎

Public domain photo. Lady of the Lake↩︎

Norberg, Arthur L. Computers and Commerce: A Study of Technology and Management at Eckert-Mauchly Computer Company, Engineering Research Associates, and Remington Rand, 1946-1957. History of Computing. Cambridge, Mass: MIT Press, 2005, 63.↩︎

Friedman, William F. “Report by the Inspector to the Director on Analytical Machine Employment, Dated 15 August 1952,” August 15, 1952. https://www.nsa.gov/Portals/75/documents/news-features/declassified-documents/friedman-documents/reports-research/FOLDER_261/41761479080061.pdf, 6-8.↩︎

Hayden quoted in Peterson, Andrea, “Why Everyone Is Left Less Secure When the NSA Doesn’t Help Fix Security Flaws.” Washington Post, October 4, 2013. https://www.washingtonpost.com/news/the-switch/wp/2013/10/04/why-everyone-is-left-less-secure-when-the-nsa-doesnt-help-fix-security-flaws/.↩︎

Public domain photo. General Hayden CIA↩︎

Zetter, Kim. Countdown to Zero Day: Stuxnet and the Launch of the World’s First Digital Weapon. New York: Crown Publishers, 2014, 389.↩︎

“The Ultimate Team Player.” Accessed February 1, 2025. https://www.designnews.com/motion-control/the-ultimate-team-player.↩︎

CRAY-1 on display at EPFL, Lausanne, Switzerland. Creative Commons license, image by Rama. https://en.wikipedia.org/wiki/File:Cray_1_IMG_9126.jpg↩︎

“This image comes from Los Alamos National Laboratory, a national laboratory privately operated under contract from the United States Department of Energy by Los Alamos National Security, LLC between October 1, 2007 and October 31, 2018. LANL allowed anyone to use it for any purpose, provided that the copyright holder is properly attributed. Redistribution, derivative work, commercial use, and all other use is permitted. LANL requires the following text be used when crediting images to it: (link) Unless otherwise indicated, this information has been authored by an employee or employees of the Los Alamos National Security, LLC (LANS), operator of the Los Alamos National Laboratory under Contract No. DE-AC52-06NA25396 with the U.S. Department of Energy. The U.S. Government has rights to use, reproduce, and distribute this information. The public may copy and use this information without charge, provided that this Notice and any statement of authorship are reproduced on all copies. Neither the Government nor LANS makes any warranty, express or implied, or assumes any liability or responsibility for the use of this information.” E Award↩︎

Boone, J. V. A Brief History of Cryptology. Annapolis, Md: Naval Institute Press, 2005, 102.↩︎

Boone, James V., and James J. Hearn. Cryptology’s Role in the Early Development of Computer Capabilities in the United States. Center for Cryptologic History, National Security Agency, Second Edition, 2021. https://www.nsa.gov/Portals/70/documents/about/cryptologic-heritage/historical-figures-publications/publications/technology/cryptologys-role-in-the-early-development-of-computer-capabilities-in-the-united-states.pdf, 3; 30 (first and last statements of the document).↩︎