Chapter 2: Architecture Flow

The traditional approach to Software Architecture has been to spend a long time up front defining the scope, laying out components, archetypes or layers, and sometimes even building parts of the architecture before work on the actual solution starts.

Of course, this is at odds with the idea of adaptive design that Agile brought us. If we are supposed to discover the details of the product we are building during the project itself and, following Lean principles, defer decisions to the last responsible moment, then Big Design Up Front can’t apply to Architecture either.

Indeed, what we know now is that every time we defer one of those technical decisions until we have learned more about the problem domain, our stakeholders’ expectations and our own solution, we create more possibilities. Every time we make a decision and start working on its premise, we bind ourselves a little to it. Over time, hard technical decisions become hard to change, as we always knew in Architecture, but instead of trying to get them right, we will start to make them late. Sometimes late means that we get lucky and never have to decide at all.

Another departure from the traditional approach is that, instead of trying to figure out the “right” architecture with our stakeholders at the beginning, we typically discuss the basics and then don’t press them for details they don’t know or can never be sure enough about. As we are supposed to discover the product features in detail over time, and to release early versions into production and learn from them, the architecture will probably need to be discussed and evaluated based on actual data from the design process and from runtime.

More than anything else, my strategy is to keep the conversation about architecture (mostly discussing quality attributes) open and intertwined with functional discussions, as both go hand in hand during the system’s development and usage. To do that, I will explore some lightweight alternative practices that can be added to standard planning and review meetings, as well as how far to advance at the product’s inception.

Emergent vs. Adaptive

Although this book’s title is Agile Architecture, chosen to convey the main ideas from which my model arises, I also like to use the term Adaptive Architecture, as many of these ideas can be used even in not-so-agile projects.

Emergent behavior

In Complex System Theory, emergence is the process by which a system composed of several smaller entities shows properties that are not explicitly present in its parts. In Software Development, we have used the idea of Emergent Behavior quite a few times to explain how smaller functions, objects or components build up to a system exhibiting features that go beyond the sum of its parts.

While the metaphor is nice and powerful, most software systems (with the exception of some Artificial Intelligence approaches) have no real emergent properties, because causality is too strong in them: we design the components and the way they interact with a very specific purpose.

The growth of software projects and products is better explained by looking at Complex Adaptive Systems, which, although usually based on emergent behavior, differ in having purpose and specialization (typically to foster survival). Adaptive Systems are complex in the dynamics of their interactions, and they don’t just happen, but self-organize to achieve a goal.

Adaptive Systems

In the highly multidisciplinary realm of Complexity Theory, we as software developers or architects join economists, politicians, ethologists, entomologists, and a plethora of other professionals dealing with loosely coupled relations and varying contexts.

Software is adaptive from the development perspective, as development teams are made up of humans engaged in a creative process, surrounded by changing conditions, subject to volatile restrictions and with unpredictable input (from other humans).

The Agile paradigm recognizes these factors and thus applies an empirical process in which all of them are constantly observed, following the scientific method of formulating hypotheses, trying to prove or discard them, and then building on that learning to formulate further hypotheses.

In that sense, Architecture should follow the same principle. To that end, I prefer to come up with architectural hypotheses only when there are concrete experiments to run in order to prove them, and such experiments hardly ever appear before actual functionality is built.

Quality-Driven Architecture

Whichever definition of Software Architecture you choose, Quality Attributes (also referred to as non-functional requirements) are always at the core of the field.

This is completely independent of the Agile approach, as seen in the many formal approaches used over time to base architectural decisions on these attributes.

In particular, I always liked the “Quality Attribute Workshop” (or QAW) from SEI’s Software Architecture in Practice 2. The QAW follows an eight-step approach to examine the Quality Attributes of a system during a workshop held at the project’s inception, where business and technical people discuss these matters. The steps are the following:

- QAW Presentation and Introductions

- Business/Mission Presentation

- Architectural Plan Presentation

- Identification of Architectural Drivers

- Scenario Brainstorming

- Scenario Consolidation

- Scenario Prioritization

- Scenario Refinement

What I like about this approach is that it pays attention to the business goals first, and then focuses on scenarios discussed among business and technical people.

The main problem I found, as you can easily imagine, is that the workshop is designed to be run at the start of the project, when everyone involved knows very little about the actual system being built beyond the conceptual model and its assumptions. For the most part, little to nothing has been built, and not a single feature has been used yet.

Iterative approach to Quality Attributes

Taking the previous idea and breaking it down into smaller, more frequent discussions that better fit an Agile team, I came up with a lighter plan to achieve the same effect.

First, we might have a brief discussion about high-level Architecture and Quality Attribute prioritization as part of the Agile Inception Workshop (more on this later in the “Practices” chapter). We won’t try to define too much at this point; the aim is only to have an overall idea and a starting strategy.

Then, we will set aside some time to discuss Quality Attributes in every Iteration (or Sprint) Planning meeting; not as an aside, but potentially around every User Story (or Backlog Item). So, for each small feature (or the bigger feature it belongs to), the team will review what the quality expectations are.

For example, given a User Story relating to a Book Purchase, they will analyze (after the functional details) with the stakeholders:

- Is there any specific performance or security consideration?

- Is this something we will need to change frequently, where maintainability will be important?

- Are there any specific regional or accessibility concerns?

Of course, some of these concerns might have appeared previously (in previous User Stories), and some of them might have become part of the Team’s Definition of Done 3.

Whenever one of these issues appears, the Team (together with the stakeholders involved) might take note of it in an artifact like this:

Response Time

Context:

Once a Purchase is confirmed, the user should not wait for confirmation.

Metric:

After confirmation, “Keep shopping” page should take no more than 0.4 seconds to render.

Strategy:

Operations are queued, and any eventual failure will be reported by email and site notifications.

Notice that in the example above the decision is generalized from Book Purchase to “Purchase operations” in general. Ideally, this card should be posted on a board in the Team Room (instead of going to die on a Wiki Page) so that it is seen frequently. It might also generate some specific technical tasks under the Book Purchase user story that will later be reused, but this decision and the corresponding implementation are directly tied to a feature, and will be tested and reviewed in that context rather than against a conceptual model.
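
If the team also wants to keep the card next to the code, it could be captured as a small data structure. The following is only a minimal sketch: the `QualityCard` class and its `render` method are hypothetical names introduced for illustration, and the fields simply mirror the card shown earlier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QualityCard:
    """One quality attribute note, mirroring the fields of the paper card."""
    attribute: str  # e.g. "Response Time"
    context: str    # the situation the expectation applies to
    metric: str     # the measurable expectation
    strategy: str   # the approach the team agreed on

    def render(self) -> str:
        """Return the card as plain text, ready to print and post on the board."""
        return (
            f"{self.attribute}\n"
            f"Context: {self.context}\n"
            f"Metric: {self.metric}\n"
            f"Strategy: {self.strategy}"
        )


response_time = QualityCard(
    attribute="Response Time",
    context="Once a Purchase is confirmed, the user should not wait for confirmation.",
    metric="After confirmation, 'Keep shopping' page should take no more than 0.4 seconds to render.",
    strategy="Operations are queued, and any eventual failure will be reported by email and site notifications.",
)

print(response_time.render())
```

A printed copy still belongs on the board; the structure only makes it easier to keep the wording of the card and the related tests in one place.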

The “Metric” field in the card should become an automated test which can be executed either continuously (on every commit) or in a nightly profile (for load or performance tests that require long-running or heavy processes, which you don’t want straining your network during the day).
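
As a rough illustration of turning the metric into a test, the sketch below checks the 0.4 second budget from the Response Time card. It rests on several assumptions: `render_keep_shopping_page` is a hypothetical stand-in for whatever call renders the page in your system, `measure_seconds` is an illustrative helper, and a single timing sample is used only to keep the example short.

```python
import time

MAX_RENDER_SECONDS = 0.4  # taken from the "Metric" field of the card


def render_keep_shopping_page() -> str:
    """Hypothetical stand-in for the real rendering call; replace with your own."""
    return "<html><body>Keep shopping</body></html>"


def measure_seconds(action) -> float:
    """Run `action` once and return the elapsed wall-clock time in seconds."""
    start = time.perf_counter()
    action()
    return time.perf_counter() - start


def test_keep_shopping_renders_within_budget() -> None:
    """Fail when rendering exceeds the budget agreed on the card."""
    elapsed = measure_seconds(render_keep_shopping_page)
    assert elapsed <= MAX_RENDER_SECONDS, (
        f"render took {elapsed:.3f}s, budget is {MAX_RENDER_SECONDS}s"
    )


if __name__ == "__main__":
    test_keep_shopping_renders_within_budget()
    print("response time within budget")
```

A single measurement is noisy, so a real suite would probably take several samples and run against an environment close to production, which is one reason the heavier variants fit the nightly profile better.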

Business Value

Since we recognize that delivering value early and often is what makes agility attractive to our customers, we have to be able to justify our technical decisions following the same principle.

In that sense, non-functional requirements should be derived from the same kind of experimentation we use to build the most appropriate product over time, by staying lean and refusing to include features “just in case” some possible event happens.

We strive to make the architectural discussion with stakeholders, and the corresponding design decisions and implementation, continuous tasks, in the same way as analysis, functional design, coding, testing and deployment.

We want to have a permanent relationship between stakeholders and architecture (represented by the team, not necessarily by “architects”).

To do this, we have to develop a common language accessible to everyone, like other pattern languages. Among other ways to encourage this, I developed over time a deck of cards which finally became the “Architectural Tarot” (you can see the whole deck in Appendix I). The cards provide a visual approach that better engages non-technical stakeholders in architectural discussions.

We do this by using the deck not only during an Agile Inception or other kind of kick-start workshop, but frequently in iteration planning meetings, as we keep finding out about the non-functional requirements while we develop the product and start using it.

Architecture in an Agile development cycle

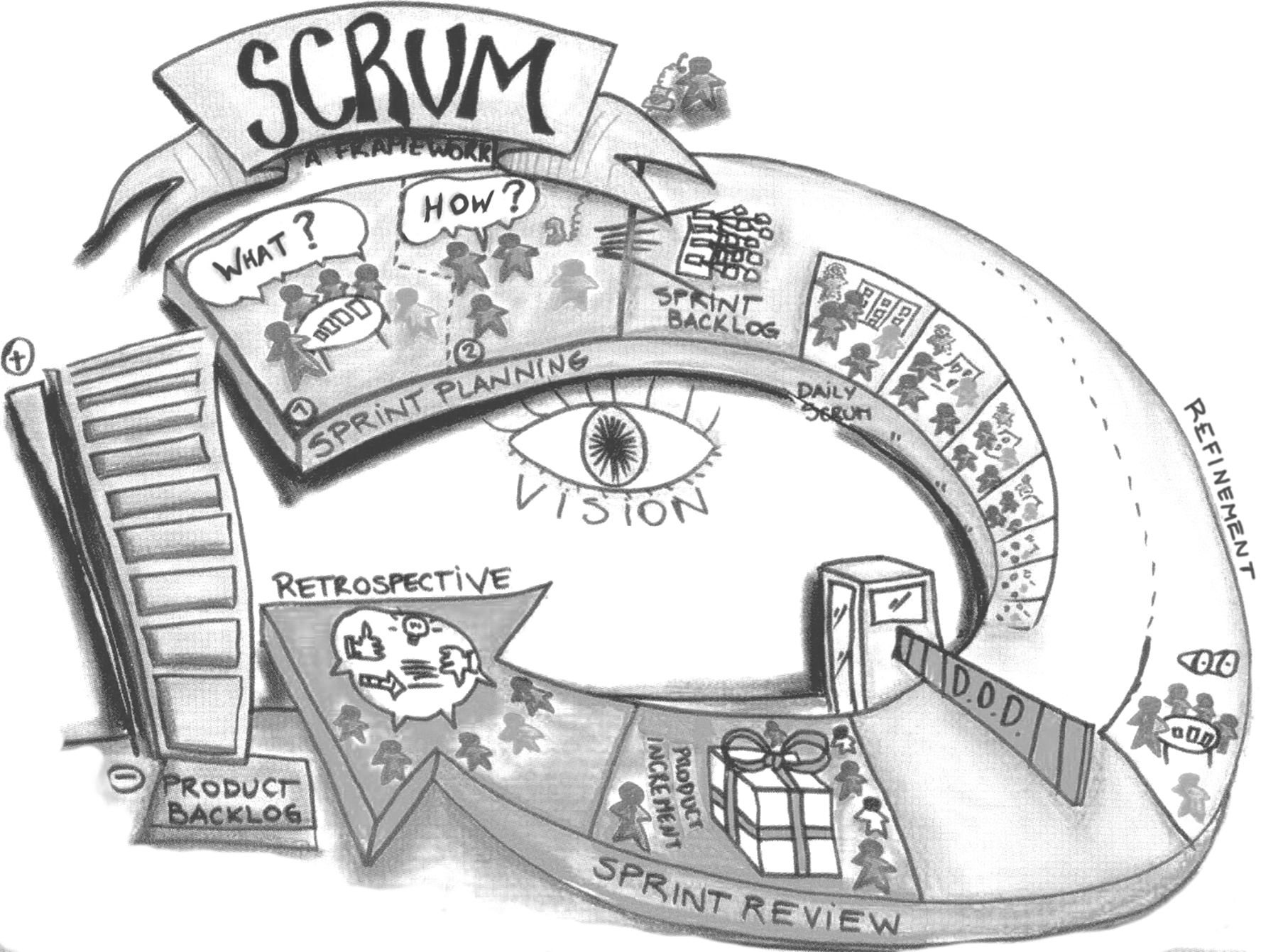

To better illustrate how architectural discussion gets integrated as part of the expected “osmotic communication” of an agile environment, I will use the Scrum diagram drawn by my friend and colleague Martin Alaimo:

Even if you are not following Scrum, this diagram shows a high-level view of most iterative flows. Below I list the Scrum names, each followed by a generic description which can apply to any other agile approach.

- Product Backlog - a dynamic list of prioritized work to do

- Sprint - an iteration (usually of fixed length)

- Planning - session at the start of each iteration

- Definition of Done - shared agreement on when an item is “Done”

- Review - session to evaluate the product increment at the end of the iteration

- Retrospective - continuous improvement session (usually on every iteration)

How can we weave architectural concerns into all of these?

Planning and Reviews

While discussing every item with stakeholders, the team can use the Architectural Tarot (or any other technique) to better understand the quality attributes involved.

Based on the expected behavior, the team might come up with some quality metrics which may become either (a) Acceptance Criteria for this particular item or (b) something to be added to the Definition of Done, as it will be expected in several different contexts.
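
One way to picture the choice between (a) and (b) is to count how many items a metric shows up on. The sketch below is an illustration rather than a rule from this model: the threshold of three items, the `classify_metrics` function, and the backlog items other than Book Purchase are all assumptions made up for the example.

```python
from collections import Counter

# Assumed threshold: a metric seen on this many items is treated as cross-cutting.
PROMOTION_THRESHOLD = 3


def classify_metrics(
    metrics_by_item: dict[str, list[str]],
    threshold: int = PROMOTION_THRESHOLD,
) -> tuple[set[str], dict[str, list[str]]]:
    """Split metrics into Definition of Done candidates and per-item acceptance criteria."""
    # Count each metric once per item, even if it was noted twice on the same item.
    counts = Counter(m for metrics in metrics_by_item.values() for m in set(metrics))
    done_candidates = {m for m, n in counts.items() if n >= threshold}
    acceptance = {
        item: [m for m in metrics if m not in done_candidates]
        for item, metrics in metrics_by_item.items()
    }
    return done_candidates, acceptance


# Hypothetical backlog: only "Book Purchase" comes from the text.
backlog = {
    "Book Purchase": ["response under 0.4s", "payment data encrypted"],
    "Gift Card Purchase": ["response under 0.4s"],
    "Subscription Renewal": ["response under 0.4s", "invoice emailed"],
}

done_candidates, acceptance = classify_metrics(backlog)
print(done_candidates)
print(acceptance)
```

The output is only a list of candidates. Whether a metric really moves into the Definition of Done remains a decision for the team and its stakeholders.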

Retrospectives

As part of the focus on improvement, the team can review how the architecture is doing so far, trying to identify potential problems and spot common excesses in time.

Some inductive reasoning questions I use for that are:

- What do we feel is too fragile so far?

- What is proving too hard to do?

- What can be simpler in our current architecture?

A few recommendations before closing this section:

- Keep the architectural ideas that come out of the retrospectives in view for the next planning session

- Beware of polluting the Definition of Done

- Remember the Definition of Done should be understood and agreed with the customers/stakeholders